Understanding Vector Databases for LLM Applications: A Deep Dive

In the rapidly evolving landscape of artificial intelligence, Large Language Models (LLMs) have emerged as transformative tools, capable of generating human-like text, answering complex questions, and performing a myriad of linguistic tasks. However, the full potential of these models is often unlocked not by the models alone but by the data infrastructure supporting them. A critical component of this infrastructure, especially for enhancing LLM capabilities, is the vector database. For those seeking a deeper understanding of vector databases for LLM applications, this article explores their mechanics, importance, and wide-ranging implications, showing how these specialized databases serve as the bedrock for more intelligent, context-aware, and efficient LLM deployments.

- What Are Vector Databases, and Why Do LLMs Need Them?

- How Vector Databases Work: The Core Mechanics

- Key Components and Essential Features of Modern Vector Databases

- Real-World Applications: Understanding Vector Databases for LLM Applications

- Advantages and Challenges of Implementing Vector Databases

- The Future of Vector Databases and LLM Applications

- Conclusion: Empowering the Next Generation of AI

- Frequently Asked Questions

- Further Reading & Resources

What Are Vector Databases, and Why Do LLMs Need Them?

At its core, a vector database is a database designed to store, manage, and query high-dimensional numerical vectors. Unlike traditional relational or NoSQL databases, which index scalar values or text strings, vector databases are optimized for similarity search based on the geometric proximity of vectors in a multi-dimensional space. This capability makes them natural partners for Large Language Models. LLMs, while powerful, operate on numerical representations of data: text (and, in multimodal systems, images or audio) is converted into numerical vectors known as embeddings, which for retrieval purposes are typically produced by a dedicated embedding model. Neural networks learn these representations during training, and the resulting embeddings capture the semantic meaning and contextual relationships of the original data.

The necessity for vector databases arises from the inherent limitations of LLMs when dealing with external, dynamic, or highly specific knowledge. Without a mechanism to access and incorporate up-to-date or proprietary information, LLMs are confined to the knowledge present in their training data, which can quickly become outdated or lack domain-specific nuance. Vector databases bridge this gap by efficiently storing vast collections of high-dimensional embeddings and retrieving semantically similar ones in real time. This retrieval capability is foundational for applications that require the LLM to draw on external knowledge bases, leading to more accurate, relevant, and contextually rich responses.

The Semantic Search Imperative

Traditional keyword-based search, which relies on matching exact terms or their lexical variations, often falls short in understanding the user's true intent or the semantic meaning behind a query. For instance, searching for "cars that save fuel" might not return articles about "efficient automobiles" if the exact keywords aren't present. Semantic search, powered by vector embeddings, transcends this limitation. By converting both the query and the knowledge base content into vectors, a vector database can find documents or data points whose embeddings are "close" in the vector space, signifying semantic similarity, even if they use different vocabulary.
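The limitation is easy to reproduce with a purely lexical score. This minimal sketch uses Jaccard word overlap as a hypothetical stand-in for a keyword matcher: it rates the two fuel-economy phrasings as completely unrelated, while rating a phrase with nearly the opposite meaning as fairly similar.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercase word tokens, a crude stand-in for a keyword index."""
    return set(re.findall(r"[a-z]+", text.lower()))

def keyword_overlap(query: str, doc: str) -> float:
    """Jaccard overlap of the two token sets (0.0 means no shared words)."""
    q, d = tokens(query), tokens(doc)
    union = q | d
    return len(q & d) / len(union) if union else 0.0

print(keyword_overlap("cars that save fuel", "efficient automobiles"))  # 0.0
print(keyword_overlap("cars that save fuel", "cars that burn fuel"))    # 0.6
```

Embedding-based search is designed to invert exactly this outcome: the first pair should land close together in vector space even though it shares no words.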

This capability is paramount for LLMs. Imagine asking an LLM a question about a very specific product or a recent event not covered in its training data. Without access to that information, the LLM would likely "hallucinate" an answer or simply state it doesn't know. By querying a vector database with an embedding of the user's question, the application can retrieve relevant, semantically similar chunks of information from an external knowledge base and pass them to the LLM, which uses this retrieved information as context to formulate an accurate and grounded response. This process, often referred to as Retrieval Augmented Generation (RAG), is a cornerstone of modern LLM applications, improving factual accuracy and reducing the likelihood of generating incorrect or irrelevant information.

Beyond Keyword Matching

The power of vector databases extends far beyond simple semantic search. Because embeddings can represent a wide array of data types—text, images, audio, video frames, user behavior patterns—vector databases enable a holistic approach to data retrieval and understanding. This means an LLM application isn't just limited to searching text documents; it can effectively search across a multi-modal data landscape. For example, an LLM could be tasked with finding an image that visually represents a textual description, or identifying audio snippets similar to a specific musical pattern, all facilitated by the underlying vector representations and the database's ability to efficiently query them.

The underlying principle is that similarity in vector space corresponds to similarity in meaning or content. This fundamental shift from exact matching to conceptual proximity allows for more intuitive and powerful interactions with data. It unlocks capabilities like content recommendation, anomaly detection, plagiarism checking, and even advanced data clustering, all of which can feed into and enhance the performance of LLMs by providing them with richer, more contextually relevant inputs. The ability to manage and query these high-dimensional representations efficiently is what sets vector databases apart and solidifies their role as a foundational technology for next-generation AI.

How Vector Databases Work: The Core Mechanics

Understanding the operational intricacies of a vector database is crucial for appreciating its role in LLM applications. The magic happens through a combination of data representation, spatial indexing, and advanced search algorithms. These elements work in concert to deliver lightning-fast similarity queries across massive datasets.

Embeddings: The Language of Vectors

At the heart of every vector database operation is the concept of an embedding. An embedding is a numerical representation of a piece of data (text, image, audio, etc.) in a high-dimensional vector space. These vectors are typically generated by specialized machine learning models, often neural networks, that are trained to map complex data into a dense vector where semantically similar items are located close to each other.

Example:

The phrase "king" might be embedded as [0.2, 0.4, 0.1, ..., 0.9].

The phrase "queen" might be embedded as [0.1, 0.3, 0.2, ..., 0.8].

The phrase "apple fruit" might be [0.8, 0.1, 0.0, ..., 0.2].

The phrase "car engine" might be [0.0, 0.9, 0.7, ..., 0.1].

Notice how "king" and "queen" are numerically close, reflecting their semantic relationship, while "apple fruit" and "car engine" are far apart. The dimensionality of these vectors can range from tens to thousands (e.g., 768, 1536, or even higher), depending on the embedding model used. The process of generating these embeddings is typically separate from the vector database itself, often involving dedicated embedding models like OpenAI's text-embedding-ada-002 or models from Hugging Face.

Vector Space and Similarity Metrics

Once data is transformed into embeddings, it exists within a multi-dimensional "vector space." The dimensions of this abstract space are latent features learned by the embedding model and are usually not individually interpretable. The core principle is that the geometric distance or angle between two vectors in this space reflects the semantic or contextual similarity of the original data they represent.

To quantify this similarity, vector databases employ various similarity metrics:

- Cosine Similarity: This is one of the most common metrics. It measures the cosine of the angle between two vectors. A cosine similarity of 1 indicates vectors pointing in the same direction, 0 indicates orthogonal vectors (typically read as unrelated), and -1 indicates vectors pointing in opposite directions. It's particularly effective when only the direction of a vector, not its magnitude, carries meaning.

- Euclidean Distance (L2 Distance): This measures the straight-line distance between two points (vectors) in Euclidean space. Shorter distances imply higher similarity. It's sensitive to vector magnitude.

- Dot Product: This sums the element-wise products of two vectors, which equals the product of their magnitudes and the cosine of the angle between them. A larger dot product typically indicates greater similarity. For normalized (unit-length) vectors, the dot product is identical to cosine similarity, which is why the two are often used interchangeably.

The choice of metric depends on the characteristics of the embeddings and the specific application, but they all serve the same goal: finding the "closest" vectors to a given query vector.
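The three metrics can be written in a few lines of plain Python. The 4-dimensional vectors below are hypothetical, assembled from the visible components of the "king", "queen", and "car engine" examples above with the elided middle dropped; real embeddings have hundreds or thousands of dimensions.

```python
import math

def dot(a, b):
    """Sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Euclidean length of a vector."""
    return math.sqrt(dot(a, a))

def cosine_similarity(a, b):
    """Cosine of the angle between a and b, in [-1, 1]."""
    return dot(a, b) / (norm(a) * norm(b))

def euclidean_distance(a, b):
    """Straight-line (L2) distance; smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def normalize(a):
    """Scale a vector to unit length."""
    n = norm(a)
    return [x / n for x in a]

# Hypothetical toy vectors.
king = [0.2, 0.4, 0.1, 0.9]
queen = [0.1, 0.3, 0.2, 0.8]
car_engine = [0.0, 0.9, 0.7, 0.1]

print(cosine_similarity(king, queen) > cosine_similarity(king, car_engine))    # True
print(euclidean_distance(king, queen) < euclidean_distance(king, car_engine))  # True
# For unit-length vectors, the dot product equals cosine similarity.
print(math.isclose(dot(normalize(king), normalize(queen)), cosine_similarity(king, queen)))  # True
```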

Indexing for Efficient Search (ANNS)

Searching for the exact nearest neighbor (Exact Nearest Neighbor Search, or ENNS) in a high-dimensional space is computationally intensive: a brute-force search must compare the query against every stored vector, so its cost grows linearly with the size of the collection. Comparing a query vector against millions or billions of stored vectors one by one is impractical for real-time applications. This is where Approximate Nearest Neighbor Search (ANNS) algorithms come into play.
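For reference, exact search is just a full scan. This sketch (the toy vectors and function names are hypothetical) scores every stored vector with cosine similarity and keeps the top k with a heap; the per-query cost grows with both the number of vectors and their dimensionality, which is what ANNS indexes are built to avoid.

```python
import heapq
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    d = sum(x * y for x, y in zip(a, b))
    return d / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def exact_knn(query, vectors, k):
    """Brute-force (exact) k-NN: score every stored vector and keep the k best.
    Each query costs O(N * d) for N stored vectors of dimension d."""
    scored = ((cosine(query, vec), vid) for vid, vec in vectors.items())
    return [vid for _, vid in heapq.nlargest(k, scored)]

store = {  # hypothetical toy vectors
    "doc_a": [0.9, 0.1, 0.0],
    "doc_b": [0.8, 0.2, 0.1],
    "doc_c": [0.0, 0.1, 0.9],
}
print(exact_knn([1.0, 0.0, 0.0], store, k=2))  # ['doc_a', 'doc_b']
```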

ANNS algorithms are designed to find vectors that are approximately the closest neighbors, trading a small amount of recall (the fraction of true nearest neighbors that the search actually returns) for large gains in query speed. They achieve this by structuring the vector space so that large regions can be pruned from the search quickly. Common ANNS techniques include:

- Tree-based methods (e.g., KD-Trees, Ball Trees): These partition the data space hierarchically, allowing efficient traversal to narrow down search regions. However, their performance degrades in very high dimensions (the "curse of dimensionality").

- Locality Sensitive Hashing (LSH): LSH hashes similar items into the same "buckets" with high probability, making it faster to find neighbors by only comparing items within the same bucket.

- Graph-based methods (e.g., HNSW, Hierarchical Navigable Small World): These are among the most popular and performant. HNSW builds a multi-layer graph: the bottom layer contains every vector with dense local connections for fine-grained search, while higher layers contain progressively fewer vectors with longer-range links for quick traversal to the general vicinity of the target. Greedy searches through these graphs quickly converge on approximate nearest neighbors.

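- Quantization methods (e.g., Product Quantization): These techniques compress vectors into compact codes (Product Quantization splits each vector into subvectors and stores, for each one, the ID of its nearest centroid), making vectors smaller and faster to compare at some cost in accuracy. They are often combined with partitioning schemes, as in FAISS's IVF_PQ index, which pairs an inverted-file (IVF) clustering step with product quantization.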

Vector databases implement one or more of these ANNS algorithms to build an index over the stored embeddings. When a query comes in, the database uses this index to quickly locate the k most similar vectors, typically answering in milliseconds even over very large collections where a brute-force scan would be far slower.
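As an illustration of the hashing idea, here is a minimal random-hyperplane LSH sketch, one standard LSH family for cosine similarity. Each random hyperplane contributes one bit, and vectors pointing in similar directions tend to land in the same bucket. The class and function names are hypothetical, and production systems typically use several hash tables (and re-rank candidates exactly) to keep recall acceptable.

```python
import random

def random_hyperplanes(dim, n_bits, seed=0):
    """n_bits random Gaussian normal vectors, one per hash bit."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_signature(vec, planes):
    """Bit i records which side of hyperplane i the vector lies on."""
    return tuple(int(sum(p * v for p, v in zip(plane, vec)) >= 0.0) for plane in planes)

class HyperplaneLSH:
    """Single-table LSH for cosine similarity (hypothetical helper class)."""

    def __init__(self, dim, n_bits=8, seed=0):
        self.planes = random_hyperplanes(dim, n_bits, seed)
        self.buckets = {}  # signature -> list of vector ids

    def add(self, vid, vec):
        self.buckets.setdefault(lsh_signature(vec, self.planes), []).append(vid)

    def candidates(self, query):
        """Only ids in the query's bucket are returned for exact re-ranking."""
        return list(self.buckets.get(lsh_signature(query, self.planes), []))

index = HyperplaneLSH(dim=3)
index.add("a", [1.0, 2.0, 3.0])
index.add("b", [-1.0, -2.0, -3.0])
print(index.candidates([2.0, 4.0, 6.0]))  # ['a']
```

Because scaling a vector by a positive factor never changes which side of a hyperplane it lies on, `[2.0, 4.0, 6.0]` always hashes with `[1.0, 2.0, 3.0]`, while the opposite vector flips every bit and lands in a different bucket.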

The Query Process

Let's walk through a typical query process for an LLM application using a vector database:

- User Input: A user asks a question, e.g., "What are the benefits of sustainable energy?"

- Embedding Generation: The LLM application converts this natural language query into a high-dimensional vector embedding using an embedding model (e.g., text-embedding-ada-002). This query vector captures the semantic meaning of the question.

```text
User Query: "What are the benefits of sustainable energy?"
Query Embedding: [0.15, -0.03, 0.88, ..., 0.42]
```

- Vector Database Query: The application sends this query embedding to the vector database.

- Similarity Search: The vector database, leveraging its ANNS index, efficiently searches its stored embeddings (representing documents, articles, product descriptions, etc.) to find the k vectors most similar to the query embedding.

```text
Stored Embeddings (simplified):
Doc A: "Advantages of solar power..." -> [0.14, -0.02, 0.87, ..., 0.43] (High similarity)
Doc B: "Wind energy's economic impact..." -> [0.16, -0.04, 0.89, ..., 0.41] (High similarity)
Doc C: "History of the internal combustion engine..." -> [-0.71, 0.22, 0.05, ..., -0.11] (Low similarity)
```

- Retrieval of Metadata/Content: Along with the similar vectors, the vector database typically returns associated metadata or identifiers. The application then uses these identifiers to fetch the original text or content, either from a payload stored alongside each vector (which many vector databases support) or from a separate data store such as an S3 bucket or a traditional relational database.

```text
Retrieved Content:
- Title: "The Economic and Environmental Benefits of Renewable Energy"
  Content: "Sustainable energy sources like solar, wind, and hydropower offer numerous advantages..."
- Title: "Why Invest in Green Energy?"
  Content: "Investing in green energy not only helps the planet but also provides long-term financial stability..."
```

Contextual Augmentation (RAG): The retrieved relevant content is then fed into the LLM as additional context alongside the original user query.

- LLM Generation: The LLM processes the original query and the provided context, generating a more informed, accurate, and up-to-date answer. This prevents the LLM from relying solely on its potentially outdated training data.
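The walkthrough above can be condensed into a short, runnable sketch. Everything here is a stand-in chosen for illustration: `embed` is a hypothetical hashed bag-of-words function rather than a trained embedding model (so, unlike real embeddings, it captures only word overlap), the "vector database" is a Python dictionary searched by brute force, and the final LLM call is omitted; the function simply returns the augmented prompt that would be sent.

```python
import math
import re
import zlib

DIM = 256  # hypothetical embedding size for this toy example

def embed(text):
    """Hypothetical stand-in for an embedding model: a hashed bag of words.
    It only captures word overlap; a real system would call a trained model."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z]+", text.lower()):
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += 1.0
    length = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / length for x in vec]  # unit length, so dot product == cosine

# Toy "vector database": id -> (embedding, original content stored as payload).
documents = {
    "doc_a": "Advantages of solar power for homes",
    "doc_b": "Wind energy and its economic impact",
    "doc_c": "History of the internal combustion engine",
}
index = {doc_id: (embed(text), text) for doc_id, text in documents.items()}

def search(query_vec, k=2):
    """Brute-force similarity search returning the ids of the k best matches."""
    scored = [(sum(q * v for q, v in zip(query_vec, vec)), doc_id)
              for doc_id, (vec, _) in index.items()]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)[:k]]

def build_rag_prompt(question, k=2):
    hit_ids = search(embed(question), k)                      # embedding generation + similarity search
    context = "\n".join(f"- {index[h][1]}" for h in hit_ids)  # retrieval of content
    # Contextual augmentation; the LLM generation step would send this prompt to a model.
    return f"Answer using only the context below.\n{context}\n\nQuestion: {question}"

print(build_rag_prompt("What are the advantages of solar power?"))
```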

This sophisticated interplay between embedding models, ANNS algorithms, and the vector database itself is what empowers LLMs to move beyond their static training data and interact dynamically with real-world, current, or proprietary information, leading to significantly enhanced capabilities.

Key Components and Essential Features of Modern Vector Databases

Modern vector databases are highly sophisticated systems designed to handle the demanding requirements of AI applications. Beyond the core mechanics of storing and querying vectors, they incorporate a suite of features that are crucial for scalability, reliability, and usability.

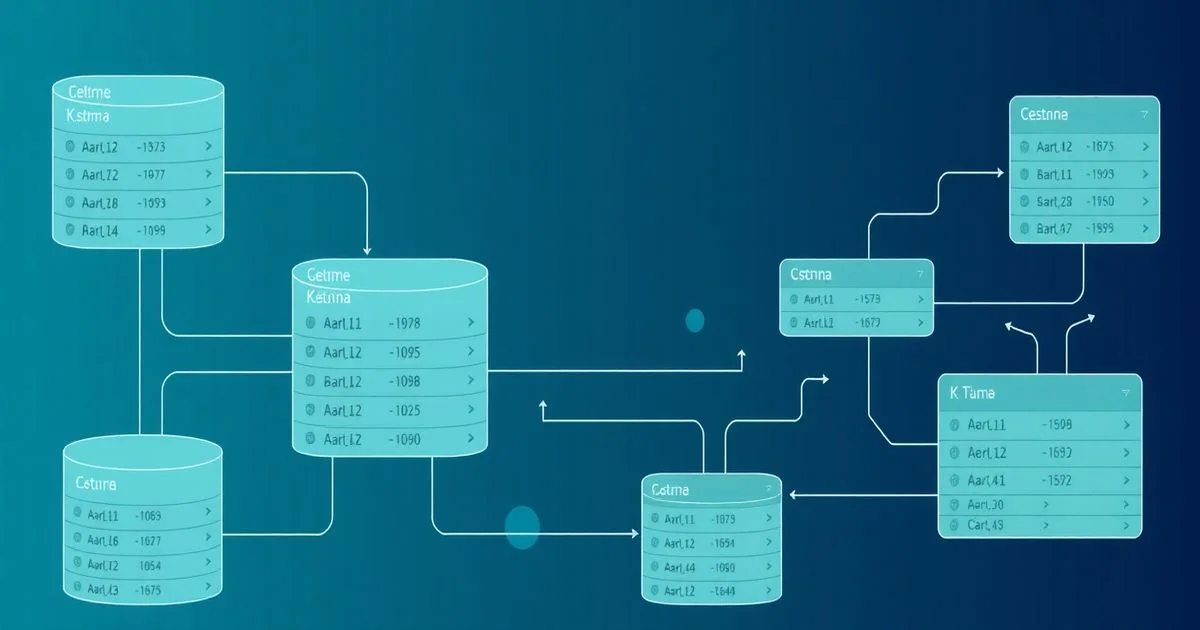

Scalability and Distributed Architecture

Vector databases are built to handle massive datasets, often encompassing billions of high-dimensional vectors. This necessitates a distributed architecture, in which data and computational load are spread across multiple nodes or servers. Milvus, Pinecone, and Weaviate are examples of vector databases built with cloud-native, distributed architectures, capable of scaling to enterprise-level demands. Key aspects include:

- Sharding: Dividing the vector index into smaller, manageable chunks (shards) that can be distributed across different nodes. Each shard can independently process queries, increasing parallelization.

- Replication: Creating multiple copies of each shard to ensure high availability and fault tolerance. If one node fails, another replica can take over, preventing service interruption.

- Dynamic Scaling: The ability to add or remove nodes seamlessly to adjust to varying workloads. This elastic scaling ensures that performance remains consistent as data volume or query traffic grows. Many cloud-native vector databases offer auto-scaling capabilities, automatically adjusting resources based on demand.
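To make sharding and scatter-gather querying concrete, here is a toy, single-process sketch. `ShardedIndex` is a hypothetical class: ids are routed to shards by a CRC32 hash, each shard computes its local top k, and a coordinator merges the partial results. Replication and real networking are omitted, and scores are plain dot products (assuming normalized vectors).

```python
import heapq
import zlib

class ShardedIndex:
    """Toy sharded store: vectors are routed to shards by a hash of their id,
    and queries fan out to every shard ("scatter") before merging ("gather")."""

    def __init__(self, n_shards):
        self.shards = [dict() for _ in range(n_shards)]

    def _shard_for(self, vid):
        # Deterministic routing (Python's built-in hash() of str is salted per process).
        return self.shards[zlib.crc32(vid.encode("utf-8")) % len(self.shards)]

    def upsert(self, vid, vec):
        self._shard_for(vid)[vid] = vec

    def query(self, q, k):
        partial = []
        for shard in self.shards:  # in a real cluster, shards are queried in parallel on separate nodes
            scored = ((sum(a * b for a, b in zip(q, v)), vid) for vid, v in shard.items())
            partial.extend(heapq.nlargest(k, scored))  # each shard returns its local top k
        return heapq.nlargest(k, partial)              # global top k from the merged candidates

idx = ShardedIndex(n_shards=3)
for i in range(10):
    idx.upsert(f"v{i}", [float(i), 1.0])
print([vid for _, vid in idx.query([1.0, 0.0], k=3)])  # ['v9', 'v8', 'v7']
```

Merging per-shard top-k lists is sufficient because any vector in the global top k must also be in the top k of its own shard.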

Real-time Updates and Data Consistency

In many LLM applications, the underlying knowledge base is not static. New documents, articles, or user interactions are constantly being generated, requiring the vector database to be updated in near real-time. This presents challenges for maintaining data consistency and ensuring that the vector index remains accurate. Key aspects include:

- Append-only vs. Mutable Indexes: Some vector databases are optimized for append-only workloads, where new vectors are added but existing ones are rarely modified or deleted. Others support full CRUD (Create, Read, Update, Delete) operations, allowing for modification of existing vectors and their associated metadata.

- Eventual Consistency: In distributed systems, achieving strong consistency (where all nodes always see the most up-to-date data) can be complex and impact performance. Many vector databases opt for eventual consistency, where updates propagate through the system, and all nodes eventually become consistent. For LLM RAG applications, a slight delay in consistency is often acceptable.

- Batch vs. Streaming Updates: Vector databases often support both batch updates (for large, periodic data refreshes) and streaming updates (for continuous, low-latency ingestion of new data), allowing developers to choose the most appropriate method for their specific use case.

Filtering and Metadata Management

While vector similarity search is powerful, it's often insufficient on its own. Users frequently need to narrow down search results based on specific criteria or attributes. This is where metadata management and filtering capabilities become essential.

- Associated Metadata: Vector databases allow developers to store arbitrary metadata alongside each vector. This metadata can include document IDs, timestamps, author names, categories, access permissions, or any other relevant attribute.

Vector for Article A: [embedding_vector]
Metadata for Article A: {
  "id": "article_123",
  "title": "Quantum Computing Basics",
  "author": "Dr. Smith",
  "category": "Science",
  "published_date": "2023-10-26"
}

- Pre-filtering and Post-filtering:

  - Pre-filtering: The database first filters the dataset on metadata criteria (e.g., category = 'Science' AND published_date > '2023-01-01') and then performs the vector similarity search only on the matching subset. Every returned result satisfies the filter, which makes this approach a good fit when the filter is selective.
  - Post-filtering: The database first performs a vector similarity search over the entire dataset, retrieves the top k similar vectors, and then discards those that fail the metadata filter. This is cheap when the filter is permissive, but a highly selective filter can eliminate most or all of the top k, returning fewer than k results.

- Hybrid Search: Combining vector similarity search with traditional keyword or metadata filtering is crucial for robust LLM applications. For example, finding articles about "AI ethics" (semantic search) but only from "trusted sources" (metadata filter).
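The difference between the two filtering strategies shows up even on four vectors. In this hypothetical sketch (dot-product scoring, made-up items and categories), post-filtering keeps the top 2 results overall and then discards both because neither is in the "Science" category, returning nothing, while pre-filtering finds both Science items.

```python
import heapq

def score(q, v):
    """Dot-product similarity score."""
    return sum(a * b for a, b in zip(q, v))

def pre_filter_search(q, items, predicate, k):
    """Apply the metadata filter first, then rank only the matching subset."""
    candidates = [it for it in items if predicate(it["meta"])]
    return [it["id"] for it in heapq.nlargest(k, candidates, key=lambda it: score(q, it["vec"]))]

def post_filter_search(q, items, predicate, k):
    """Rank everything, keep the top k, then drop results failing the filter."""
    top = heapq.nlargest(k, items, key=lambda it: score(q, it["vec"]))
    return [it["id"] for it in top if predicate(it["meta"])]

items = [  # hypothetical vectors with metadata
    {"id": "a1", "vec": [1.0, 0.0], "meta": {"category": "Tech"}},
    {"id": "a2", "vec": [0.9, 0.1], "meta": {"category": "Tech"}},
    {"id": "a3", "vec": [0.5, 0.5], "meta": {"category": "Science"}},
    {"id": "a4", "vec": [0.1, 0.9], "meta": {"category": "Science"}},
]

def is_science(meta):
    return meta["category"] == "Science"

query = [1.0, 0.0]
print(pre_filter_search(query, items, is_science, k=2))   # ['a3', 'a4']
print(post_filter_search(query, items, is_science, k=2))  # []
```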

Hybrid Search Capabilities

The most advanced vector databases offer robust hybrid search capabilities, integrating semantic vector search with traditional keyword-based search and metadata filtering. This allows for a more nuanced and powerful retrieval experience. For instance, a user might search for "financial advice for small businesses in 2023" where "financial advice" is best handled by semantic similarity, "small businesses" can be a keyword, and "2023" is a metadata filter. Effective hybrid search can significantly improve the relevance and precision of retrieved information for LLMs.
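One common way to merge a semantic ranking with a keyword ranking is Reciprocal Rank Fusion (RRF), in which each ranked list contributes 1/(k + rank) to a document's score; k = 60 is a widely used default. The sketch assumes the two ranked lists and the per-document `year` metadata already exist (both hypothetical), and it is not necessarily how any particular vector database implements hybrid search.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of ids; each list adds 1 / (k + rank) per id."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

vector_hits = ["d1", "d2", "d3"]   # hypothetical semantic-search ranking
keyword_hits = ["d3", "d1", "d4"]  # hypothetical keyword-search ranking
year = {"d1": 2023, "d2": 2022, "d3": 2023, "d4": 2023}  # hypothetical metadata

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)                                  # ['d1', 'd3', 'd2', 'd4']
print([d for d in fused if year[d] == 2023])  # ['d1', 'd3', 'd4']
```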

Security and Access Control

Given that vector databases often store sensitive or proprietary information (e.g., embeddings of confidential documents), robust security features are non-negotiable.

- Authentication and Authorization: Secure mechanisms to verify user identity and control what actions they can perform (e.g., read, write, delete vectors).

- Data Encryption: Encryption of data at rest (stored on disk) and in transit (over the network) to protect against unauthorized access.

- Role-Based Access Control (RBAC): Assigning permissions based on user roles (e.g., "admin," "developer," "user") to streamline access management.

- VPC Peering/Private Endpoints: For cloud-based services, the ability to connect securely via private networks, bypassing the public internet, adds an extra layer of security.

These essential features collectively transform a basic vector store into a production-ready system capable of powering complex and critical LLM applications in various industries.

Real-World Applications: Understanding Vector Databases for LLM Applications

The combination of LLMs and vector databases unlocks a new generation of intelligent applications across virtually every industry. Their synergy creates systems that are more knowledgeable, personalized, and efficient than ever before.

Semantic Search and Retrieval Augmented Generation (RAG)

This is perhaps the most prominent and impactful application. As discussed earlier, RAG allows LLMs to retrieve relevant, up-to-date, and proprietary information from external knowledge bases. This capability is vital for:

- Enterprise Search: Employees can query internal documentation, codebases, or customer support tickets using natural language, receiving precise answers derived from relevant internal data, rather than generic web search results.

- Customer Support Chatbots: LLM-powered chatbots can provide accurate answers to customer queries by retrieving information from product manuals, FAQs, or past support interactions stored in a vector database, significantly reducing resolution times and improving customer satisfaction. Companies like Zendesk and Intercom are actively exploring or integrating such capabilities.

- Knowledge Management: Researchers, legal professionals, and analysts can quickly find highly specific information within vast libraries of documents, legal precedents, or scientific papers, enhancing their productivity and decision-making.

Personalization and Recommendation Systems

Vector databases are a game-changer for building highly personalized experiences. By embedding user preferences, item characteristics, and past interactions into vectors, systems can recommend content, products, or services that are semantically similar to a user's interests. Examples include:

- E-commerce Product Recommendations: When a user views a product, its embedding can be used to query a vector database of all products, finding similar items in terms of style, function, or target audience. For example, a user viewing a specific running shoe might be recommended similar shoes or complementary running apparel.

- Content Platforms (Netflix, Spotify): By embedding user watch/listen history and content attributes, vector databases can power sophisticated recommendation engines, suggesting movies, music, or news articles that align with a user's nuanced tastes. This moves beyond genre matching to deep semantic similarity.

- Personalized Feeds: Social media or news platforms can use vector databases to curate personalized feeds, prioritizing content that is semantically similar to topics a user has engaged with, leading to higher user engagement.

Anomaly Detection and Fraud Prevention

The ability to identify outliers in a high-dimensional space makes vector databases excellent tools for anomaly detection, a critical application within the broader domain of machine learning. Examples include:

- Financial Fraud: Transaction data (amount, location, merchant, time) can be embedded into vectors. A vector database can then quickly flag transactions that are unusually distant from a user's typical spending patterns or from legitimate transaction clusters, indicating potential fraud.

- Network Security: Network traffic patterns or system logs can be vectorized. Deviations from normal behavior, represented by vectors that are far from the cluster of normal operation, can signal intrusions or attacks.

- Industrial IoT Monitoring: Sensor data from machinery can be embedded. Anomalous readings, indicating potential equipment failure or malfunction, can be detected by identifying vectors that are outliers compared to the historical normal operating range.
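A basic version of distance-based outlier detection scores each new vector by its mean distance to its k nearest known-normal vectors. In this sketch, the 2-D reference points and the threshold of 1.0 are hypothetical; in practice the neighbors would come from the vector database's own k-NN query rather than a full scan, and the threshold would be tuned on historical data.

```python
import math

def knn_anomaly_score(x, reference, k=3):
    """Mean Euclidean distance from x to its k nearest reference vectors.
    Larger scores mean x sits farther from the known-normal data."""
    dists = sorted(math.dist(x, r) for r in reference)
    return sum(dists[:k]) / k

# Hypothetical embeddings of normal behaviour, clustered around (1, 1).
normal = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.2], [1.2, 1.0]]
THRESHOLD = 1.0  # hypothetical cut-off

def is_anomalous(x):
    return knn_anomaly_score(x, normal) > THRESHOLD

print(is_anomalous([1.05, 1.0]))  # False
print(is_anomalous([5.0, 5.0]))   # True
```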

Content Moderation and Summarization

Vector databases can significantly enhance LLM capabilities in handling large volumes of content. This includes:

- Automated Content Moderation: By embedding user-generated content (comments, posts, images), vector databases can quickly identify content that is semantically similar to known examples of hate speech, spam, or inappropriate material, flagging it for review or immediate removal. This allows LLMs to focus on more nuanced moderation tasks.

- Document Clustering and Summarization: Large collections of documents can be vectorized and then clustered based on semantic similarity using vector database queries. This helps in identifying recurring themes or grouping related content, which can then be fed into an LLM for more effective summarization of distinct topics.

Code Search and Generation

For developers and engineering teams, vector databases offer powerful tools for managing and generating code. Examples include:

- Semantic Code Search: Instead of keyword-based search that might miss relevant code snippets due to varying variable names or syntactic structures, developers can search for code based on its functionality or intent. For example, searching for "code to connect to a PostgreSQL database and fetch data" can return relevant code snippets even if they don't explicitly use the word "PostgreSQL."

- Code Suggestion and Completion: Integrated Development Environments (IDEs) can leverage vector databases to suggest relevant code snippets or complete functions based on the current context and the developer's intent, significantly speeding up development.

- Identifying Redundant or Similar Code: Vectorizing code functions or modules allows for easy identification of duplicates or highly similar code, aiding in refactoring and maintaining a cleaner codebase.

These diverse applications underscore the transformative impact of vector databases on LLM applications. They are not merely storage solutions but active components that extend the intelligence, accuracy, and utility of AI systems across a vast spectrum of real-world scenarios.

Advantages and Challenges of Implementing Vector Databases

While vector databases offer profound advantages for LLM applications, their implementation and management come with their own set of considerations and challenges. A balanced perspective is crucial for successful deployment.

Performance and Precision Gains

The primary advantage of vector databases lies in their ability to deliver fast, semantically relevant similarity search, which is fundamental to LLM augmentation. These benefits include:

- Semantic Understanding: By operating on embeddings, vector databases enable true semantic search, moving beyond brittle keyword matching. This leads to significantly more relevant search results for natural language queries, directly translating to higher-quality context for LLMs.

- Speed at Scale: ANNS algorithms allow for blazing-fast retrieval of nearest neighbors, even across billions of data points. This speed is critical for real-time LLM interactions, such as live chatbots or recommendation systems, where latency directly impacts user experience.

- Enhanced LLM Accuracy and Reduced Hallucinations: By supplying LLMs with retrieved, factual context, vector databases can markedly improve the accuracy of generated responses and reduce the common problem of LLM hallucinations (generating false information). This makes LLMs more reliable for critical business applications.

- Multi-Modal Capability: Vector databases can store embeddings from various data types (text, image, audio), enabling unified multi-modal search and understanding. This allows LLMs to interact with and generate content based on a richer, more diverse dataset.

Cost and Resource Management

Implementing and operating vector databases, especially at scale, can incur significant costs and require careful resource management. Points to consider:

- Compute Resources: Generating embeddings is compute-intensive and, at scale, typically benefits from GPUs or TPUs. Storing and querying high-dimensional vectors also demands substantial CPU, memory, and storage, particularly for large indexes.

- Storage Costs: High-dimensional vectors, especially for large datasets, can consume considerable storage space. A billion vectors with 1536 dimensions, for instance, translates to terabytes of data.

- Indexing Overhead: Building and maintaining ANNS indexes requires computational resources and can take time, especially for initial population or large updates. The choice of ANNS algorithm can influence this trade-off between index size, build time, and query speed.

- Managed Services vs. Self-Hosting: While managed services (like Pinecone, Weaviate Cloud, Zilliz Cloud) simplify deployment and scaling, they come with subscription costs. Self-hosting an open-source database (e.g., Milvus, Qdrant, Chroma) or building on a similarity-search library such as Faiss offers more control but demands significant operational expertise for setup, maintenance, and scaling.
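The storage estimate above is simple arithmetic: vectors × dimensions × bytes per value. This helper (a hypothetical name) counts raw vector bytes only, excluding index structures, metadata, and replicas, all of which add to the total.

```python
def raw_vector_storage_bytes(n_vectors, dims, bytes_per_value=4):
    """Raw bytes for the vectors alone (float32 = 4 bytes, int8 = 1 byte per value)."""
    return n_vectors * dims * bytes_per_value

one_billion = 1_000_000_000
print(f"{raw_vector_storage_bytes(one_billion, 1536) / 1e12:.3f} TB")                     # 6.144 TB
print(f"{raw_vector_storage_bytes(one_billion, 1536, bytes_per_value=1) / 1e12:.3f} TB")  # 1.536 TB
```

This is one reason quantization is attractive at scale: storing each dimension in fewer bytes shrinks the raw footprint proportionally.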

Data Synchronization and Vector Staleness

Keeping the vector database in sync with the source data and ensuring the freshness of embeddings are non-trivial challenges. Key aspects include:

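- Embedding Model Updates: Embedding models are constantly improving. Because vectors produced by different models live in different, incompatible vector spaces, switching to a new model means re-embedding the entire dataset so that stored vectors and query vectors come from the same model, which can be a massive undertaking requiring significant compute and time.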

- Source Data Changes: As the underlying source data (documents, products, user profiles) changes, their corresponding embeddings in the vector database must be updated. Establishing efficient data pipelines for continuous ingestion and re-embedding is critical to prevent "vector staleness" – where embeddings no longer accurately reflect the current state of the data. This often involves change data capture (CDC) mechanisms and robust ETL processes.

- Consistency Trade-offs: In distributed systems, ensuring perfect real-time consistency between the source data and the vector index can be complex, often requiring trade-offs between consistency, availability, and performance.
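One simple way to detect stale vectors, assuming you can store a fingerprint alongside each vector, is to hash each source document together with the embedding model identifier. Documents whose current fingerprint differs from the stored one need re-embedding, and stored ids with no source document need deleting. The model name and helper functions below are hypothetical.

```python
import hashlib

EMBEDDING_MODEL = "embedding-model-v2"  # hypothetical model identifier

def fingerprint(text, model=EMBEDDING_MODEL):
    """Hash of the source text plus the model that embedded it."""
    return hashlib.sha256(f"{model}\n{text}".encode("utf-8")).hexdigest()

def plan_sync(source_docs, stored_fingerprints, model=EMBEDDING_MODEL):
    """Return (ids to (re)embed, ids to delete) to bring the index in sync."""
    to_embed = sorted(doc_id for doc_id, text in source_docs.items()
                      if stored_fingerprints.get(doc_id) != fingerprint(text, model))
    to_delete = sorted(set(stored_fingerprints) - set(source_docs))
    return to_embed, to_delete

# Fingerprints recorded when the vectors were last written (hypothetical data).
stored = {"a": fingerprint("old text"), "b": fingerprint("unchanged"), "c": fingerprint("removed doc")}
source = {"a": "new text", "b": "unchanged", "d": "brand new"}

print(plan_sync(source, stored))                              # (['a', 'd'], ['c'])
print(plan_sync(source, stored, model="embedding-model-v3"))  # (['a', 'b', 'd'], ['c'])
```

Including the model identifier in the hash means a model upgrade automatically marks every document as stale, matching the re-embedding requirement described above.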

Integration Complexity

Integrating a vector database into an existing tech stack and an LLM application workflow adds layers of complexity. This involves:

- Data Pipelines: Designing and implementing robust data pipelines to extract, transform, embed, and load data into the vector database is a significant engineering effort. This often involves orchestrators like Apache Airflow or Prefect.

- Orchestration with LLMs: Seamlessly orchestrating the query -> embed -> vector search -> retrieve -> LLM prompt -> generate workflow requires careful architectural design and often custom code. Frameworks like LangChain and LlamaIndex have emerged to simplify this integration, but understanding their nuances is key.

- Schema Design: Deciding what metadata to store alongside vectors, how to structure it, and how to define filtering criteria requires thoughtful schema design.

- Monitoring and Observability: Tools and processes are needed to monitor the health, performance, and accuracy of the vector database and its associated embedding pipelines.

Despite these challenges, the immense benefits in enhancing LLM capabilities often outweigh the complexities, making vector databases a cornerstone of advanced AI deployments.

The Future of Vector Databases and LLM Applications

The rapid evolution of AI suggests that vector databases, as integral components of LLM applications, will continue to innovate and expand their capabilities. Several key trends are shaping their future trajectory.

Hybrid Architectures and Multi-Modal Embeddings

The future of vector databases will likely see a deeper integration with other database types and an enhanced ability to handle increasingly complex data. Key trends include:

- Convergence with Traditional Databases: We're already seeing traditional relational databases (like PostgreSQL with the pgvector extension) and NoSQL databases (like MongoDB and Redis) integrate vector search capabilities. This convergence lets developers leverage existing infrastructure and avoid managing separate systems, simplifying data management. Dedicated vector databases, however, are likely to keep an edge in performance and features for high-scale, vector-native workloads.

- Beyond Single-Modal Embeddings: While current applications primarily use text embeddings, the frontier lies in multi-modal embeddings that capture relationships across different data types simultaneously. Imagine a single vector representing both an image and its textual description, or a video clip and its spoken dialogue. Vector databases will likely evolve to efficiently store, index, and query these richer, multi-modal embeddings, enabling unified semantic search across diverse content types and allowing LLMs to understand and generate content that spans text, vision, and audio.

- Enhanced Semantic RAG: Future RAG systems will move beyond simple document chunk retrieval. They will likely incorporate more sophisticated graph-based knowledge representations, reasoning capabilities, and dynamic context selection powered by advanced vector search, leading to even more nuanced and accurate LLM responses.
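One way such a system might combine vector search with a graph-based knowledge representation is to use the vector hits as seeds and then pull in their graph neighbours. The sketch below is a hypothetical illustration, not a description of any specific product: it assumes the seed hits come from an earlier vector search and that the `edges` mapping (for example, document links or shared entities) was built elsewhere.

```python
from typing import Dict, List, Set, Tuple

def graph_expand(
    seed_hits: List[Tuple[str, float]],  # (chunk_id, similarity) from a vector search
    edges: Dict[str, List[str]],         # chunk_id -> related chunk_ids (assumed knowledge graph)
    hops: int = 1,
    max_results: int = 5,
) -> List[str]:
    """Start from vector-search hits and add graph neighbours up to `hops` away.
    Seeds keep their similarity order; neighbours are appended in discovery order."""
    ordered = [cid for cid, _ in sorted(seed_hits, key=lambda h: h[1], reverse=True)]
    seen: Set[str] = set(ordered)
    frontier = list(ordered)
    for _ in range(hops):  # breadth-first expansion, one hop per iteration
        next_frontier: List[str] = []
        for cid in frontier:
            for neighbour in edges.get(cid, []):
                if neighbour not in seen:
                    seen.add(neighbour)
                    ordered.append(neighbour)
                    next_frontier.append(neighbour)
        frontier = next_frontier
    return ordered[:max_results]

seed_hits = [("policy-2024", 0.82), ("faq-12", 0.77)]
knowledge_graph = {
    "policy-2024": ["policy-2023", "faq-12"],
    "faq-12": ["glossary-refund"],
    "policy-2023": ["policy-2022"],
}
print(graph_expand(seed_hits, knowledge_graph))
# ['policy-2024', 'faq-12', 'policy-2023', 'glossary-refund']
```

The expansion surfaces related context (an earlier policy version, a glossary entry) that may not be semantically close to the query on its own; `hops` and `max_results` bound how much extra context reaches the prompt.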

Open-Source vs. Managed Services

The debate between open-source and managed service offerings is likely to intensify, with both models evolving to meet diverse needs. This includes:

- Open-Source Maturity: Open-source vector databases (e.g., Milvus, Qdrant, Chroma) are gaining maturity, offering robust features, community support, and the flexibility for self-hosting. They will continue to be attractive for organizations with specific privacy requirements, complex custom integrations, or those aiming to avoid vendor lock-in.

- Managed Services Innovation: Managed vector database providers (e.g., Pinecone, Weaviate Cloud, Zilliz Cloud, Google's Vertex AI Vector Search) will continue to innovate on ease of use, scalability, performance, and seamless integration with cloud ecosystems. They will target enterprises seeking reduced operational overhead, guaranteed SLAs, and advanced features like automatic index tuning, serverless scaling, and built-in security.

- Hybrid Deployments: Many organizations may adopt hybrid strategies, using managed services for specific high-volume or critical applications, while self-hosting open-source solutions for experimental projects or internal tools.

The Rise of Edge AI and Local Vector Stores

As LLMs become more efficient and smaller, and privacy concerns grow, the demand for AI processing closer to the data source will increase. Key aspects include:

- Local Embedding Models: Smaller, specialized embedding models that can run efficiently on edge devices (smartphones, IoT devices) are becoming more common.

- On-Device Vector Stores: Lightweight, embedded vector databases or libraries (like Faiss for local indexing, or even smaller, purpose-built solutions) will enable on-device semantic search. This allows LLM applications to access personalized context or perform local inference without constant cloud round-trips, improving latency, privacy, and offline capabilities. Examples include personalized recommendations on a smartphone or local document search on an enterprise laptop.

- Federated Learning and Privacy: Vector databases might play a role in federated learning architectures, where embeddings are generated and processed locally, and only aggregated, anonymized insights or model updates are sent to the cloud, further enhancing privacy.
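An on-device vector store can be as simple as normalized vectors kept in a local file plus a brute-force inner-product search. Faiss offers the same idea as `IndexFlatIP`, with `faiss.write_index` and `faiss.read_index` for persistence; the sketch below uses only the Python standard library so it runs without Faiss installed. `LocalVectorStore` is a hypothetical class, and the 3-dimensional vectors stand in for the output of an assumed local embedding model.

```python
import heapq
import json
import math
import os
import tempfile
from typing import Dict, List, Tuple

class LocalVectorStore:
    """Minimal on-device store: vectors live in a local JSON file and search never leaves the device."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.vectors: Dict[str, List[float]] = {}
        if os.path.exists(path):  # reload a previously saved index, e.g. after an app restart
            with open(path, "r", encoding="utf-8") as f:
                self.vectors = json.load(f)

    @staticmethod
    def _normalize(vec: List[float]) -> List[float]:
        norm = math.sqrt(sum(v * v for v in vec)) or 1.0
        return [v / norm for v in vec]

    def add(self, item_id: str, vec: List[float]) -> None:
        # store unit vectors so the inner product equals cosine similarity
        self.vectors[item_id] = self._normalize(vec)

    def save(self) -> None:
        with open(self.path, "w", encoding="utf-8") as f:
            json.dump(self.vectors, f)

    def search(self, query: List[float], k: int = 3) -> List[Tuple[str, float]]:
        q = self._normalize(query)
        scored = ((item_id, sum(a * b for a, b in zip(q, v))) for item_id, v in self.vectors.items())
        return heapq.nlargest(k, scored, key=lambda pair: pair[1])

path = os.path.join(tempfile.mkdtemp(), "notes_index.json")
store = LocalVectorStore(path)
store.add("note-travel", [0.9, 0.1, 0.0])
store.add("note-budget", [0.1, 0.9, 0.2])
store.save()

reopened = LocalVectorStore(path)  # works offline: no network call is involved
print(reopened.search([1.0, 0.0, 0.0], k=1))  # top hit is "note-travel"
```

A flat scan is usually fast enough for the thousands of items a personal device holds; larger local collections are where an approximate index such as the ones Faiss provides starts to pay off.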

The synergy between advanced embedding techniques, sophisticated ANNS algorithms, and robust, scalable vector database architectures will continue to drive the capabilities of LLM applications into new and exciting territories. The ongoing development in this field promises an era of increasingly intelligent, adaptive, and human-like AI interactions.

Conclusion: Empowering the Next Generation of AI

The journey through the intricate world of vector databases reveals their importance in shaping the future of artificial intelligence. We've explored their fundamental mechanics, from the transformative power of embeddings to the efficiency of Approximate Nearest Neighbor Search algorithms, and delved into the essential features that make them robust and scalable. Crucially, we've seen that understanding vector databases for LLM applications is not just an academic exercise but a practical necessity for achieving stronger semantic understanding, contextual relevance, and operational efficiency in real-world AI deployments.

From powering highly accurate semantic search and dynamic Retrieval Augmented Generation (RAG) to enabling personalized recommendations, robust fraud detection, and intelligent content moderation, vector databases are the silent engines propelling LLMs beyond their static training data. They equip LLMs with external, up-to-date, and proprietary knowledge, allowing them to answer complex queries with greater precision and reducing the incidence of "hallucinations." As AI continues its rapid ascent, the symbiotic relationship between advanced language models and these specialized databases will only deepen. The evolution towards hybrid architectures, multi-modal embeddings, and decentralized, edge-based vector stores promises an even more intelligent, adaptive, and versatile generation of AI applications. For developers, data scientists, and organizations aiming to harness the full potential of LLMs, a comprehensive grasp of vector database technology is no longer optional; it is essential.

Frequently Asked Questions

Q: What is the main difference between a vector database and a traditional database?

A: A vector database stores high-dimensional numerical vectors and queries them by similarity, which makes it well suited to semantic search. Traditional databases, such as relational or NoSQL stores, hold scalar values or text and are optimized for exact matches or structured queries. Because embeddings place conceptually related items near each other, a vector database can retrieve related content even when no keywords match exactly.
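A toy comparison makes the difference concrete. The vectors below are hand-picked, hypothetical 3-dimensional stand-ins for model-generated embeddings, chosen so that "car", "automobile", and a "vehicle" query point in similar directions. An exact-match lookup finds nothing for "vehicle", while a cosine-similarity ranking returns the related entries.

```python
import math
from typing import Dict, List

# Hand-picked toy vectors for illustration only; real embeddings come from a model
# and typically have hundreds or thousands of dimensions.
docs: Dict[str, List[float]] = {
    "car": [0.90, 0.10, 0.05],
    "automobile": [0.88, 0.12, 0.07],
    "banana": [0.05, 0.10, 0.95],
}

def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def exact_lookup(term: str) -> List[str]:
    """Traditional-style lookup: only identical keys match."""
    return [key for key in docs if key == term]

def nearest(query_vec: List[float], k: int = 2) -> List[str]:
    """Vector-style lookup: rank every stored item by cosine similarity."""
    return sorted(docs, key=lambda key: cosine(query_vec, docs[key]), reverse=True)[:k]

query_vec = [0.87, 0.15, 0.06]  # pretend embedding of the query "vehicle"
print(exact_lookup("vehicle"))  # []
print(nearest(query_vec))       # ['automobile', 'car']
```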

Q: What is RAG and why is it important for LLMs?

A: RAG (Retrieval Augmented Generation) combines an LLM's generative power with an external knowledge base, typically accessed through a vector database. It allows LLMs to retrieve factual, up-to-date information that serves as context for more accurate answers, helping reduce hallucinations. This grounds LLM responses in retrieved source data rather than in the model's training data alone.

Q: What are embeddings and how are they created?

A: Embeddings are numerical representations of data (text, images, etc.) in a high-dimensional space, capturing semantic meaning. They are created by specialized machine learning models, typically neural networks, trained to position semantically similar items close to each other in this vector space. These models translate complex data into a form that vector databases can index and search.