Optimizing Database Query Performance for Beginners: Master the Basics

The speed of an application often hinges on how quickly its database can retrieve and process information. For anyone getting started with database management or web development, understanding the fundamentals of query optimization is a necessity, not just an advantage. This guide covers the basics: the core concepts and practical strategies for turning slow queries into fast ones, so that your applications stay responsive and you have a solid foundation for further work in database optimization.

- What Is It? The Crucial Role of Database Performance

- Understanding Query Execution: The Database Engine's Workflow

- Fundamental Strategies for Optimizing Database Query Performance for Beginners

- Advanced Techniques and Best Practices for Optimal Query Performance

- Real-World Impact and Case Studies

- Pitfalls to Avoid and Common Misconceptions

- The Future of Database Query Optimization

- Conclusion

- Frequently Asked Questions

- Further Reading & Resources

What Is It? The Crucial Role of Database Performance

At its core, database performance refers to how efficiently a database system can handle various operations, primarily data retrieval (queries) and data modification (inserts, updates, deletes). When we talk about optimizing this performance, we're aiming to reduce the time it takes for a database to execute a query and return results, while also maximizing its throughput—the number of transactions it can process per unit of time. This efficiency directly impacts user experience, application responsiveness, and operational costs.

Imagine an e-commerce website where a user searches for products. If the database query for this search takes several seconds, the user is likely to become frustrated and abandon the site. Conversely, a query that returns results in milliseconds provides a seamless and satisfying experience. This scenario highlights the real-world implications of poor versus optimized database performance. Slow queries can lead to:

- Poor User Experience: Long loading times, timeouts, and unresponsive applications.

- Reduced Productivity: Employees waiting for reports or data to load.

- Increased Infrastructure Costs: Over-provisioning hardware to compensate for inefficient queries, rather than fixing the queries themselves.

- Scalability Issues: Difficulty handling increased user load or data volumes.

Understanding the "what" of database performance is the first step towards addressing the "how." Every millisecond counts, and the cumulative effect of many small optimizations can lead to significant gains. Studies published by companies such as Google and Amazon have reported that even small delays (e.g., 100-200ms) can hurt user engagement and conversion rates. In one widely cited example, Google reported that an extra 500ms in serving search results led to a roughly 20% drop in traffic.

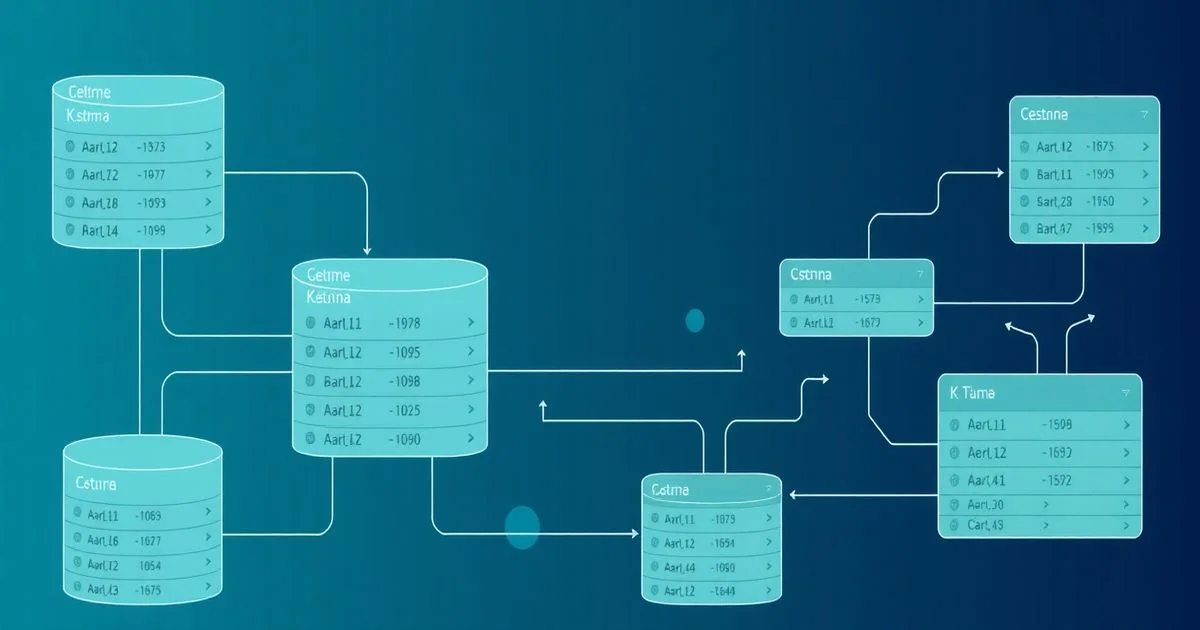

Understanding Query Execution: The Database Engine's Workflow

Before we can optimize, it's essential to understand how a database engine processes a query. Think of a database query as an instruction given to a highly efficient, but often literal, librarian. The librarian (database engine) needs to understand your request, figure out the best way to find the books (data), and then present them to you. This process typically involves several key stages:

- Parsing: The database engine first receives your SQL query (e.g., SELECT * FROM Users WHERE country = 'USA';). It then parses this query, much like a compiler parses code. It checks for syntax errors, verifies that the tables and columns mentioned exist, and ensures the query is semantically correct. If there are any grammatical mistakes in your SQL, this is where they're caught.

- Optimization: This is arguably the most critical stage for performance. The query optimizer, a sophisticated component of the database engine, takes the parsed query and generates multiple possible execution plans. Each plan represents a different strategy for fetching the requested data. For example, should it scan the entire Users table? Or use an index on the country column? Or perhaps join Users with another table first? The optimizer evaluates these plans based on various factors, including:

  - Table statistics: Information about the data distribution within tables and indexes (e.g., how many unique values are in the country column, how many rows are in the Users table).

  - Available indexes: Which indexes exist and how they might speed up data access.

  - Data volume: The estimated number of rows that will be processed.

  The optimizer's goal is to select the plan with the lowest estimated cost (typically measured in terms of I/O operations and CPU time).

- Execution: Once the optimizer selects the "best" plan, the query executor takes over. It executes the plan, performing the actual data retrieval from disk or memory, filtering rows, performing joins, and sorting results as specified in the query. This stage involves interacting with the storage engine to fetch the raw data.

Analogy:

Imagine you've asked a librarian to "find all books written by authors from France."

- Parsing: The librarian understands "books," "authors," "France." They verify these categories exist in their system.

- Optimization: The librarian considers various approaches:

  - Plan A: Go through every single book in the library, check its author, then check the author's nationality. (Full table scan)

  - Plan B: Go to the "Author Index," find all authors from France, then look up their books. (Using an index)

  - Plan C: If there's a special section for "French Authors," go straight there.

  The librarian quickly estimates which plan will be fastest based on their knowledge of the library's layout and indexes.

- Execution: The librarian then physically goes to the shelves, retrieves the books according to the chosen plan, and brings them to you.

Understanding this workflow demystifies why certain query changes or database structures (like indexes) have such a profound impact on performance. It's all about guiding the optimizer to choose the most efficient path.
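To make the workflow concrete, the sketch below asks a database for its chosen plan before and after an index exists. It uses Python's built-in sqlite3 module as a stand-in engine; the table contents and the index name idx_users_country are made up for illustration, and other databases word their plans differently.

```python
import sqlite3

# In-memory SQLite database with a small, made-up Users table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Users (id INTEGER PRIMARY KEY, name TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO Users (name, country) VALUES (?, ?)",
    [(f"user{i}", "USA" if i % 10 == 0 else "FR") for i in range(1000)],
)

def plan(sql):
    """Return the human-readable steps of SQLite's EXPLAIN QUERY PLAN."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]

query = "SELECT * FROM Users WHERE country = 'USA'"

before = plan(query)  # no index yet: the only option is to scan the table
conn.execute("CREATE INDEX idx_users_country ON Users (country)")
after = plan(query)   # the optimizer can now choose the index

print("before:", before)
print("after: ", after)
```

The first plan reports a scan of the whole table, while the second reports a search through the new index: the same query text, but a different path chosen by the optimizer.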

Fundamental Strategies for Optimizing Database Query Performance for Beginners

Achieving optimal database performance starts with mastering several fundamental strategies. These aren't complex hacks but rather sound principles that, when applied consistently, significantly enhance query speed and overall database health. This section will focus on the most impactful areas for beginners, forming a solid groundwork for further exploration.

Indexing: Your Database's Speed Lanes

Indexes are perhaps the most powerful tool in a database administrator's or developer's arsenal for improving query performance. Think of a database index like the index in the back of a textbook. Instead of reading the entire book to find every mention of "database," you go to the index, find "database," and it points you directly to the relevant page numbers. Similarly, a database index allows the database engine to locate data rows without having to scan the entire table.

How Indexes Work:

When you create an index on one or more columns of a table, the database system builds a separate data structure (most commonly a B-tree) that stores a sorted list of the values from the indexed columns, along with pointers to the actual data rows in the table. When a query targets an indexed column in its WHERE clause, JOIN condition, or ORDER BY clause, the database can use this sorted index to quickly find the required rows, much faster than a full table scan.
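The idea of a sorted structure with pointers back to the rows can be sketched in a few lines of Python. This is only a simplified model: a real B-tree is a balanced tree of pages rather than one sorted list, and the rows and email addresses here are invented for the example.

```python
import bisect

# A tiny "table": the list position plays the role of the row pointer.
rows = [
    ("dana@example.com", "Dana"),
    ("al@example.com", "Al"),
    ("cy@example.com", "Cy"),
    ("bo@example.com", "Bo"),
]

# The "index": (indexed value, row position) pairs kept in sorted order.
index = sorted((email, pos) for pos, (email, _name) in enumerate(rows))

def full_scan(email):
    """Check every row, like a full table scan."""
    return [row for row in rows if row[0] == email]

def index_lookup(email):
    """Binary-search the sorted index, then follow pointers to the rows."""
    i = bisect.bisect_left(index, (email, -1))
    matches = []
    while i < len(index) and index[i][0] == email:
        matches.append(rows[index[i][1]])
        i += 1
    return matches

print(index_lookup("cy@example.com"))
```

Both functions return the same rows, but the scan touches every row while the lookup needs only a logarithmic number of comparisons, which is why the gap widens as tables grow.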

Types of Indexes:

- Primary Key Index: Automatically created when you define a primary key for a table. It ensures uniqueness and provides rapid access to individual rows. Every table should have a primary key.

- Unique Index: Similar to a primary key index but allows null values (depending on the database system) and can be created on columns that are not the primary key. It enforces uniqueness on the indexed column(s).

- Non-Unique Index: The most common type, created on columns frequently used in WHERE, JOIN, or ORDER BY clauses to speed up data retrieval.

- Clustered Index: (Specific to some databases like SQL Server) Determines the physical order of data rows in the table. A table can have only one clustered index, as the data can only be physically stored in one order. Often, the primary key is chosen as the clustered index; in SQL Server, defining a primary key creates a clustered index on it by default unless the table already has one. Its main benefit is speeding up range queries, because rows that are adjacent in index order are also stored next to each other.

- Non-Clustered Index: A separate structure that contains the indexed columns and pointers to the actual data rows. A table can have multiple non-clustered indexes.

When to Use Indexes:

- Columns in WHERE clauses: If you frequently filter data based on a column (e.g., WHERE status = 'active').

- Columns in JOIN conditions: Foreign key columns are prime candidates for indexing.

- Columns in ORDER BY and GROUP BY clauses: Indexes can help avoid costly sorting operations.

- Columns with high cardinality: Columns with a large number of unique values (e.g., email_address, product_id). Indexing low-cardinality columns (e.g., gender with two values) is generally less effective.

Trade-offs:

While indexes significantly speed up read operations (SELECTs), they come with costs:

- Storage Space: Indexes consume disk space.

- Write Performance Overhead: Every time data is inserted, updated, or deleted in an indexed column, the index itself must also be updated. Too many indexes can slow down INSERT, UPDATE, and DELETE operations.

The key is to strike a balance: index what's necessary, but don't over-index. Analyze your query patterns to identify the most critical columns.

Effective Query Writing: Crafting Efficient SQL

The way you write your SQL queries has a monumental impact on performance, often more so than any other factor. Even with perfect indexing and schema design, a poorly written query can cripple performance. Here are some critical guidelines for beginners:

- Select Only Necessary Columns:

  - Bad: SELECT * FROM Orders; (if you only need customer name and order date).

  - Good: SELECT customer_name, order_date FROM Orders;

  SELECT * retrieves all columns, including potentially large text fields or binary data that your application might not need. This increases network traffic, memory usage on both the server and client, and disk I/O. Be explicit about the columns you require.

- Use WHERE Clauses Effectively:

  - The WHERE clause is your primary tool for filtering data and is crucial for utilizing indexes.

  - Avoid functions on indexed columns in WHERE clauses:

    - Bad: SELECT * FROM Users WHERE YEAR(registration_date) = 2023; (This prevents the database from using an ordinary index on registration_date, because it has to calculate YEAR() for every row.)

    - Good: SELECT * FROM Users WHERE registration_date >= '2023-01-01' AND registration_date < '2024-01-01'; (This allows an index on registration_date to be used. The half-open range also covers timestamps late on December 31, which BETWEEN '2023-01-01' AND '2023-12-31' would miss if the column stores a time of day.)

  - Be specific: Narrow down your result set as much as possible at the earliest stage.

-

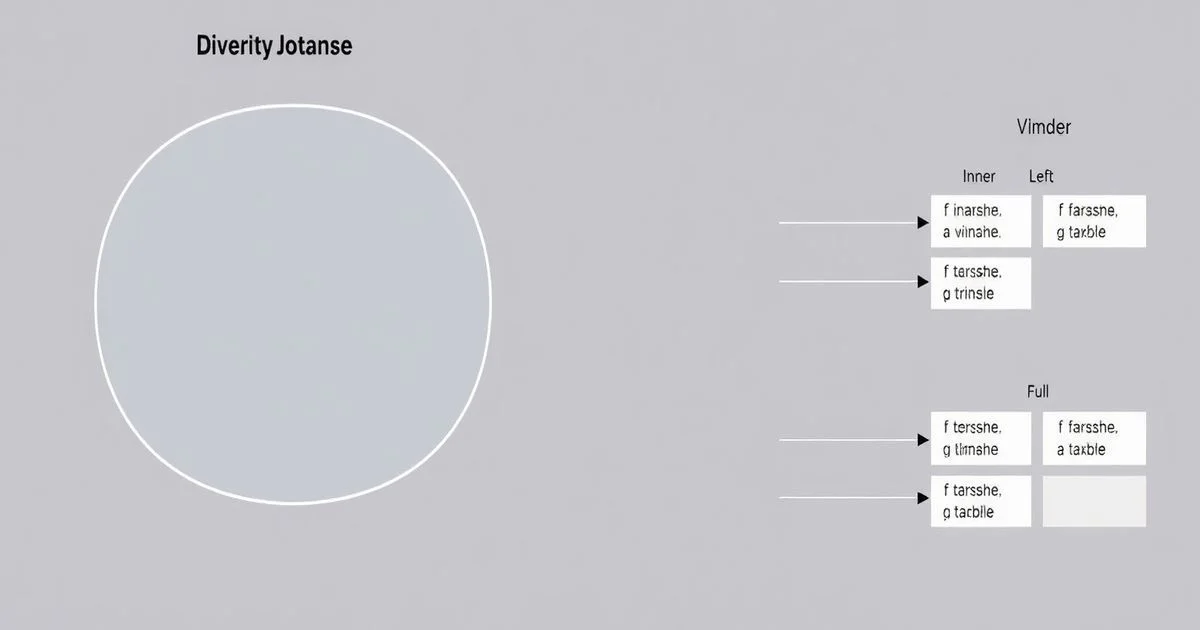

Understand

JOINTypes and Their Impact:INNER JOINis typically the most performant as it only returns rows where there's a match in both tables.LEFT JOIN(orLEFT OUTER JOIN) returns all rows from the left table and matching rows from the right. If no match, NULLs are returned for right table columns. This can be slower if the left table is very large and the join condition is not optimized.- Ensure

JOINcolumns are indexed: This is critical for fast join operations, especially on large tables.

- Prefer JOINs Over Subqueries for Filtering (Often):

  - While subqueries have their place, complex subqueries, especially in SELECT or WHERE clauses, can sometimes be less efficient than equivalent JOIN operations, particularly for older database optimizers.

  - Example (potentially less efficient subquery):

        SELECT customer_name FROM Customers WHERE customer_id IN (SELECT customer_id FROM Orders WHERE order_date = '2023-10-26');

  - Equivalent (often more efficient) JOIN:

        SELECT DISTINCT C.customer_name FROM Customers C JOIN Orders O ON C.customer_id = O.customer_id WHERE O.order_date = '2023-10-26';

  The database optimizer is typically very good at optimizing joins. However, always check the execution plan for your specific query.

- Use LIMIT for Pagination:

  - When fetching a subset of results for pagination (e.g., "show me results 11-20"), use LIMIT (and OFFSET if applicable) to retrieve only the required chunk.

  - Example: SELECT product_name FROM Products ORDER BY price DESC LIMIT 10 OFFSET 20; (Gets products 21-30.) This avoids sending millions of rows to the application only to discard most of them. Note that the database still has to step over the skipped rows, so very large OFFSET values get progressively slower, and an index on the ORDER BY column helps it avoid sorting the whole table.

- Avoid SELECT DISTINCT when GROUP BY or other methods suffice:

  - DISTINCT can be a costly operation, as the database must sort or hash the entire result set to remove duplicate rows.

  - If you're using DISTINCT on a column that is part of your GROUP BY clause anyway, it's often redundant.

  - Consider whether EXISTS or IN with a subquery, or a well-indexed JOIN, can achieve the same result without DISTINCT's overhead.
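The advice about functions on indexed columns can be checked directly against a query plan. The sketch below uses SQLite through Python's sqlite3 module, so strftime('%Y', ...) stands in for YEAR(), dates are stored as text, and the index name is an assumption made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Users (id INTEGER PRIMARY KEY, registration_date TEXT)")
conn.execute("CREATE INDEX idx_users_registration ON Users (registration_date)")
conn.executemany(
    "INSERT INTO Users (registration_date) VALUES (?)",
    [("2022-06-15",), ("2023-03-01",), ("2023-12-31 18:30:00",), ("2024-01-01",)],
)

def plan(sql):
    """Return the steps of SQLite's EXPLAIN QUERY PLAN for a statement."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]

# Function applied to the column: every row has to be evaluated.
wrapped = "SELECT id FROM Users WHERE strftime('%Y', registration_date) = '2023'"

# Plain range on the column: the index can be searched directly.
ranged = (
    "SELECT id FROM Users "
    "WHERE registration_date >= '2023-01-01' AND registration_date < '2024-01-01'"
)

wrapped_ids = sorted(conn.execute(wrapped).fetchall())
ranged_ids = sorted(conn.execute(ranged).fetchall())

print(plan(wrapped), wrapped_ids)
print(plan(ranged), ranged_ids)
```

Both queries return the same two rows, including the one registered late on December 31, but only the range form shows up in the plan as an index search rather than a scan.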

By adopting these habits in your SQL writing, you'll naturally guide the database optimizer towards more efficient execution plans, leading to significant performance gains.
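As a small check on the subquery and JOIN forms discussed above, the following sketch runs both against a made-up data set in SQLite (via Python's sqlite3 module) and also shows what happens when DISTINCT is left off the JOIN. The customer names and orders are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Customers (customer_id INTEGER PRIMARY KEY, customer_name TEXT);
    CREATE TABLE Orders (
        order_id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES Customers (customer_id),
        order_date TEXT
    );
    CREATE INDEX idx_orders_customer ON Orders (customer_id);
    INSERT INTO Customers VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Linus');
    INSERT INTO Orders VALUES
        (1, 1, '2023-10-26'), (2, 1, '2023-10-26'),
        (3, 2, '2023-10-25'), (4, 3, '2023-10-26');
""")

subquery_sql = """
    SELECT customer_name FROM Customers
    WHERE customer_id IN (SELECT customer_id FROM Orders WHERE order_date = '2023-10-26')
    ORDER BY customer_name
"""
join_sql = """
    SELECT DISTINCT C.customer_name
    FROM Customers C JOIN Orders O ON C.customer_id = O.customer_id
    WHERE O.order_date = '2023-10-26'
    ORDER BY C.customer_name
"""
# Same JOIN without DISTINCT: one output row per matching order.
join_no_distinct_sql = join_sql.replace("SELECT DISTINCT", "SELECT")

via_subquery = [name for (name,) in conn.execute(subquery_sql)]
via_join = [name for (name,) in conn.execute(join_sql)]
via_join_no_distinct = [name for (name,) in conn.execute(join_no_distinct_sql)]

print(via_subquery, via_join, via_join_no_distinct)
```

The subquery and the DISTINCT JOIN agree, while the plain JOIN repeats a customer who placed two orders that day. Which form is faster depends on the engine and the data, so the execution plan remains the deciding evidence.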

Schema Design Principles: The Foundation of Performance

An optimized database starts with a well-designed schema. Just as a strong building needs a solid foundation, a high-performing database relies on a logical, efficient structure. Poor schema design can negate the benefits of indexing and well-written queries.

- Normalization vs. Denormalization:

  - Normalization: The process of organizing the columns and tables of a relational database to minimize data redundancy and improve data integrity. It typically involves breaking down large tables into smaller, related tables (e.g., separating customer details from their orders).

    - Pros: Reduces data redundancy, improves data integrity, easier to maintain and update.

    - Cons: Often requires more JOIN operations to retrieve complete data, which can slow down read performance for complex queries.

  - Denormalization: Intentionally introducing redundancy into a database schema to improve read performance. This might involve duplicating data across tables or creating aggregated columns.

    - Pros: Faster read performance (fewer JOINs), simpler queries for common reports.

    - Cons: Increased data redundancy, higher risk of data inconsistency, more complex write operations.

  - Beginner's Rule: Start with a normalized design (e.g., 3rd Normal Form) to ensure data integrity. Only consider denormalization for specific tables or columns after identifying a performance bottleneck that can't be solved by indexing or query tuning. Premature denormalization can lead to more problems than it solves.

- Choosing Appropriate Data Types:

  - Use the smallest possible data type that can accurately store the data:

    - For integer IDs, INT is usually sufficient; use BIGINT only if necessary. Avoid VARCHAR for numbers.

    - For fixed-length strings (e.g., postal codes of a specific format), CHAR can be more efficient than VARCHAR in some systems, though VARCHAR is often preferred for its flexibility.

    - Use BOOLEAN for true/false values where the database has a native boolean type, rather than a text or wider integer column. (In MySQL, BOOLEAN is simply an alias for TINYINT(1).)

    - Use DATE, TIME, DATETIME, or TIMESTAMP for dates and times, not VARCHAR.

  - Why it matters: Smaller data types require less storage space (on disk and in memory), which means the database can fetch more rows into memory at once, reducing I/O and improving query speed. It also impacts index size and efficiency.

- Use Primary Keys and Foreign Keys:

  - Primary Keys (PKs): Every table should have a primary key, ideally a simple, non-nullable, unique identifier. PKs are automatically indexed and are fundamental for fast data retrieval and ensuring data integrity.

  - Foreign Keys (FKs): Enforce referential integrity between tables (e.g., ensuring an order can only belong to an existing customer). More importantly for performance, FKs are frequently used in JOIN conditions, making them excellent candidates for indexing. Index foreign key columns unless your database already does so for you (MySQL's InnoDB creates these indexes automatically; PostgreSQL does not).

- Avoid Storing Large Binary Objects (BLOBs) Directly:

  - If your application needs to store large files (images, videos, documents), consider storing them in a file system or object store (e.g., AWS S3, local storage) and only storing the path/URL in the database.

  - Storing large BLOBs directly in the database can bloat table sizes, slow down backups, and significantly degrade performance when fetching rows that contain these large objects, even if you don't need the BLOB itself.

By paying attention to these schema design principles from the outset, you build a robust and performant database foundation that will serve your application well as it grows.
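A minimal normalized schema that follows these principles might look like the sketch below, again using SQLite through Python's sqlite3 module. The table and index names are illustrative; note that SQLite only enforces foreign keys when the foreign_keys pragma is switched on, and that it stores dates as text.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite-specific: FK checks are off by default

conn.executescript("""
    -- Customer details live in one place (normalized).
    CREATE TABLE Customers (
        customer_id   INTEGER PRIMARY KEY,
        customer_name TEXT NOT NULL
    );
    -- Orders reference customers instead of copying their details.
    CREATE TABLE Orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES Customers (customer_id),
        order_date  TEXT NOT NULL
    );
    -- Index the foreign key, since it is used in JOIN conditions.
    CREATE INDEX idx_orders_customer_id ON Orders (customer_id);
""")

conn.execute("INSERT INTO Customers VALUES (1, 'Ada')")
conn.execute("INSERT INTO Orders VALUES (1, 1, '2023-10-26')")

# An order for a customer that does not exist should be refused.
try:
    conn.execute("INSERT INTO Orders VALUES (2, 999, '2023-10-26')")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

order_count = conn.execute("SELECT COUNT(*) FROM Orders").fetchone()[0]
print(orphan_rejected, order_count)
```

The foreign key keeps orphaned orders out of the table, and the index on Orders.customer_id is what lets joins back to Customers avoid scanning every order.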

Advanced Techniques and Best Practices for Optimal Query Performance

Once you've grasped the fundamentals, you can explore more advanced techniques to squeeze even more performance out of your database. These often involve deeper analysis and configuration.

Analyzing Query Execution Plans: Unveiling Bottlenecks

The query execution plan is an invaluable tool for understanding how your database processes a query and, crucially, for identifying performance bottlenecks. It's the "report card" from the query optimizer, detailing the steps it will take. Most relational database systems (PostgreSQL, MySQL, SQL Server, Oracle) offer commands to display these plans.

How to Access and Interpret:

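- PostgreSQL: EXPLAIN SELECT ...; shows the estimated plan. EXPLAIN ANALYZE SELECT ...; also runs the query and reports actual timings and row counts, so take care with statements that modify data.

- MySQL: EXPLAIN SELECT ...;

- SQL Server: Run SET SHOWPLAN_ALL ON in its own batch, then the SELECT, then SET SHOWPLAN_ALL OFF (in SSMS or sqlcmd, separate the batches with GO on a line by itself), or use the graphical execution plan in SSMS.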

The plan will show operations like:

- Sequential Scan (or Table Scan): Reading every row in a table. This is often a sign of a missing index or an unoptimizable query.

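- Index Scan / Index Seek: Using an index to find specific rows instead of reading the table. This is generally good. Terminology differs between systems: PostgreSQL calls an index lookup an Index Scan, while SQL Server calls it an Index Seek and uses Index Scan for reading an entire index, which can cost nearly as much as a table scan.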

- Hash Join / Nested Loops Join / Merge Join: Different algorithms for joining tables. Understanding which is used can indicate if your join conditions are efficient.

- Sort: Operations that require sorting a large dataset can be expensive, especially if not supported by an index.

- Filter: Applying WHERE clause conditions.

Key things to look for in an execution plan:

- High-cost operations: Identify operations with high estimated costs (CPU, I/O) or actual execution times.

- Full Table Scans: If a large table is being scanned sequentially instead of using an index for a selective query, that's a red flag.

- Temporary tables/files: Indications that the database is resorting to creating temporary tables on disk for sorting or grouping, which is slow.

- Row estimates vs. actual rows: A significant discrepancy can mean outdated statistics, leading the optimizer to choose a poor plan.

By regularly examining execution plans for your critical queries, you gain insight into the database's thinking and can pinpoint exactly where optimizations are needed.
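Reading plans can be partly automated. The sketch below is one possible helper for SQLite (through Python's sqlite3 module) that flags two of the warning signs listed above: a scan that uses no index, and a temporary structure built for sorting. The helper name red_flags and the string matching on SQLite's plan text are assumptions of this example; other engines expose plans in different formats.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Orders (
        order_id INTEGER PRIMARY KEY,
        customer_id INTEGER,
        order_date TEXT
    );
    CREATE INDEX idx_orders_customer ON Orders (customer_id);
""")

def red_flags(sql):
    """Return plan steps that look expensive in SQLite's EXPLAIN QUERY PLAN."""
    flags = []
    for row in conn.execute("EXPLAIN QUERY PLAN " + sql):
        detail = row[3]
        if detail.startswith("SCAN") and "INDEX" not in detail:
            flags.append("full scan: " + detail)
        if "TEMP B-TREE" in detail:
            flags.append("extra sort: " + detail)
    return flags

# Filter on an indexed column: nothing to report.
indexed = red_flags("SELECT order_id FROM Orders WHERE customer_id = 42")

# Sort on an unindexed column: table scan plus a temporary sort structure.
unindexed = red_flags("SELECT order_id, order_date FROM Orders ORDER BY order_date")

print(indexed)
print(unindexed)
```

A check like this could run in a test suite against critical queries, so that a dropped index or a rewritten query that falls back to a full scan is noticed early.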

Caching Strategies: Keeping Hot Data Handy

Caching involves storing frequently accessed data in a faster, more accessible location (usually memory) than its primary storage (disk). This significantly reduces the need to hit the slower disk, speeding up subsequent requests for the same data.

- Database-Level Caching:

  - Most modern database systems have built-in caching mechanisms, such as a buffer pool or shared buffers. This cache holds recently accessed data and index pages in memory. The larger and more efficiently configured this cache, the more data can be served from memory, drastically reducing disk I/O.

  - Query Cache (MySQL, removed): Some databases used to have a query cache that stored the exact results of SELECT statements. MySQL deprecated its query cache in 5.7 and removed it in 8.0 because of contention issues and the difficulty of invalidating results when data changes. Modern optimizers and buffer pools are generally more effective.

- Application-Level Caching:

  - Your application can implement its own caching layer using in-memory data stores like Redis or Memcached.

  - How it works: When the application needs data, it first checks the cache. If the data is found (a "cache hit"), it's returned immediately. If not (a "cache miss"), the application queries the database, retrieves the data, and then stores it in the cache for future requests before returning it to the user.

  - Use Cases: Frequently accessed, relatively static data (e.g., product catalogs, user profiles, configuration settings).

  - Challenges: Cache invalidation (ensuring cached data is always fresh) and cache consistency (ensuring all application instances see the same cached data) are complex challenges that need careful design.

By intelligently deploying caching at both the database and application layers, you can significantly offload your database and serve data at lightning speed for repeat requests.

Database Configuration Tuning: Beyond the Defaults

Out-of-the-box database configurations are designed for broad compatibility, not necessarily for peak performance for your specific workload. Tuning configuration parameters can unlock significant gains. This often requires a deeper understanding of your database system and workload characteristics.

Common Parameters to Consider (examples, specific names vary by DB):

- Memory Allocation:

  - shared_buffers (PostgreSQL), innodb_buffer_pool_size (MySQL): Controls the amount of memory allocated for caching data blocks. This is often the single most important parameter.

  - work_mem (PostgreSQL), sort_buffer_size (MySQL): Memory allocated for internal sort operations. These limits apply per operation or per session rather than server-wide, so large values multiply under concurrency.

- Concurrency:

  - max_connections: The maximum number of concurrent client connections.

  - max_locks_per_transaction (PostgreSQL): Sizes the shared lock table, expressed as the average number of object locks per transaction.

- I/O Settings:

  - wal_buffers (PostgreSQL), innodb_log_file_size (MySQL): Size of write-ahead log buffers/files.

- Query Optimizer Settings:

  - Parameters related to optimizer costs (e.g., seq_page_cost, random_page_cost in PostgreSQL), though these are generally left at defaults unless you're an expert.

Important Note:

Modifying database configuration parameters without understanding their impact can lead to instability or even data corruption. Always test changes in a staging environment before applying them to production, and back up your configuration files. Consult your database system's official documentation for detailed guidance.

Regular Maintenance: Keeping the Engine Running Smoothly

Databases, like any complex system, require regular maintenance to operate at peak efficiency. Neglecting maintenance can lead to performance degradation over time.

- Updating Statistics:

  - The query optimizer relies heavily on statistics about the data distribution within tables and indexes. If these statistics are outdated (e.g., after many inserts/updates/deletes), the optimizer might choose inefficient execution plans.

  - Action: Regularly run commands like ANALYZE (PostgreSQL), ANALYZE TABLE (MySQL), or UPDATE STATISTICS (SQL Server) to refresh these statistics. Many databases do this automatically, but manual intervention might be needed for highly volatile tables.

- Index Rebuilding/Reorganizing:

  - Over time, indexes can become fragmented, meaning their physical storage order no longer matches their logical order. This can lead to inefficient disk I/O.

  - Action: Periodically rebuild or reorganize indexes.

    - Rebuild: Drops and recreates the index, removing fragmentation and updating statistics. More resource-intensive.

    - Reorganize: Defragments the index in place. Less resource-intensive but might not achieve the same level of optimization as a rebuild.

    - The need for this varies by database system and workload. Some modern databases handle fragmentation more efficiently.

- Vacuuming (PostgreSQL):

  - PostgreSQL uses a Multi-Version Concurrency Control (MVCC) architecture. When rows are updated or deleted, the old versions aren't immediately removed; they become "dead tuples." VACUUM marks the space occupied by dead tuples as reusable and prevents transaction ID wraparound issues.

  - Action: Autovacuum is usually enabled and handles this automatically, but understanding its role is important for troubleshooting.

- Log File Management:

  - Ensure database transaction logs (e.g., WAL in PostgreSQL, redo logs in Oracle) don't grow excessively large and are properly backed up and truncated. Unmanaged logs can consume vast disk space and impact performance during recovery.

Implementing a consistent database maintenance schedule is crucial for sustained optimal performance and database health.
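To see what "refreshing statistics" produces, the sketch below runs ANALYZE in SQLite through Python's sqlite3 module and reads back what was collected. SQLite keeps its statistics in a table named sqlite_stat1; PostgreSQL, MySQL, and SQL Server store theirs elsewhere and in different formats, so treat this only as an illustration of the idea. The table, index name, and data are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER)")
conn.execute("CREATE INDEX idx_orders_customer ON Orders (customer_id)")
conn.executemany(
    "INSERT INTO Orders (customer_id) VALUES (?)",
    [(i % 10,) for i in range(1000)],  # 1000 orders spread over 10 customers
)

def index_stats():
    """Return the statistics SQLite holds for the example index, if any."""
    try:
        return conn.execute(
            "SELECT stat FROM sqlite_stat1 WHERE idx = 'idx_orders_customer'"
        ).fetchall()
    except sqlite3.OperationalError:
        return []  # the statistics table does not exist before the first ANALYZE

before = index_stats()
conn.execute("ANALYZE")   # gather statistics for the optimizer
after = index_stats()

print(before)
print(after)
```

Before ANALYZE the optimizer has only built-in guesses; afterwards it has a row count and an estimate of how many rows share each indexed value, which is the kind of information it uses to decide between an index and a scan.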

Real-World Impact and Case Studies

Optimizing database query performance isn't just an academic exercise; it has tangible, significant impacts in the real world. From saving millions in infrastructure costs to dramatically improving user satisfaction, the benefits are clear.

Case Study 1: E-commerce Product Search Optimization

An online retail giant was experiencing slow product searches, with average response times of 3-5 seconds for complex queries involving multiple filters and sorting. This led to high bounce rates and abandoned carts.

- Challenge: A large product catalog (millions of items) and complex JOINs across products, categories, attributes, and inventory tables.

- Solution:

  - Analyzed Execution Plans: Identified full table scans on large attribute and inventory tables.

  - Strategic Indexing: Created composite indexes on frequently filtered and joined columns (e.g., (category_id, price_range) on products, (product_id, available_stock) on inventory). Indexed foreign key columns.

  - Query Rewriting: Replaced subqueries with INNER JOINs where appropriate and ensured WHERE clauses were selective and index-friendly.

  - Denormalization (Selective): For highly accessed product data (e.g., avg_rating, review_count), a few aggregated columns were added to the products table, updated asynchronously.

- Result: Average search response times dropped to under 500 milliseconds. This translated to a 15% increase in conversion rates and a projected annual revenue increase of over $5 million due to improved user experience.

Case Study 2: Financial Reporting System Acceleration

A financial institution relied on daily batch reports generated from a large transaction database. These reports, crucial for regulatory compliance and business intelligence, were taking 8-10 hours to complete overnight, often delaying morning operations.

- Challenge: Processing billions of transaction records, complex aggregations (SUM, AVG, COUNT) across multiple dimensions, and historical data analysis.

- Solution:

  - Data Partitioning: Implemented range partitioning on the transaction_date column of the main transactions table. This allowed queries for specific date ranges to only scan relevant partitions, not the entire table.

  - Materialized Views: Created materialized views (pre-computed summary tables) for common aggregations (e.g., daily totals by account type, monthly summaries by region). These views were refreshed incrementally or on a schedule, drastically speeding up report generation by avoiding real-time computation over raw data.

  - Database Configuration Tuning: Increased shared_buffers and work_mem to allow more data and sorting operations to occur in memory.

- Result: Report generation time was reduced from 8-10 hours to less than 2 hours, ensuring reports were ready before the start of the trading day and reducing operational risk. The organization also realized a significant reduction in compute resource usage.

These examples illustrate that focused optimization efforts, combining indexing, query rewriting, and thoughtful schema/system configuration, can yield substantial benefits in terms of performance, cost savings, and business impact.

Pitfalls to Avoid and Common Misconceptions

While optimizing query performance is important, it's equally important to be aware of common pitfalls and misconceptions that can derail your efforts or even introduce new problems.

1. Over-Indexing: The "More is Better" Trap

Misconception: If one index is good, ten must be great!

Reality: Too many indexes can severely degrade write performance (INSERT, UPDATE, DELETE). Every time data changes in an indexed column, all associated indexes must also be updated. This overhead can become substantial on write-heavy tables. Additionally, indexes consume disk space and memory, and the query optimizer itself can struggle to choose the best plan when faced with too many choices, potentially leading to slower queries.

Guidance: Index strategically. Focus on columns used in WHERE, JOIN, and ORDER BY clauses of your most critical read queries. Regularly review index usage statistics to identify unused indexes that can be dropped.

2. Premature Optimization

Misconception: Optimize every query and table from day one.

Reality: Optimizing before a problem exists is a waste of time and can lead to over-engineered solutions. It's often impossible to predict true bottlenecks without real data and real user loads.

Guidance: Build your application with a sensible, normalized schema and well-written, clear SQL. Monitor performance, and when a specific bottleneck is identified (e.g., a query is consistently slow, an endpoint is timing out), then focus your optimization efforts there. The 80/20 rule often applies: 80% of performance issues come from 20% of the queries.

3. Ignoring Execution Plans

Misconception: I know my query is fast because it returns results quickly on my small development dataset.

Reality: A query might run quickly on a few hundred or a few thousand rows, but completely collapse under millions or billions. Without checking the execution plan, you're guessing how the database is actually processing your request.

Guidance: Always review the execution plan for your critical queries, especially when testing with representative data volumes. It's the only way to truly understand what's happening under the hood.

4. Relying Solely on ORMs for Performance

Misconception: My Object-Relational Mapper (ORM) (e.g., SQLAlchemy, Entity Framework, Hibernate) handles all optimization automatically.

Reality: While ORMs simplify database interactions, they can sometimes generate inefficient SQL, especially for complex queries. Over-reliance can lead to the "N+1 query problem" (fetching one parent record, then N child records with N separate queries) or fetching more data than necessary.

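Guidance: Understand the SQL generated by your ORM. Use your ORM's eager-loading features (for example, Include() in Entity Framework, joinedload() or selectinload() in SQLAlchemy, or JOIN FETCH in Hibernate) to fetch related data in a single query or a small, fixed number of queries. Don't hesitate to drop down to raw SQL for performance-critical sections if the ORM isn't generating optimal queries.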
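To make the N+1 pattern concrete without tying it to a particular ORM, the sketch below reproduces it with plain SQL through Python's built-in sqlite3 module. The `authors` and `books` tables and the `run` helper are hypothetical. The first function mimics what lazy loading does behind the scenes, and the second mimics eager loading with a single JOIN.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE books (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
    INSERT INTO authors (id, name) VALUES (1, 'Ada'), (2, 'Grace'), (3, 'Edgar');
    INSERT INTO books (author_id, title) VALUES
        (1, 'Notes'), (1, 'Engines'), (2, 'Compilers'), (3, 'Relations');
""")

query_count = 0

def run(sql, params=()):
    # Every call is one round trip to the database, so count it.
    global query_count
    query_count += 1
    return conn.execute(sql, params).fetchall()

def titles_n_plus_one():
    # 1 query for the authors, then 1 more query per author.
    result = {}
    for author_id, name in run("SELECT id, name FROM authors"):
        rows = run("SELECT title FROM books WHERE author_id = ?", (author_id,))
        result[name] = sorted(title for (title,) in rows)
    return result

def titles_single_join():
    # One query returns every (author, book) pair.
    rows = run("""
        SELECT a.name, b.title
        FROM authors AS a
        LEFT JOIN books AS b ON b.author_id = a.id
    """)
    result = {}
    for name, title in rows:
        result.setdefault(name, [])
        if title is not None:  # authors without books still appear, with no titles
            result[name].append(title)
    return {name: sorted(titles) for name, titles in result.items()}

query_count = 0
lazy = titles_n_plus_one()
lazy_queries = query_count

query_count = 0
eager = titles_single_join()
eager_queries = query_count

print("N+1 approach:", lazy_queries, "queries")
print("JOIN approach:", eager_queries, "query")
```

Both functions return the same data. With three authors the difference is four queries versus one; with thousands of parent rows, the per-query round trips tend to dominate response time.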

5. Not Monitoring Database Performance

Misconception: Once it's fast, it stays fast.

Reality: Database performance can degrade over time due to data growth, changes in access patterns, or application updates. Without monitoring, you won't know when problems start.

Guidance: Implement continuous monitoring for key database metrics: CPU usage, memory usage, disk I/O, slow query logs, connection counts, and transaction rates. Use tools provided by your database system or third-party monitoring solutions. Early detection is key.
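Most database systems ship their own slow query log, and that is usually the right tool. To show the underlying idea, here is a minimal application-side sketch using Python's built-in sqlite3 module. The `TimedConnection` wrapper, the `events` table, and the threshold values are all hypothetical.

```python
import sqlite3
import time

class TimedConnection:
    """Wraps a sqlite3 connection and records queries slower than a threshold."""

    def __init__(self, conn, slow_threshold_seconds):
        self.conn = conn
        self.slow_threshold_seconds = slow_threshold_seconds
        self.slow_queries = []  # list of (sql, elapsed_seconds)

    def execute(self, sql, params=()):
        start = time.perf_counter()
        rows = self.conn.execute(sql, params).fetchall()
        elapsed = time.perf_counter() - start
        if elapsed >= self.slow_threshold_seconds:
            self.slow_queries.append((sql, elapsed))
        return rows

raw = sqlite3.connect(":memory:")
raw.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, kind TEXT)")
raw.executemany("INSERT INTO events (kind) VALUES (?)", [("click",)] * 1000)

# A threshold of 0.0 logs every query, which is only useful for demonstration.
db = TimedConnection(raw, slow_threshold_seconds=0.0)
counted = db.execute("SELECT COUNT(*) FROM events WHERE kind = ?", ("click",))

for sql, elapsed in db.slow_queries:
    print(f"{elapsed * 1000:.3f} ms  {sql}")
```

In practice the threshold would be set to something meaningful for the application, and the entries would go to a log or metrics system rather than an in-memory list.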

6. Misunderstanding Data Distribution

Misconception: An index on status will always speed up WHERE status = 'active'.

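Reality: If a column has very low cardinality or a heavily skewed distribution (e.g., a status column that is 'active' for 99% of rows), an index might not be used for the common value. The optimizer might determine that a full table scan is faster than scanning the index and then retrieving almost all rows from the table anyway. The same index can still help a query for a rare value, such as the remaining 1% of rows.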

Guidance: Be mindful of data distribution. Indexes are most effective on columns with high cardinality or when querying for a small subset of the data. Update statistics regularly to give the optimizer accurate information.
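A quick way to check data distribution before adding an index is to measure what fraction of rows each value accounts for. The sketch below uses Python's built-in sqlite3 module; the `accounts` table, its contents, and the `value_fractions` helper are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, status TEXT)")
conn.executemany(
    "INSERT INTO accounts (status) VALUES (?)",
    [("active",)] * 990 + [("suspended",)] * 10,
)

def value_fractions(table, column):
    # Table and column names are interpolated directly, so pass trusted identifiers only.
    total = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
    rows = conn.execute(
        f"SELECT {column}, COUNT(*) FROM {table} GROUP BY {column}"
    ).fetchall()
    return {value: count / total for value, count in rows}

fractions = value_fractions("accounts", "status")
for value, fraction in sorted(fractions.items()):
    print(f"{value}: {fraction:.1%} of rows")
```

Here a filter on 'active' matches nearly the whole table, so an index is unlikely to help that query, while a filter on 'suspended' matches a small subset and is a better fit for an index. On very large tables, a full GROUP BY is itself expensive, so sampling or the database's own statistics may be a better source.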

By being mindful of these pitfalls, beginners can navigate the optimization journey more effectively, avoiding common mistakes and building truly performant database systems.

The Future of Database Query Optimization

The landscape of database technology is continuously evolving, and so too are the approaches to query optimization. For those looking to stay ahead, understanding emerging trends is crucial.

- AI and Machine Learning in Database Systems:

  - A prominent trend is the integration of AI and ML into database systems to make them "self-tuning" or "autonomous". These systems analyze query workloads, identify patterns, predict future performance issues, and automatically suggest or even implement optimizations (e.g., creating new indexes, adjusting buffer sizes, rewriting queries).

  - Examples: Oracle's Autonomous Database, cloud-native databases leveraging AI for automatic scaling and performance tuning.

  - Impact: Reduces the manual effort required for database administration and optimization, making high performance more accessible.

- Cloud-Native and Serverless Databases:

  - Databases designed for cloud environments (e.g., Amazon Aurora, Google Cloud Spanner, Azure Cosmos DB) offer elastic scalability and often embed optimization features. Serverless databases abstract away server management, automatically scaling resources up and down based on demand, which can dynamically adjust to query loads.

  - Impact: Simplifies infrastructure management and provides built-in resilience and performance scaling.

- New Indexing Techniques and Data Structures:

  - Research continues into novel indexing methods beyond traditional B-trees, such as learned indexes (using machine learning models to predict data locations), space-partitioning indexes (for geospatial data), and specialized full-text search indexes.

  - Impact: Enables faster queries for increasingly complex data types and access patterns.

- Vector Databases and Hybrid Approaches:

  - With the rise of AI and large language models (LLMs), vector databases (or vector capabilities in existing databases) are gaining prominence. These store data as high-dimensional vectors, enabling similarity searches (e.g., finding images similar to a given image, or text passages semantically related to a query).

  - Impact: Expands the realm of database queries beyond traditional exact matches to encompass semantic and contextual searches, opening new optimization challenges and opportunities.

- In-Memory and Hybrid Transaction/Analytical Processing (HTAP) Databases:

  - In-memory databases (e.g., SAP HANA, Redis, VoltDB) keep their working data in RAM, which can make reads and writes dramatically faster than disk-based storage because most operations avoid disk I/O. HTAP systems aim to run both transactional (OLTP) and analytical (OLAP) workloads efficiently on a single database, often leveraging in-memory columnar stores.

  - Impact: Provides real-time analytics and ultra-low latency transactions, pushing the boundaries of what's possible with data.
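The similarity search mentioned under vector databases can be sketched in a few lines of plain Python. This is a brute-force version that compares the query against every stored vector using cosine similarity. The three-dimensional vectors and document names are made up for illustration; real systems use embeddings with hundreds or thousands of dimensions and typically rely on approximate nearest-neighbor indexes rather than a full scan.

```python
import math

def cosine_similarity(a, b):
    # 1.0 means the vectors point the same way; values near 0 mean they are unrelated.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(query, items, k=2):
    # Score every stored vector against the query and keep the k best matches.
    scored = [(cosine_similarity(query, vec), key) for key, vec in items.items()]
    scored.sort(reverse=True)
    return [key for _, key in scored[:k]]

# Hypothetical embeddings: two similar images and one unrelated document.
documents = {
    "cat_photo": [0.9, 0.1, 0.0],
    "dog_photo": [0.8, 0.3, 0.1],
    "invoice_pdf": [0.0, 0.1, 0.95],
}

print(nearest([1.0, 0.2, 0.0], documents))
```

The full scan is the part that does not scale, which is why vector indexing is an optimization topic in its own right.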

These trends suggest a future where database optimization becomes increasingly automated, intelligent, and specialized. While the core principles discussed in this guide will remain relevant, the tools and technologies available to implement them will continue to evolve rapidly. Staying informed about these advancements will be key for any aspiring database professional.

Conclusion

Learning to optimize database query performance is an invaluable skill for beginners, one that significantly impacts application responsiveness, user experience, and overall system efficiency. We've journeyed through the fundamental stages of query execution, explored crucial strategies like intelligent indexing, effective SQL writing, and robust schema design, and touched upon advanced techniques such as execution plan analysis, caching, and database configuration tuning.

Remember, optimization is an iterative process, not a one-time fix. It requires a blend of understanding database internals, vigilant monitoring, and continuous learning. By applying the principles outlined here, you can transform sluggish queries into high-speed operations, ensuring your applications run smoothly and efficiently. Embrace these foundational concepts, avoid common pitfalls, and stay curious about the evolving landscape of database technology. Your efforts in optimizing database query performance will undoubtedly lay a strong groundwork for building scalable and successful data-driven solutions.

Frequently Asked Questions

Q: What is a database index and why is it important for query performance?

A: A database index is a data structure that speeds up data retrieval operations on a database table. It acts like a book's index, allowing the database system to quickly locate specific rows without scanning the entire table, drastically improving query speed for filtered or sorted data.

Q: How does the database query optimizer improve performance?

A: The query optimizer analyzes SQL statements and generates the most efficient execution plan for retrieving data. It considers table statistics, available indexes, and data volumes to choose a plan that minimizes I/O operations and CPU time, leading to faster query execution.

Q: What are the main pitfalls beginners should avoid when optimizing database queries?

A: Beginners should avoid over-indexing, premature optimization, and ignoring execution plans. Over-indexing can slow down write operations, optimizing without a clear bottleneck is inefficient, and not analyzing execution plans means you're guessing at performance issues.