Introduction: Conquering Complexity with Dynamic Programming

- Introduction: Conquering Complexity with Dynamic Programming

- What is Dynamic Programming (DP)?

- The "Aha!" Moment: How DP Works in Practice

- Why is Dynamic Programming Important?

- When to Use Dynamic Programming

- Tips for Mastering Dynamic Programming

- Conclusion: Embrace the Power of DP

- Further Reading & Resources

Imagine facing a seemingly impossible problem, one that has countless solutions or requires immense computational power. What if there was a strategic way to break it down, solve smaller parts, and then perfectly assemble them for the optimal grand solution? Welcome to the world of Dynamic Programming (DP).

Often considered a challenging topic in computer science, Dynamic Programming is, at its heart, an elegant method for solving complex problems by dividing them into simpler subproblems. It's not just a technique; it's a way of thinking that can dramatically improve the efficiency of your algorithms. If you've ever struggled with problems that seem to repeat calculations or grow exponentially in complexity, DP offers a powerful path to clarity and speed.

What is Dynamic Programming (DP)?

At its core, Dynamic Programming is an optimization technique used primarily for problems that exhibit two key characteristics:

- Overlapping Subproblems: The problem can be broken down into smaller subproblems that are solved multiple times. Instead of recomputing the same subproblem repeatedly, DP stores the results and reuses them.

- Optimal Substructure: An optimal solution to the overall problem can be constructed from optimal solutions to its subproblems. This means if you find the best solution for each small piece, you can combine them to get the best solution for the whole.

Think of it like building a complex LEGO castle. Instead of figuring out how to build the same window frame 20 times, you design it once, build it, and then replicate that perfect design wherever needed. DP essentially makes your algorithm "remember" what it has already figured out.

The "Aha!" Moment: How DP Works in Practice

Dynamic Programming primarily leverages two approaches to avoid redundant computations: Memoization (Top-Down) and Tabulation (Bottom-Up). Let's illustrate with the classic Fibonacci sequence: F(n) = F(n-1) + F(n-2) with F(0)=0, F(1)=1. A naive recursive solution recalculates F(k) many times.

Memoization (Top-Down Approach)

Memoization is a top-down approach where you solve the problem recursively, but store the results of expensive function calls (subproblems) in a cache (e.g., a dictionary or array). When the same inputs occur again, you simply return the cached result instead of recomputing.

# Python example for Fibonacci with Memoization

memo = {} # Dictionary to store computed results

def fib_memo(n):

# Base cases

if n in memo: # Check if result is already computed

return memo[n]

if n <= 1:

return n

# Compute and store the result

result = fib_memo(n-1) + fib_memo(n-2)

memo[n] = result

return result

print(f"Fibonacci(10) with Memoization: {fib_memo(10)}") # Output: 55

print(f"Fibonacci(50) with Memoization: {fib_memo(50)}") # Efficiently computes larger numbers

In fib_memo, before computing F(n), we check if it's already in memo. If yes, we instantly return the stored value, saving significant computation. This transforms an exponential time complexity into linear time.

Tabulation (Bottom-Up Approach)

Tabulation is a bottom-up approach where you solve all the smaller subproblems first, typically by filling up a table (array), and then use those results to build up the solution for larger subproblems until you reach the final answer. It avoids recursion by using iteration.

Using the Fibonacci example again:

# Python example for Fibonacci with Tabulation

def fib_tab(n):

if n <= 1:

return n

dp = [0] * (n + 1) # Create a DP table (array) to store results

dp[0] = 0

dp[1] = 1

# Fill the table from bottom up

for i in range(2, n + 1):

dp[i] = dp[i-1] + dp[i-2] # Build up from smaller, already computed solutions

return dp[n]

print(f"Fibonacci(10) with Tabulation: {fib_tab(10)}") # Output: 55

print(f"Fibonacci(50) with Tabulation: {fib_tab(50)}") # Efficiently computes larger numbers

Here, dp[i] stores the i-th Fibonacci number. We start by explicitly setting dp[0] and dp[1], then iteratively calculate dp[2] using dp[0] and dp[1], then dp[3] using dp[1] and dp[2], and so on, until we reach dp[n].

Why is Dynamic Programming Important?

DP's significance lies in its ability to transform exponentially complex problems into polynomial time, making previously intractable problems solvable within reasonable time frames. It's a cornerstone of competitive programming and a critical skill for any serious programmer looking to optimize algorithms.

Common applications of Dynamic Programming are found across various domains:

- Knapsack Problem: Maximizing value of items within a weight capacity.

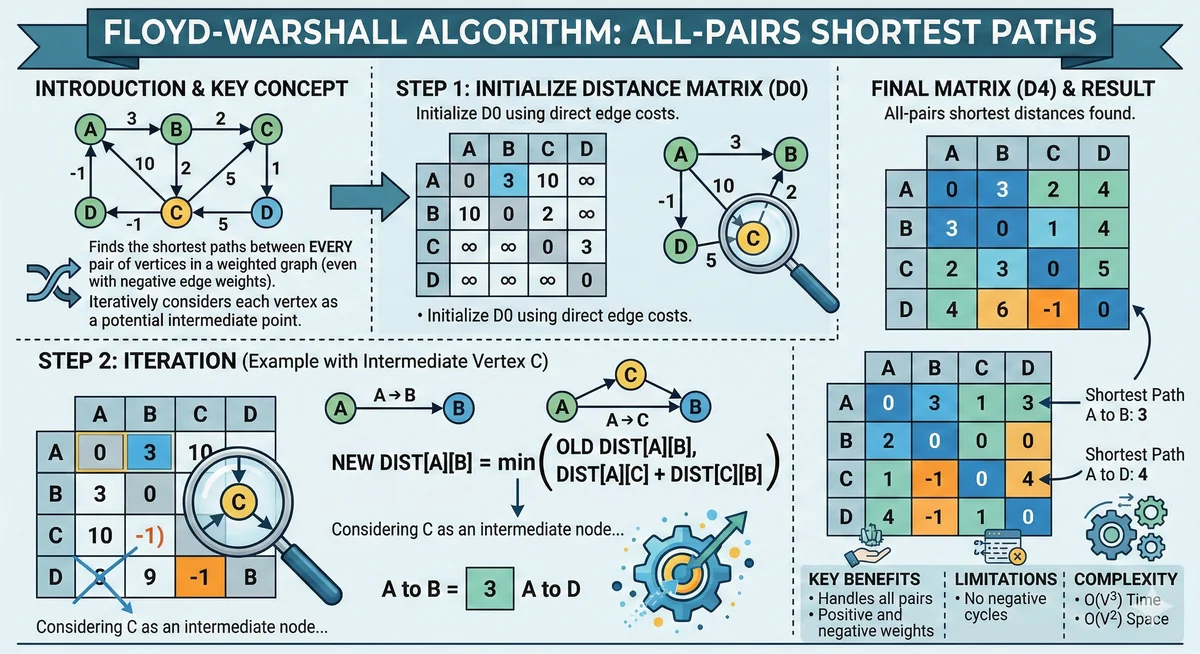

- Shortest Path Problems: In graph algorithms (e.g., Bellman-Ford, Floyd-Warshall).

- Longest Common Subsequence (LCS): Finding the longest sequence of characters common to two strings (used extensively in bioinformatics for DNA sequence alignment, and in version control systems).

- Coin Change Problem: Finding the minimum number of coins to make a given sum.

- Matrix Chain Multiplication: Optimizing the order of matrix multiplications to minimize operations.

When to Use Dynamic Programming

Before jumping into implementing a DP solution, always check if the problem exhibits the following characteristics:

- Recursive Structure: Can the problem be defined in terms of smaller instances of the same problem?

- Overlapping Subproblems: Does the naive recursive solution recompute the same subproblems many times? A recursion tree can help visualize this.

- Optimal Substructure: Can the optimal solution to the overall problem be constructed from optimal solutions of its subproblems?

If these conditions are met, DP is likely your best bet for an efficient solution!

Tips for Mastering Dynamic Programming

Dynamic Programming can feel like a riddle at first, but with a structured approach, it becomes much clearer:

- Understand Recursion First: DP is built upon recursive thinking. Master how to break a problem down recursively before optimizing.

- Identify Subproblems and Their State: This is often the trickiest part. What's the smallest, repeating unit of work? How can you parameterize it? (e.g.,

dp[i]for Fibonacci,dp[i][j]for LCS). - Draw Recursion Trees: Visualizing the overlapping subproblems and redundant calculations helps you understand where memoization or tabulation will be effective.

- Define the DP Relation (State Transition): Once you have your subproblems, how does the solution to a larger subproblem depend on the solutions of smaller ones? This is your

dp[i] = ...ordp[i][j] = ...equation. - Practice, Practice, Practice: Start with easy problems (Fibonacci, Coin Change, unique paths) and gradually move to harder ones (Longest Common Subsequence, Knapsack, Edit Distance). LeetCode, HackerRank, and other platforms offer ample problems.

- Don't Rush to Code: Spend ample time understanding the problem, identifying the subproblems, and defining the state transition before writing any code.

Conclusion: Embrace the Power of DP

Dynamic Programming might seem intimidating at first, but with a solid understanding of its core principles – overlapping subproblems and optimal substructure – and consistent practice, you'll unlock a powerful tool in your algorithmic arsenal. It's not just about passing coding interviews; it's about developing an elegant, efficient problem-solving mindset that will serve you throughout your programming career.

The ability to take a complex challenge, break it down, and solve it with optimal efficiency is a hallmark of an expert programmer. So, take the plunge! Start with a simple problem, draw that recursion tree, and witness the magic of Dynamic Programming for yourself. Your code (and CPU) will thank you.

Further Reading & Resources

- Dynamic Programming Overview - Wikipedia

- The Fibonacci Sequence - Wikipedia

- Knapsack Problem Explained - Wikipedia

- Longest Common Subsequence (LCS) - Wikipedia

- Memoization vs. Tabulation - GeeksforGeeks