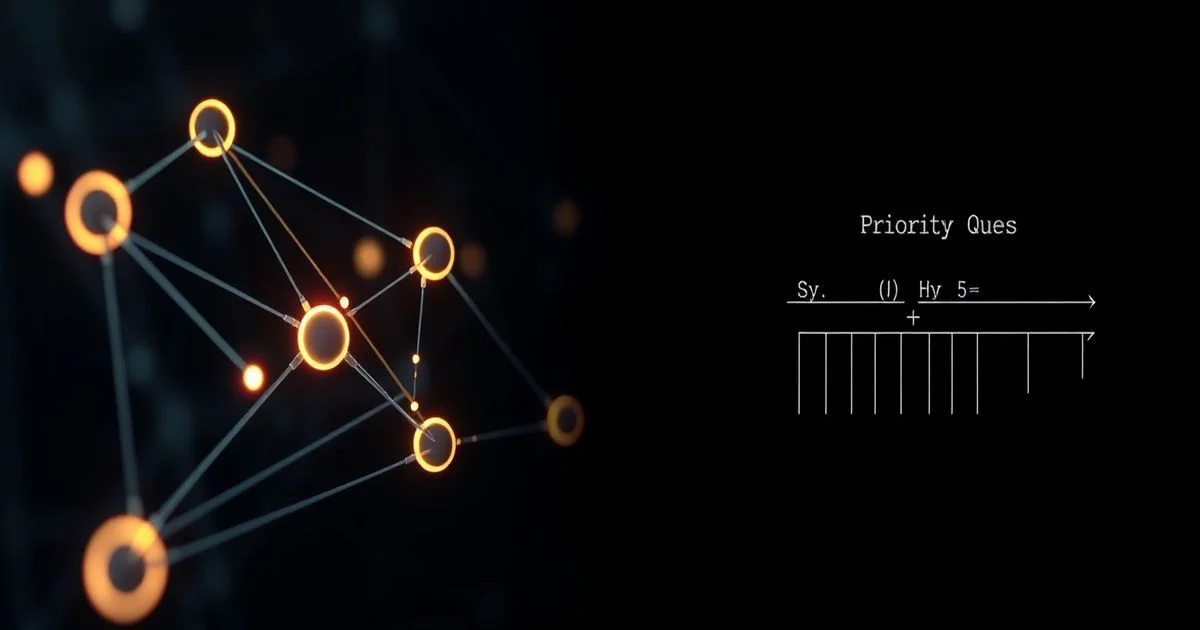

Implementing Heaps for Efficient Priority Queues: A Deep Dive

Many problems in computer science come down to managing items by priority. Whether you are scheduling tasks, finding shortest paths, or simulating complex events, you need to extract the "most important" element efficiently. The priority queue is the abstraction built for this job, and among its possible implementations the heap stands out as the most practical and performance-driven. This article takes a deep dive into the mechanics of heaps, the efficiency of their operations, and their use across a range of computational problems.

- What is a Priority Queue?

- Understanding Heaps: The Foundation

- Implementing Heaps for Efficient Priority Queues

- Heap-based Priority Queue Performance Analysis

- Real-World Applications and Use Cases

- Alternative Implementations and Why Heaps Excel

- Advanced Heap Variations and Optimizations

- Best Practices and Considerations

- Conclusion

- Frequently Asked Questions

- Further Reading & Resources

What is a Priority Queue?

At its core, a priority queue is an abstract data type akin to a regular queue or stack, but with a critical difference: elements are retrieved based on their priority. Unlike a First-In, First-Out (FIFO) queue or a Last-In, First-Out (LIFO) stack, a priority queue ensures that the element with the highest (or lowest) priority is always the first one to be dequeued, regardless of when it was enqueued.

Think of it as a waiting room where patients aren't called in the order they arrived, but rather based on the severity of their condition. A patient with a critical injury will be seen before someone with a minor ailment, even if the latter arrived earlier. In a computational context, "priority" can be anything from a numerical value (e.g., a cost in a shortest path algorithm, a timestamp in an event simulator) to an inherent property of the data itself.

The primary operations supported by a priority queue are:

- Enqueue (Insert): Adds a new element with an associated priority into the queue.

- Dequeue (Extract-Min/Max): Removes and returns the element with the highest (or lowest) priority. This is the defining operation.

- Peek (Find-Min/Max): Returns the element with the highest (or lowest) priority without removing it.

- IsEmpty: Checks if the priority queue contains any elements.

- Size: Returns the number of elements in the priority queue.

While simple arrays or linked lists can implement a priority queue, at least one key operation becomes expensive. A sorted array makes Enqueue O(n) because elements must shift to make room, while an unsorted array makes Dequeue O(n) because the min/max has to be found by scanning. Heaps provide an efficient middle ground, offering logarithmic time complexity for both operations.
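As a quick illustration of the operations listed above, here is a minimal sketch using Python's standard `heapq` module, which manages a plain list as a min-heap. The `waiting_room` name and the sample patients are made up for this example; lower numbers are assumed to mean higher urgency.

```python
import heapq

# A plain list managed by heapq acts as a min-priority queue.
# Entries are (priority, item) tuples; lower numbers are served first.
waiting_room = []

# Enqueue: O(log n) each
heapq.heappush(waiting_room, (3, "sprained ankle"))
heapq.heappush(waiting_room, (1, "cardiac arrest"))
heapq.heappush(waiting_room, (2, "broken arm"))

size = len(waiting_room)              # Size
next_up = waiting_room[0]             # Peek: the smallest entry sits at index 0
first = heapq.heappop(waiting_room)   # Dequeue (Extract-Min): O(log n)
is_empty = not waiting_room           # IsEmpty
```

Although "cardiac arrest" was enqueued second, it is dequeued first because it has the smallest priority value.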

Understanding Heaps: The Foundation

Before we delve into the specifics of implementing priority queues, it's essential to grasp the structure and properties of a heap. A heap is a specialized tree-based data structure that satisfies the "heap property" and the "shape property." It's typically implemented as an array, taking advantage of the binary tree structure to efficiently manage parent-child relationships.

Heap Properties:

- Shape Property: A heap is always a complete binary tree. This means all levels of the tree are fully filled, except possibly the last level, which is filled from left to right. This property is crucial because it allows heaps to be efficiently represented using an array, eliminating the need for explicit pointers.

- Heap Property: This property dictates the ordering of elements within the heap.

  - Min-Heap: For every node i other than the root, the value of i is greater than or equal to the value of its parent p. This ensures that the smallest element is always at the root.

  - Max-Heap: For every node i other than the root, the value of i is less than or equal to the value of its parent p. This ensures that the largest element is always at the root.

Min-Heap vs. Max-Heap

The choice between a min-heap and a max-heap depends entirely on the application's requirement for priority.

- Min-Heap: If your priority queue needs to always extract the smallest element (e.g., shortest path, closest event, lowest cost), a min-heap is the appropriate choice. The root of a min-heap always contains the minimum value.

- Max-Heap: If your priority queue needs to always extract the largest element (e.g., highest priority task, largest value), a max-heap is appropriate. The root of a max-heap always contains the maximum value.

For the remainder of this article, unless specified otherwise, we will primarily discuss min-heaps, as they are more commonly used in algorithms like Dijkstra's or Prim's, which often seek minimal costs or distances. The concepts, however, are symmetric for max-heaps.

Heap Properties and Representation

The beauty of heaps lies in their array-based representation. Because a complete binary tree has a predictable structure, we don't need to store explicit left/right child pointers. Instead, the relationships can be calculated using simple arithmetic:

Array Representation of a Heap:

If we store the elements of a heap in an array A, where the root is at index 0:

- The parent of node i is at index (i - 1) // 2 (integer division).

- The left child of node i is at index 2 * i + 1.

- The right child of node i is at index 2 * i + 2.

This compact representation saves memory and allows for very fast access to parent and child nodes. For example, consider a min-heap stored in an array:

[10, 20, 30, 40, 50, 60, 70]

- Node at index 0 (value 10) is the root.

- The left child of index 0 is at index 2 * 0 + 1 = 1 (value 20). The right child of index 0 is at index 2 * 0 + 2 = 2 (value 30).

- The parent of index 1 (value 20) is at index (1 - 1) // 2 = 0 (value 10).

- All heap properties are maintained: 10 < 20, 10 < 30, 20 < 40, 20 < 50, etc.

The critical advantage of this representation is its efficiency. Operations involve minimal memory access and simple arithmetic, which translates to superior performance in most scenarios.
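The index arithmetic can be sketched in a few lines of Python. The helper names `parent`, `left`, `right`, and `is_min_heap` are chosen for this illustration and are not part of any library.

```python
def parent(i: int) -> int:
    """Index of the parent of node i (valid for i > 0)."""
    return (i - 1) // 2


def left(i: int) -> int:
    """Index of the left child of node i."""
    return 2 * i + 1


def right(i: int) -> int:
    """Index of the right child of node i."""
    return 2 * i + 2


def is_min_heap(a: list) -> bool:
    """Check the min-heap property: every non-root node is >= its parent."""
    return all(a[parent(i)] <= a[i] for i in range(1, len(a)))


example = [10, 20, 30, 40, 50, 60, 70]
valid = is_min_heap(example)
```

Because the shape property is implied by storing the elements contiguously, checking the ordering against each parent is enough to validate an array as a heap.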

Implementing Heaps for Efficient Priority Queues

The core of a heap-based priority queue lies in two primary operations: insert (or enqueue) and extract-min (or dequeue). Both operations maintain the heap properties by "bubbling up" or "bubbling down" elements to their correct positions.

Core Operations: Insertion (Enqueue)

When a new element is inserted into a min-heap:

- Place at the end: The new element is initially added to the next available position in the array (the end of the heap). This maintains the shape property.

- Bubble Up (Heapify-Up): The newly inserted element might violate the heap property if its value is smaller than its parent's. To restore the heap property, the element is repeatedly compared with its parent. If it's smaller, it's swapped with its parent. This process continues upwards towards the root until the element is greater than or equal to its parent, or it reaches the root.

Example of Insertion (Min-Heap):

Let's insert 5 into the heap [10, 20, 30, 40, 50, 60, 70].

- Add 5 to the end: [10, 20, 30, 40, 50, 60, 70, 5]

- 5 (at index 7) is compared with its parent 40 (at index (7 - 1) // 2 = 3). 5 < 40, so swap: [10, 20, 30, 5, 50, 60, 70, 40]

- 5 (at index 3) is compared with its parent 20 (at index (3 - 1) // 2 = 1). 5 < 20, so swap: [10, 5, 30, 20, 50, 60, 70, 40]

- 5 (at index 1) is compared with its parent 10 (at index (1 - 1) // 2 = 0). 5 < 10, so swap: [5, 10, 30, 20, 50, 60, 70, 40]

- 5 is now at the root (index 0), so the process stops. The heap property is restored.

The "bubble up" operation takes at most O(log n) time, as it traverses a path from a leaf to the root, and the height of a complete binary tree with n nodes is O(log n).
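The insertion steps can be sketched as follows. This is a minimal illustration operating on a plain Python list; the function name `heap_insert` is an assumption made for this example.

```python
def heap_insert(heap: list, value) -> None:
    """Insert value into a min-heap stored in a list, in place."""
    heap.append(value)              # Step 1: place at the end (keeps the shape property)
    i = len(heap) - 1
    while i > 0:                    # Step 2: bubble up until the parent is not larger
        p = (i - 1) // 2
        if heap[i] >= heap[p]:
            break
        heap[i], heap[p] = heap[p], heap[i]
        i = p


heap = [10, 20, 30, 40, 50, 60, 70]
heap_insert(heap, 5)
# heap is now [5, 10, 30, 20, 50, 60, 70, 40]
```

The loop performs at most one swap per tree level, which is where the O(log n) bound comes from.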

Core Operations: Extraction (Dequeue/Pop)

Extracting the minimum (or maximum) element from a heap is a bit more involved:

- Store the root: The minimum element is always at the root of a min-heap. Store this value to return later.

- Replace root with last element: To maintain the shape property, the element at the last position of the array is moved to the root. The last position is then removed (effectively decreasing the heap's size).

- Bubble Down (Heapify-Down): The new root element might violate the heap property. To restore it, the element is repeatedly compared with its children. If it's larger than either child (in a min-heap), it's swapped with the smaller of its two children. This process continues downwards until the element is smaller than or equal to both its children, or it becomes a leaf node.

Example of Extraction (Min-Heap):

Let's extract the minimum from the heap [5, 10, 30, 20, 50, 60, 70, 40].

- Store root 5.

- Replace root with 40 (the last element) and remove 40 from its original position. The new heap (conceptually) is [40, 10, 30, 20, 50, 60, 70].

- 40 (at index 0) is compared with its children 10 (index 1) and 30 (index 2). 10 is smaller than 30. 40 > 10, so swap 40 with 10: [10, 40, 30, 20, 50, 60, 70]

- 40 (at index 1) is compared with its children 20 (index 2 * 1 + 1 = 3) and 50 (index 2 * 1 + 2 = 4). 20 is smaller than 50. 40 > 20, so swap 40 with 20: [10, 20, 30, 40, 50, 60, 70]

- 40 (at index 3) would have children at indices 7 and 8, but those are out of bounds for the current heap size, so 40 is now a leaf node. The process stops. The heap property is restored.

- Return the stored value 5.

The "bubble down" operation also takes at most O(log n) time, as it traverses a path from the root to a leaf, which is the height of the tree.
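A minimal sketch of extraction on a list-backed min-heap is shown below. The function name `heap_extract_min` is an assumption for this example, and raising `IndexError` on an empty heap is one possible design choice among several.

```python
def heap_extract_min(heap: list):
    """Remove and return the smallest element of a min-heap stored in a list."""
    if not heap:
        raise IndexError("extract from an empty heap")
    smallest = heap[0]              # Step 1: store the root
    last = heap.pop()               # Step 2: remove the last element...
    if heap:
        heap[0] = last              # ...and move it to the root
        i, n = 0, len(heap)
        while True:                 # Step 3: bubble down
            child = i
            l, r = 2 * i + 1, 2 * i + 2
            if l < n and heap[l] < heap[child]:
                child = l
            if r < n and heap[r] < heap[child]:
                child = r
            if child == i:          # neither child is smaller: done
                break
            heap[i], heap[child] = heap[child], heap[i]
            i = child
    return smallest


heap = [5, 10, 30, 20, 50, 60, 70, 40]
minimum = heap_extract_min(heap)
# minimum is 5 and heap is now [10, 20, 30, 40, 50, 60, 70]
```

Swapping with the smaller child matters: swapping with the larger one would place a value above a sibling that is smaller than it, breaking the heap property.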

Core Operations: Peek/Find Min/Max

This operation is straightforward: simply return the value at the root of the heap (index 0 in the array representation). It does not modify the heap.

Time Complexity: O(1)

Core Operations: Decrease/Increase Key

These operations are crucial in algorithms like Dijkstra's, where the priority of an element already in the queue might change.

- Decrease Key (Min-Heap): Find the element, update its priority to a smaller value, then perform a bubble up operation from that element's position. This is because a decreased key might now be smaller than its parent, violating the heap property upwards.

- Increase Key (Min-Heap): Find the element, update its priority to a larger value, then perform a bubble down operation from that element's position. This is because an increased key might now be larger than its children, violating the heap property downwards.

The challenge with these operations is efficiently finding the element whose priority needs to be changed. If the heap only supports value-based operations, finding an arbitrary element typically requires O(n) traversal. To achieve O(log n) for decrease-key (which is often required), the priority queue implementation usually needs to store additional metadata, such as a mapping from the actual item to its current index in the heap array. This is common in many advanced graph algorithms.
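One way to keep that item-to-index mapping is sketched below. The class name `IndexedMinHeap` and its methods are hypothetical, written for this illustration only; it assumes items are hashable and unique.

```python
class IndexedMinHeap:
    """Min-heap of [priority, item] pairs with a position map for decrease-key."""

    def __init__(self):
        self._heap = []   # list of [priority, item]
        self._pos = {}    # item -> current index in self._heap

    def _swap(self, i, j):
        h = self._heap
        h[i], h[j] = h[j], h[i]
        self._pos[h[i][1]] = i      # keep the map in sync on every swap
        self._pos[h[j][1]] = j

    def _sift_up(self, i):
        while i > 0:
            p = (i - 1) // 2
            if self._heap[i][0] >= self._heap[p][0]:
                break
            self._swap(i, p)
            i = p

    def _sift_down(self, i):
        n = len(self._heap)
        while True:
            smallest = i
            for c in (2 * i + 1, 2 * i + 2):
                if c < n and self._heap[c][0] < self._heap[smallest][0]:
                    smallest = c
            if smallest == i:
                return
            self._swap(i, smallest)
            i = smallest

    def push(self, item, priority):
        if item in self._pos:
            raise KeyError(f"{item!r} is already in the heap")
        self._heap.append([priority, item])
        self._pos[item] = len(self._heap) - 1
        self._sift_up(len(self._heap) - 1)

    def decrease_key(self, item, new_priority):
        i = self._pos[item]                      # O(1) lookup instead of O(n) scan
        if new_priority > self._heap[i][0]:
            raise ValueError("new priority is larger than the current one")
        self._heap[i][0] = new_priority
        self._sift_up(i)                         # O(log n)

    def pop(self):
        priority, item = self._heap[0]
        self._swap(0, len(self._heap) - 1)       # move the root to the end
        self._heap.pop()
        del self._pos[item]
        if self._heap:
            self._sift_down(0)
        return item, priority


pq = IndexedMinHeap()
pq.push("a", 5)
pq.push("b", 3)
pq.push("c", 8)
pq.decrease_key("c", 1)   # "c" now outranks both "a" and "b"
```

The cost of this design is that every swap also updates the dictionary, so the constant factors are higher than for a plain array heap.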

Heap-based Priority Queue Performance Analysis

The efficiency of heap-based priority queues is a major reason for their widespread adoption. Let's break down the time and space complexity of their fundamental operations.

Time Complexity

- Enqueue (Insertion): O(log n)

  - Adding an element to the end of the array is O(1).

  - The subsequent bubble up operation traverses at most the height of the tree, which is log n. Hence, the total time is O(log n).

- Dequeue (Extract-Min/Max): O(log n)

  - Accessing the root element is O(1).

  - Replacing the root with the last element and adjusting the array is O(1).

  - The bubble down operation traverses at most the height of the tree, which is log n. Hence, the total time is O(log n).

- Peek (Find-Min/Max): O(1)

  - Directly accessing the root element in the array takes constant time.

- Decrease/Increase Key: O(log n)

  - Assuming the position of the element to be updated is known (e.g., through an auxiliary lookup table), updating its value is O(1).

  - The subsequent bubble up or bubble down operation takes O(log n). Without knowing the element's position, finding it would be O(n).

Compared to alternative priority queue implementations:

- Unsorted List/Array: Insertion is O(1), but Extract-Min/Max is O(n) because the whole list must be scanned.

- Sorted List/Array: Extract-Min/Max is O(1), but insertion is O(n) because elements must shift to keep the order.

- Binary Search Tree (BST): Insertion and Extract-Min/Max are both O(h), where h is the height of the tree. That is O(log n) on average but O(n) in the worst case unless a self-balancing tree (such as an AVL or Red-Black tree) is used, which is considerably more complex to implement than a heap.

Heaps provide a consistent O(log n) performance for both insertion and extraction, striking an excellent balance that makes them ideal for a vast range of applications where frequent additions and removals are expected.

Space Complexity

- Space Complexity: O(n)

  - A heap implemented using an array stores n elements, requiring space proportional to the number of elements.

  - This is generally efficient as it uses contiguous memory and doesn't incur the overhead of storing explicit pointers for tree nodes, as would be the case for a linked tree structure.

The efficient memory usage and predictable performance make heaps a cornerstone data structure for high-performance computing and algorithm design.

Real-World Applications and Use Cases

The practical utility of heap-based priority queues extends across numerous domains in computer science and engineering. Their ability to consistently provide the highest (or lowest) priority element makes them indispensable for optimization, scheduling, and graph traversal.

Graph Algorithms (Dijkstra's, Prim's)

Perhaps the most famous application is Dijkstra's algorithm, which finds the shortest path from a single source to all other vertices in a weighted graph with non-negative edge weights and relies heavily on a min-priority queue. Each vertex is associated with a tentative distance (its priority). The algorithm repeatedly extracts the unvisited vertex with the smallest tentative distance from the priority queue, relaxes its neighbors' distances, and updates their priorities in the queue. With a binary heap the algorithm runs in O((V + E) log V) time; with a plain array scanned for the minimum it takes O(V^2), which is far slower on sparse graphs.

Similarly, Prim's algorithm for finding a minimum spanning tree (MST) also uses a min-priority queue. It maintains a set of vertices already in the MST and a priority queue of edges connecting these vertices to unvisited ones, prioritizing edges with minimum weight.

Graph Representation:

Vertices: A, B, C, D, E

Edges: (A,B,4), (A,C,2), (B,E,3), (C,D,2), (D,E,3)

Source vertex: A

In Dijkstra's, the priority queue would store (distance, vertex), and we'd always extract the (min_distance, vertex).
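A minimal sketch of Dijkstra's algorithm on the example graph, using Python's `heapq`, might look like this. Two assumptions are made here that the text does not state: the edges are treated as undirected, and instead of updating priorities in place the code pushes a fresh entry and skips stale ones on extraction (often called lazy deletion), because `heapq` has no decrease-key operation.

```python
import heapq


def dijkstra(edges, source):
    """Return a dict of shortest distances from source to each reachable vertex."""
    graph = {}
    for u, v, w in edges:
        graph.setdefault(u, []).append((v, w))
        graph.setdefault(v, []).append((u, w))   # assumption: undirected edges

    dist = {source: 0}
    pq = [(0, source)]                           # entries are (distance, vertex)
    while pq:
        d, u = heapq.heappop(pq)                 # extract (min_distance, vertex)
        if d > dist.get(u, float("inf")):
            continue                             # stale entry, a shorter path was found
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):   # relax the edge
                dist[v] = nd
                heapq.heappush(pq, (nd, v))
    return dist


edges = [("A", "B", 4), ("A", "C", 2), ("B", "E", 3), ("C", "D", 2), ("D", "E", 3)]
distances = dijkstra(edges, "A")
# distances == {"A": 0, "B": 4, "C": 2, "D": 4, "E": 7}
```

Lazy deletion lets the queue hold up to one entry per edge, so it trades some extra memory for not needing a position map.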

Event Simulation

Discrete event simulation (DES) models the operation of a system as a discrete sequence of events in time. A priority queue is crucial for managing these events. Each event is placed into the priority queue with its scheduled occurrence time as its priority. The simulation engine then repeatedly extracts the event with the earliest time, executes it, and possibly generates new events that are then added back to the queue. This ensures that events are processed in chronological order. Examples include simulating queues in a bank, traffic flow, or network protocols.
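The event loop described above can be sketched in a few lines. Everything specific in this example is made up for illustration: the function name `run_simulation`, the event names, and the rule that each arrival schedules a departure 2.0 time units later.

```python
import heapq


def run_simulation(initial_events, end_time):
    """Process (time, name) events in chronological order until end_time."""
    queue = list(initial_events)
    heapq.heapify(queue)                      # build the event queue in O(n)
    log = []
    while queue:
        time, name = heapq.heappop(queue)     # earliest event first
        if time > end_time:
            break
        log.append((time, name))
        if name == "arrival":
            # Hypothetical rule: handling an arrival schedules a later departure.
            heapq.heappush(queue, (time + 2.0, "departure"))
    return log


log = run_simulation([(5.0, "arrival"), (1.0, "arrival")], end_time=10.0)
# log == [(1.0, "arrival"), (3.0, "departure"), (5.0, "arrival"), (7.0, "departure")]
```

Even though the events were supplied out of order and new ones were generated along the way, the heap ensures they are processed chronologically.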

Task Scheduling

Operating systems, especially real-time operating systems (RTOS), and job schedulers often use priority queues to manage processes or tasks. Each task is assigned a priority, and the scheduler extracts the highest-priority task ready for execution. This allows critical tasks to preempt less important ones, ensuring responsiveness and meeting deadlines. Similarly, cloud computing platforms might use priority queues to schedule virtual machine deployments or resource allocations based on user-defined priorities or service-level agreements.

Data Compression (Huffman Coding)

Huffman coding is a widely used algorithm for lossless data compression. It constructs a binary tree where frequently occurring characters have shorter binary codes. The construction of this Huffman tree involves a min-priority queue. Initially, each character is treated as a leaf node with its frequency as its priority. The algorithm repeatedly extracts the two nodes with the lowest frequencies from the priority queue, merges them into a new internal node whose frequency is the sum of its children's frequencies, and inserts this new node back into the priority queue. This process continues until only one node (the root of the Huffman tree) remains.
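The merge loop can be sketched with `heapq` as follows. The function name `huffman_codes`, the use of nested tuples for internal nodes, and the sequence counter used to break frequency ties are choices made for this illustration. The input is assumed to be a string, so every symbol is a single character.

```python
import heapq
from collections import Counter
from itertools import count


def huffman_codes(text: str) -> dict:
    """Build a Huffman code table (symbol -> bit string) for the characters in text."""
    freq = Counter(text)
    tiebreak = count()   # unique counter so tree nodes are never compared directly
    heap = [(f, next(tiebreak), sym) for sym, f in freq.items()]
    heapq.heapify(heap)
    if not heap:
        return {}
    if len(heap) == 1:                       # a single symbol still needs one bit
        return {heap[0][2]: "0"}

    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)    # two lowest-frequency nodes
        f2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (f1 + f2, next(tiebreak), (left, right)))

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):          # internal node: (left, right)
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:                                # leaf: a single character
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


codes = huffman_codes("aaaabbc")
# The most frequent symbol "a" gets the shortest code.
```

The exact bit patterns depend on how ties are broken, but the code lengths follow the frequencies: more frequent symbols never get longer codes than rarer ones.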

Operating Systems (Process Management)

Beyond task scheduling, operating systems also employ priority queues for managing various internal resources. For instance, managing I/O requests where certain processes or device operations have higher precedence, or in memory management where certain memory blocks might be prioritized for eviction or allocation. The core principle remains selecting the "best" candidate according to defined criteria.

These examples underscore the versatility and foundational importance of heap-based priority queues, from fundamental algorithms to complex system architectures.

Alternative Implementations and Why Heaps Excel

While heaps offer an excellent balance of performance, it's insightful to consider alternative ways to implement a priority queue and understand why heaps are often preferred.

Unsorted Array/List

- Insertion: O(1). Simply append the new element to the end of the array or list.

- Extract-Min/Max: O(n). To find the minimum or maximum element, you must traverse the entire array/list. Once found, removing it can also take O(n) if elements need to be shifted.

- Why Heaps Excel: While insertion is faster, the O(n) for extraction quickly becomes a bottleneck for large datasets or frequent extractions. Heaps reduce this to O(log n), making them far more scalable.

Sorted Array/List

- Insertion: O(n). To maintain sorted order, a new element must be inserted at its correct position, potentially requiring shifting all subsequent elements.

- Extract-Min/Max: O(1). The minimum/maximum element is always at one end of the sorted array/list, and removal is trivial.

- Why Heaps Excel: A sorted list suits scenarios with many extractions and few insertions. However, if insertions are frequent, the O(n) cost quickly outweighs the O(1) extraction benefit. Heaps balance both operations at O(log n).

Binary Search Tree (BST)

- Insertion: O(h), where h is the height of the tree. In the average case, h = O(log n), but in the worst case (e.g., inserting elements in sorted order), h = O(n).

- Extract-Min/Max: O(h). Finding the minimum (leftmost node) or maximum (rightmost node) and then deleting it takes time proportional to the height.

- Why Heaps Excel: While a BST can also provide O(log n) average-case performance, its worst case can be O(n). To guarantee O(log n), one must use a self-balancing BST (like an AVL tree or Red-Black tree), which is significantly more complex to implement and maintain than a simple heap. Heaps provide guaranteed O(log n) performance for priority queue operations with simpler code and less overhead. Furthermore, for priority-queue-specific tasks (extract min/max), heaps are generally faster in practice due to better cache locality with array-based storage.

The key takeaway is that heaps offer a near-optimal balance for the core priority queue operations—insertion and extraction—both performing in logarithmic time. This makes them a superior choice for dynamic scenarios where elements are frequently added and removed, requiring consistent performance. The simplicity of their array-based implementation further enhances their appeal over more complex balanced tree structures for this specific problem.

Advanced Heap Variations and Optimizations

While the binary heap is the most common and practical choice for implementing priority queues, researchers have developed several advanced heap structures to optimize specific operations, particularly for very large datasets or specialized algorithms. These variations often offer better asymptotic performance for certain operations, though usually at the cost of increased complexity.

Fibonacci Heaps

Fibonacci heaps are a more sophisticated heap structure primarily used in theoretical computer science and for implementing graph algorithms on very dense graphs (where the number of edges is close to the square of the number of vertices). They offer improved amortized time complexity for some operations:

- Insertion: O(1) amortized

- Extract-Min: O(log n) amortized

- Decrease-Key: O(1) amortized

- Merge: O(1) amortized

The O(1) amortized decrease-key operation is particularly valuable for algorithms like Dijkstra's and Prim's on dense graphs, where many decrease-key operations occur. While theoretically superior, their high constant factors and implementation complexity mean they are rarely used in general-purpose programming contexts outside of specific research or high-performance library implementations.

Binomial Heaps

Binomial heaps are another collection of specialized heaps that support efficient merging of two heaps, a feature not natively efficient in binary heaps (which would require inserting all elements from one into another).

- Insertion: O(1) amortized

- Extract-Min: O(log n)

- Decrease-Key: O(log n)

- Merge: O(log n)

They are often implemented as a forest of binomial trees, where each tree satisfies the min-heap property. Binomial heaps find niches where frequent merging of priority queues is a dominant operation.

Pairing Heaps

Pairing heaps are a relatively simple-to-implement heap structure that often performs very well in practice, even though their worst-case theoretical bounds are not as strong as Fibonacci heaps. They are "pairing" heaps because they rely on pairwise comparisons and merging of sub-heaps.

- Insertion: O(1) amortized

- Extract-Min: O(log n) amortized

- Decrease-Key: O(log n) amortized is a proven upper bound; the tight bound is still an open problem, although it is known that it cannot be O(1) amortized

Despite some open questions regarding their precise theoretical bounds, pairing heaps are considered a good practical choice when decrease-key operations are frequent and a simpler implementation than Fibonacci heaps is desired. They strike a balance between theoretical elegance and practical efficiency.

For most general-purpose applications, the binary heap remains the go-to choice due to its simplicity, robust performance, and excellent cache locality. However, awareness of these advanced variants highlights the depth of research in optimizing priority queue performance for specialized computational challenges.

Best Practices and Considerations

When implementing or utilizing heap-based priority queues, a few best practices and considerations can optimize their use and ensure robust performance.

Language-Specific Implementations

Many programming languages offer built-in or standard library modules for heaps or priority queues, often implemented as binary heaps:

- Python: The heapq module provides an implementation of the heap queue algorithm. It treats a regular Python list as a min-heap, meaning it's not a separate data type but a set of functions that operate on lists to maintain the heap property. This makes it very flexible.

- Java: The java.util.PriorityQueue class implements a min-priority queue by default (a max-priority queue can be achieved with a custom Comparator).

- C++: The std::priority_queue in the Standard Template Library (STL) is a container adapter that provides a max-priority queue by default. It can be customized to be a min-priority queue using a std::greater comparator.

Leveraging these well-tested and optimized built-in solutions is almost always preferable to writing a custom heap implementation from scratch, unless specific non-standard features or extreme performance tuning are required.

Handling Duplicate Priorities

Heaps handle duplicate priorities without issue. If multiple elements share the highest priority, any one of them may be extracted first. Binary heaps are not stable, so which one comes out depends on the specific implementation and the history of operations. If a strict tie-breaking rule is needed (e.g., FIFO among same-priority items), additional logic is required, such as storing an insertion sequence number or timestamp as a secondary priority.
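One common way to get FIFO order among equal priorities is to add a monotonically increasing sequence number as a secondary key. The sketch below wraps `heapq` for that purpose; the class name `StablePriorityQueue` is an assumption made for this example.

```python
import heapq
from itertools import count


class StablePriorityQueue:
    """Min-priority queue that returns equal-priority items in insertion order."""

    def __init__(self):
        self._heap = []
        self._seq = count()   # insertion counter used as the tie-breaker

    def push(self, priority, item):
        # Tuples compare element by element, so ties on priority fall
        # through to the sequence number and never reach the item itself.
        heapq.heappush(self._heap, (priority, next(self._seq), item))

    def pop(self):
        _priority, _seq, item = heapq.heappop(self._heap)
        return item

    def __len__(self):
        return len(self._heap)


q = StablePriorityQueue()
q.push(1, "first")
q.push(0, "urgent")
q.push(1, "second")
order = [q.pop() for _ in range(len(q))]
# order == ["urgent", "first", "second"]
```

A side benefit of the counter is that items which cannot be compared with each other (for example, dictionaries) can still be stored, since the comparison never reaches them.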

Immutability of Priorities

For decrease-key or increase-key operations to be efficient (O(log n)), you typically need a way to quickly find an element's current position in the heap. If your elements are complex objects, you might need an auxiliary data structure (like a hash map) to map the object to its current index in the heap array. Modifying an element's priority without updating its position can violate the heap property and lead to incorrect behavior.

Fixed-Size Heaps (Top K Problems)

For problems requiring the "top K" smallest or largest elements, a fixed-size heap can be used. For the top K largest, maintain a min-heap of size K. Iterate through the input: if an element is larger than the heap's root, remove the root and insert the new element. If it's smaller, ignore it. This keeps the heap size to K, and its root will be the K-th largest element (and all elements in the heap will be the top K largest). This pattern is very common in competitive programming and data processing.
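The top-K pattern can be sketched with `heapq` as follows. The function name `top_k_largest` is chosen for this illustration; `heapq.heapreplace` pops the root and pushes the new value in a single step.

```python
import heapq


def top_k_largest(values, k):
    """Return the k largest values in descending order using a size-k min-heap."""
    if k <= 0:
        return []
    heap = []
    for v in values:
        if len(heap) < k:
            heapq.heappush(heap, v)       # still filling the heap
        elif v > heap[0]:
            heapq.heapreplace(heap, v)    # evict the current k-th largest
        # otherwise v cannot be among the top k, so ignore it
    return sorted(heap, reverse=True)


result = top_k_largest([5, 1, 9, 3, 7, 8], 3)
# result == [9, 8, 7]
```

This processes n elements in O(n log K) time with O(K) extra space. Python's standard library also offers `heapq.nlargest(k, values)`, which covers the same use case.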

By understanding these practical considerations, developers can effectively utilize heap-based priority queues to solve a wide array of problems efficiently and robustly.

Conclusion

The journey into implementing heaps for efficient priority queues reveals a powerful and elegant solution to a ubiquitous problem in computer science. From the fundamental principles of the heap property and array-based representation to the detailed mechanics of insert and extract-min operations, we've seen how heaps consistently deliver logarithmic time complexity, a critical advantage over simpler data structures.

Their consistent O(log n) performance for core operations, coupled with efficient space utilization, makes them the de facto standard for priority queue implementations. This efficiency is not merely theoretical; it underpins the practical viability of crucial algorithms like Dijkstra's for shortest paths, Prim's for minimum spanning trees, and is essential for effective task scheduling, event simulation, and data compression techniques like Huffman coding.

While advanced heap variants offer specialized optimizations for particular scenarios, the binary heap remains the workhorse, balancing simplicity with high performance. By understanding and effectively leveraging heaps, developers gain a potent tool for optimizing algorithms, managing dynamic data, and building highly responsive and efficient systems. The mastery of this data structure is undoubtedly a valuable asset for any tech-savvy individual navigating the complexities of modern computing.

Frequently Asked Questions

Q: What is a heap?

A: A heap is a specialized tree-based data structure that satisfies two main properties: the heap property (defining the parent-child ordering) and the shape property (being a complete binary tree). It's typically implemented using an array, which allows for efficient calculation of parent and child indices.

Q: Why are heaps efficient for priority queues?

A: Heaps are efficient for priority queues because they offer a consistent O(log n) time complexity for both inserting new elements and extracting the highest (or lowest) priority element. This logarithmic performance significantly outperforms simpler data structures like unsorted or sorted arrays for dynamic scenarios with frequent additions and removals.

Q: What is a priority queue used for?

A: Priority queues have widespread applications, including implementing graph algorithms like Dijkstra's for shortest paths and Prim's for minimum spanning trees, managing tasks and processes in operating systems, scheduling events in simulations, and in data compression techniques such as Huffman coding.