How to Handle Database Normalization: A Practical Guide

Database management is the backbone of almost every modern application, and at its core lies the crucial concept of database normalization. For any tech professional involved in data architecture or development, understanding how to handle database normalization is not just beneficial but essential. This comprehensive guide walks you through structuring your databases efficiently, reducing data redundancy, and enhancing data integrity, so that your systems are both robust and scalable.

- What Is Database Normalization? The Cornerstone of Data Integrity

- The Normal Forms: A Deep Dive into Structured Data

- Denormalization: When to Break the Rules

- Normalization vs. Denormalization: Finding the Balance

- Practical Strategies for Implementing Normalization

- Common Pitfalls in Database Normalization and How to Avoid Them

- The Impact of Normalization on Database Performance and Scalability

- Frequently Asked Questions

- Further Reading & Resources

What Is Database Normalization? The Cornerstone of Data Integrity

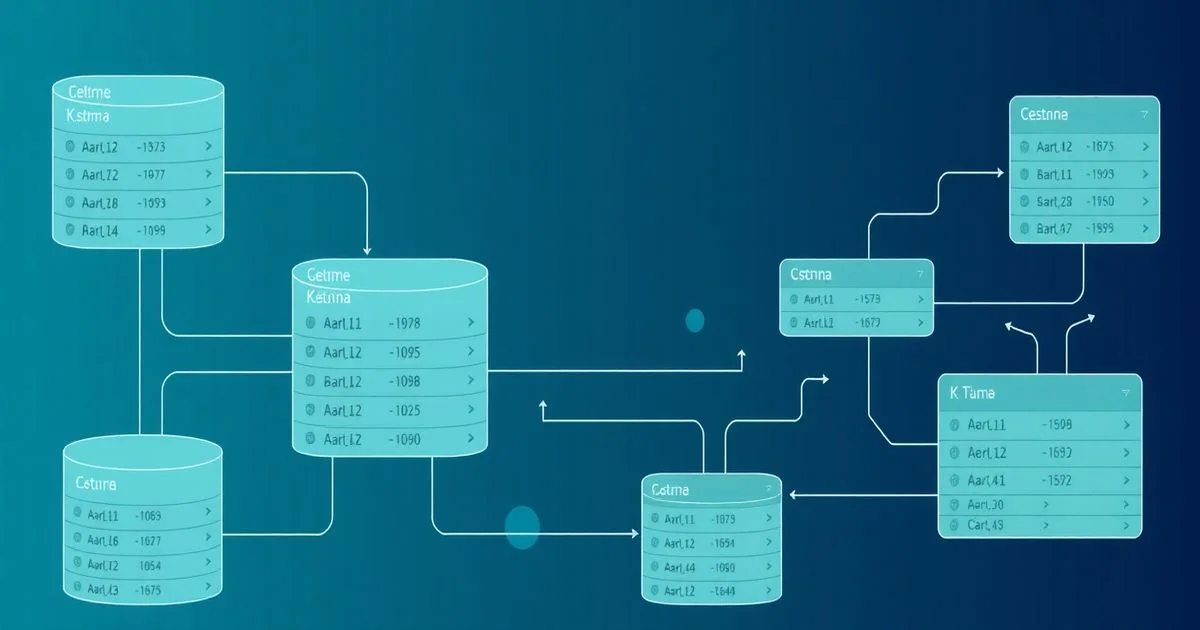

Database normalization is a systematic approach to organizing the fields and tables of a relational database. Its primary goals are to reduce data redundancy (storing the same piece of information multiple times) and improve data integrity (ensuring data is accurate and consistent across the database). Imagine a library where every book record included the author's full biography each time one of their books was listed. This would be incredibly redundant and make updates a nightmare. Normalization solves this by creating a separate 'Authors' table and linking to it from the 'Books' table.

This process involves breaking down a large table into smaller, more manageable tables and defining relationships between them. These relationships are typically established using primary and foreign keys. By adhering to a set of rules known as "normal forms," you can minimize anomalies (update, insertion, and deletion anomalies) that can arise from poorly structured databases. It’s about building a solid, logical foundation for your data, much like an architect carefully plans the layout of a building before construction begins.

The foundational idea is to ensure that each piece of information is stored in only one place. This makes the database more efficient, easier to maintain, and less prone to errors. For instance, if an author changes their name, you'd only need to update it in one central 'Authors' table, rather than sifting through potentially hundreds or thousands of 'Books' records. This principle is vital for any application that relies on consistent and reliable data.

The Normal Forms: A Deep Dive into Structured Data

Database normalization is achieved by progressing through a series of "normal forms," each imposing stricter rules to eliminate specific types of data redundancy and inconsistency. While there are six widely recognized normal forms (1NF, 2NF, 3NF, BCNF, 4NF, 5NF), the first three, along with Boyce-Codd Normal Form (BCNF), are the most commonly applied in practical database design. Understanding each step is crucial to designing robust systems.

First Normal Form (1NF)

1NF is the most basic level of normalization and sets the fundamental rules for structuring a table. A table is in 1NF if it satisfies two main conditions:

- Atomic Values: Each column must contain atomic (indivisible) values. This means you shouldn't store multiple values in a single cell. For example, a "Phone Numbers" column should not contain "123-4567, 987-6543". Instead, each phone number should be stored in its own row, typically in a separate related table.

- No Repeating Groups: There should be no repeating groups of columns. For instance, instead of Phone1, Phone2, Phone3 columns, each phone number should be in a separate row, or in a separate related table.

Why it matters: 1NF ensures that each row-column intersection contains only one value, making the data easier to query, manipulate, and manage. It eliminates the ambiguity of multi-valued attributes and sets the stage for further normalization. Without 1NF, the database cannot see the individual values inside a cell: a question such as "which students take Physics?" requires string parsing instead of an equality test, and foreign keys or uniqueness constraints cannot be enforced on each value.

Example: Before 1NF

Consider a Students table that stores student information and their enrolled courses:

StudentID | StudentName | CoursesEnrolled
--- | --- | ---
1 | Alice | Math, Physics
2 | Bob | Chemistry
3 | Charlie | History, English, Art

Here, the CoursesEnrolled column contains multiple values, violating the atomic values rule. Splitting it into Course1, Course2, and so on would instead create a repeating group.

Example: After 1NF

To bring this table into 1NF, we would separate the courses into individual rows:

StudentID | StudentName | CourseName
--- | --- | ---
1 | Alice | Math
1 | Alice | Physics
2 | Bob | Chemistry
3 | Charlie | History
3 | Charlie | English
3 | Charlie | Art

Now each row contains a single, atomic course name. The combination of StudentID and CourseName can serve as a composite primary key, uniquely identifying each enrollment. This step repeats StudentName on every enrollment row; that redundancy is removed in Second Normal Form.
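The conversion above can be sketched in Python with the standard-library sqlite3 module. This is a minimal illustration, assuming SQLite as the database (the article does not name one) and assuming the raw data arrives as comma-separated strings; the table name Enrollments1NF and the helper to_1nf are hypothetical.

```python
import sqlite3

# Pre-1NF rows: CoursesEnrolled packs several values into one cell.
raw_students = [
    (1, "Alice", "Math, Physics"),
    (2, "Bob", "Chemistry"),
    (3, "Charlie", "History, English, Art"),
]


def to_1nf(rows):
    """Split each multi-valued cell into one row per atomic course name."""
    for student_id, name, courses in rows:
        for course in courses.split(","):
            yield (student_id, name, course.strip())


conn = sqlite3.connect(":memory:")
# The composite primary key (StudentID, CourseName) identifies each enrollment.
conn.execute(
    """CREATE TABLE Enrollments1NF (
           StudentID   INTEGER NOT NULL,
           StudentName TEXT    NOT NULL,
           CourseName  TEXT    NOT NULL,
           PRIMARY KEY (StudentID, CourseName)
       )"""
)
conn.executemany("INSERT INTO Enrollments1NF VALUES (?, ?, ?)", to_1nf(raw_students))

# Questions about a single course become plain equality tests, no string parsing.
physics_students = [
    row[0]
    for row in conn.execute(
        "SELECT StudentName FROM Enrollments1NF WHERE CourseName = ?", ("Physics",)
    )
]
print(physics_students)  # ['Alice']
```

Once every cell holds one value, the composite primary key also prevents the same enrollment from being inserted twice.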

Second Normal Form (2NF)

A table is in 2NF if it meets the requirements of 1NF AND all non-key attributes are fully functionally dependent on the primary key. This rule only matters for tables with a composite key (a key made up of two or more columns): a 1NF table whose candidate keys are all single columns is automatically in 2NF.

Explanation of Functional Dependency:

An attribute B is functionally dependent on attribute A if each value of A determines exactly one value of B; that is, any two rows that agree on A must also agree on B. We write this as A -> B.
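The definition translates directly into a check over sample rows: A -> B is violated as soon as two rows agree on A but disagree on B. The sketch below is illustrative only; fd_holds is a hypothetical helper, rows are assumed to be Python dicts keyed by column name, and the sample rows are borrowed from the enrollment example in this section.

```python
from typing import Iterable, Mapping, Sequence


def fd_holds(rows: Iterable[Mapping[str, object]],
             lhs: Sequence[str], rhs: Sequence[str]) -> bool:
    """Return False if some two rows agree on lhs but differ on rhs."""
    seen = {}  # lhs value -> first rhs value observed for it
    for row in rows:
        key = tuple(row[col] for col in lhs)
        value = tuple(row[col] for col in rhs)
        # setdefault stores the first rhs value; a later mismatch refutes the FD.
        if seen.setdefault(key, value) != value:
            return False
    return True


enrollments = [
    {"StudentID": 1, "StudentName": "Alice", "CourseName": "Math", "CourseCredits": 3},
    {"StudentID": 1, "StudentName": "Alice", "CourseName": "Physics", "CourseCredits": 4},
    {"StudentID": 2, "StudentName": "Bob", "CourseName": "Chemistry", "CourseCredits": 3},
]

print(fd_holds(enrollments, ["CourseName"], ["CourseCredits"]))  # True
print(fd_holds(enrollments, ["StudentID"], ["CourseName"]))      # False
```

A check like this can only refute a dependency. Whether A -> B truly holds is a business rule, so a True result on sample data means "not contradicted yet", not "proven".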

Explanation of Partial Dependency:

A partial dependency occurs when a non-key attribute depends on only part of a composite primary key. If (A, B) is a composite primary key and C is a non-key attribute, then (A, B) -> C always holds; it is a full functional dependency only if neither A nor B alone determines C. If A -> C holds (C depends only on A, a part of the primary key), the dependency is partial.

Why it matters: Eliminating partial dependencies reduces redundancy and the risk of update anomalies. If a non-key attribute depends only on part of the primary key, it suggests that information about that part of the key is being repeated for every instance of the full key.

Example: Before 2NF

Using the 1NF Students table from before, let's add InstructorName and CourseCredits for each course:

StudentID | StudentName | CourseName | InstructorName | CourseCredits
--- | --- | --- | --- | ---
1 | Alice | Math | Mr. Smith | 3
1 | Alice | Physics | Ms. Johnson | 4
2 | Bob | Chemistry | Dr. Davis | 3
3 | Charlie | History | Dr. White | 3
3 | Charlie | English | Ms. Miller | 3
3 | Charlie | Art | Mr. Brown | 2

Here, the composite primary key is (StudentID, CourseName).

- CourseCredits depends only on CourseName (part of the primary key), not on StudentID. This is a partial dependency: CourseName -> CourseCredits.

- StudentName depends only on StudentID (part of the primary key), not on CourseName. This is also a partial dependency: StudentID -> StudentName.

- InstructorName depends only on CourseName (assuming each course has a single instructor). This is a partial dependency: CourseName -> InstructorName.

Example: After 2NF

To achieve 2NF, we need to decompose the table into multiple tables, removing the partial dependencies.

Students Table:

StudentID | StudentName
--- | ---
1 | Alice
2 | Bob
3 | Charlie

(Here, StudentName is fully dependent on StudentID, which is its primary key)

Courses Table:

CourseName | InstructorName | CourseCredits
--- | --- | ---
Math | Mr. Smith | 3
Physics | Ms. Johnson | 4
Chemistry | Dr. Davis | 3
History | Dr. White | 3
English | Ms. Miller | 3
Art | Mr. Brown | 2

(Here, InstructorName and CourseCredits are fully dependent on CourseName, which is its primary key)

Enrollments Table (Junction Table):

StudentID | CourseName
--- | ---
1 | Math
1 | Physics
2 | Chemistry
3 | History
3 | English
3 | Art

(The table has no non-key attributes; its composite primary key (StudentID, CourseName) simply records each enrollment once, so it is trivially in 2NF.)

Now, all non-key attributes in each table are fully dependent on their respective primary keys. If Alice changes her name, it's updated only in the Students table. If the credits for Math change, it's updated only in the Courses table.
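A minimal sketch of the 2NF design, again assuming SQLite via Python's sqlite3 module (an assumption; the article does not name an engine). It shows that changing Math's credits (a hypothetical update to 4) touches exactly one row, and that a three-way join rebuilds the original wide table on demand.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Students (
        StudentID   INTEGER PRIMARY KEY,
        StudentName TEXT NOT NULL
    );
    CREATE TABLE Courses (
        CourseName     TEXT PRIMARY KEY,
        InstructorName TEXT NOT NULL,
        CourseCredits  INTEGER NOT NULL
    );
    -- Junction table: one row per enrollment, no non-key attributes.
    CREATE TABLE Enrollments (
        StudentID  INTEGER NOT NULL REFERENCES Students (StudentID),
        CourseName TEXT    NOT NULL REFERENCES Courses (CourseName),
        PRIMARY KEY (StudentID, CourseName)
    );
    INSERT INTO Students VALUES (1, 'Alice'), (2, 'Bob'), (3, 'Charlie');
    INSERT INTO Courses VALUES
        ('Math', 'Mr. Smith', 3), ('Physics', 'Ms. Johnson', 4),
        ('Chemistry', 'Dr. Davis', 3), ('History', 'Dr. White', 3),
        ('English', 'Ms. Miller', 3), ('Art', 'Mr. Brown', 2);
    INSERT INTO Enrollments VALUES
        (1, 'Math'), (1, 'Physics'), (2, 'Chemistry'),
        (3, 'History'), (3, 'English'), (3, 'Art');
    """
)

# A change to Math's credits touches exactly one row.
conn.execute("UPDATE Courses SET CourseCredits = 4 WHERE CourseName = 'Math'")

# Joining the three tables rebuilds the original wide view on demand.
wide_view = conn.execute(
    """SELECT s.StudentID, s.StudentName, c.CourseName,
              c.InstructorName, c.CourseCredits
       FROM Enrollments e
       JOIN Students s ON s.StudentID = e.StudentID
       JOIN Courses c ON c.CourseName = e.CourseName
       ORDER BY s.StudentID, c.CourseName"""
).fetchall()

for row in wide_view:
    print(row)
```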

Third Normal Form (3NF)

A table is in 3NF if it is in 2NF AND there are no transitive dependencies of non-key attributes on the primary key. A transitive dependency occurs when a non-key attribute is indirectly dependent on the primary key through another non-key attribute.

Explanation of Transitive Dependency:

If A -> B and B -> C, and B does not determine A (B is not a key), then A -> C holds transitively, through B. In the context of 3NF, this means a non-key attribute C depends on another non-key attribute B, which in turn depends on the primary key A.

Why it matters: Eliminating transitive dependencies further reduces data redundancy and prevents update anomalies. Storing information that can be derived from other non-key attributes within the same table leads to inconsistent data if not managed carefully.

Example: Before 3NF

Let's refine our Courses table from the 2NF example by adding DepartmentName and DepartmentHead for each course. Assume each course belongs to a department, and each department has a single head.

CourseName | InstructorName | CourseCredits | DepartmentName | DepartmentHead
--- | --- | --- | --- | ---
Math | Mr. Smith | 3 | Mathematics | Dr. Euler
Physics | Ms. Johnson | 4 | Physics | Dr. Curie
Chemistry | Dr. Davis | 3 | Chemistry | Dr. Lavoisier
History | Dr. White | 3 | Humanities | Dr. Hobbes
English | Ms. Miller | 3 | Humanities | Dr. Hobbes
Art | Mr. Brown | 2 | Arts | Dr. Monet

The primary key is CourseName.

- CourseName -> DepartmentName (a course determines its department).

- DepartmentName -> DepartmentHead (a department determines its head).

- Therefore, CourseName -> DepartmentHead is a transitive dependency through DepartmentName. DepartmentHead is a non-key attribute that depends on another non-key attribute (DepartmentName), which in turn depends on the primary key (CourseName).

Example: After 3NF

To bring this into 3NF, we extract the transitive dependency into a new table:

Courses Table:

CourseName | InstructorName | CourseCredits | DepartmentName
--- | --- | --- | ---
Math | Mr. Smith | 3 | Mathematics
Physics | Ms. Johnson | 4 | Physics
Chemistry | Dr. Davis | 3 | Chemistry
History | Dr. White | 3 | Humanities
English | Ms. Miller | 3 | Humanities
Art | Mr. Brown | 2 | Arts

Departments Table:

DepartmentName | DepartmentHead
--- | ---
Mathematics | Dr. Euler
Physics | Dr. Curie
Chemistry | Dr. Lavoisier
Humanities | Dr. Hobbes
Arts | Dr. Monet

Now, the Courses table has no transitive dependencies. InstructorName, CourseCredits, and DepartmentName are directly dependent on CourseName. DepartmentHead is directly dependent on DepartmentName in the Departments table. This structure is more efficient, as DepartmentHead information is stored only once per department, regardless of how many courses that department offers.
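The practical payoff of the 3NF design, sketched with SQLite via Python's sqlite3 (an assumption; any relational engine behaves similarly). The new Humanities head, "Dr. Locke", is a hypothetical value used only to show that one UPDATE reaches every course in the department.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Departments (
        DepartmentName TEXT PRIMARY KEY,
        DepartmentHead TEXT NOT NULL
    );
    CREATE TABLE Courses (
        CourseName     TEXT PRIMARY KEY,
        InstructorName TEXT NOT NULL,
        CourseCredits  INTEGER NOT NULL,
        DepartmentName TEXT NOT NULL REFERENCES Departments (DepartmentName)
    );
    INSERT INTO Departments VALUES
        ('Mathematics', 'Dr. Euler'), ('Physics', 'Dr. Curie'),
        ('Chemistry', 'Dr. Lavoisier'), ('Humanities', 'Dr. Hobbes'),
        ('Arts', 'Dr. Monet');
    INSERT INTO Courses VALUES
        ('Math', 'Mr. Smith', 3, 'Mathematics'),
        ('Physics', 'Ms. Johnson', 4, 'Physics'),
        ('Chemistry', 'Dr. Davis', 3, 'Chemistry'),
        ('History', 'Dr. White', 3, 'Humanities'),
        ('English', 'Ms. Miller', 3, 'Humanities'),
        ('Art', 'Mr. Brown', 2, 'Arts');
    """
)

# New head for Humanities: one UPDATE, one row, so History and English cannot disagree.
cur = conn.execute(
    "UPDATE Departments SET DepartmentHead = ? WHERE DepartmentName = ?",
    ("Dr. Locke", "Humanities"),
)
print(cur.rowcount)  # 1

heads = dict(
    conn.execute(
        """SELECT c.CourseName, d.DepartmentHead
           FROM Courses c
           JOIN Departments d ON d.DepartmentName = c.DepartmentName
           WHERE d.DepartmentName = 'Humanities'"""
    ).fetchall()
)
print(heads)
```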

Boyce-Codd Normal Form (BCNF)

BCNF is a stricter version of 3NF. A table is in BCNF if it is in 3NF AND every determinant is a candidate key.

Explanation of Determinant:

A determinant is any attribute or set of attributes that determines another attribute. If A -> B, then A is a determinant. In 3NF, if A is a primary key and A -> B, that's fine. A 3NF table can still violate BCNF when an attribute (or set of attributes) that is not a candidate key determines part of some candidate key. This typically happens only when a table has several composite candidate keys that overlap.

Why it matters: BCNF addresses certain anomalies that 3NF can miss, particularly in tables with overlapping candidate keys. Because every determinant must be a candidate key, BCNF removes all redundancy caused by functional dependencies (redundancy caused by multi-valued and join dependencies is handled by 4NF and 5NF).

Example: Before BCNF (and after 3NF)

Consider a Students_Advisors_Subjects table where:

- StudentID uniquely identifies a student.

- AdvisorID uniquely identifies an advisor.

- A student can have multiple advisors, one for each subject they study.

- An advisor can advise multiple students.

- Each Student-Advisor pair is associated with exactly one Subject.

- An Advisor is expert in only one Subject.

This implies the following dependencies:

- (StudentID, Subject) -> AdvisorID (for a given subject, a student has exactly one advisor)

- AdvisorID -> Subject (an advisor is expert in one subject, so AdvisorID determines Subject)

- (StudentID, AdvisorID) -> Subject (a student-advisor pair determines a subject; this follows from AdvisorID -> Subject)

The table therefore has two overlapping candidate keys, (StudentID, AdvisorID) and (StudentID, Subject). Let (StudentID, AdvisorID) be the composite primary key.

StudentID | AdvisorID | Subject
--- | --- | ---
101 | A01 | Database
101 | A02 | Networking
102 | A01 | Database
103 | A03 | Operating Systems

This table is in 3NF. Every attribute belongs to at least one candidate key, and 2NF and 3NF only restrict how non-key attributes depend on keys. AdvisorID -> Subject may look like a partial dependency, but Subject is part of the candidate key (StudentID, Subject), so 3NF permits it.

However, it's not in BCNF because AdvisorID is a determinant (AdvisorID -> Subject), but AdvisorID is not a candidate key for the entire table. AdvisorID does not uniquely identify a row in the original table because multiple students can have the same advisor (e.g., A01 advises 101 and 102). This means that Subject is repeated for each student an AdvisorID advises.

Example: After BCNF

To achieve BCNF, we decompose the table:

Student_Advisors Table:

StudentID | AdvisorID
--- | ---
101 | A01
101 | A02
102 | A01
103 | A03

(Primary key: (StudentID, AdvisorID). No other determinants. This table is now in BCNF.)

Advisor_Subjects Table:

AdvisorID | Subject
--- | ---
A01 | Database
A02 | Networking
A03 | Operating Systems

(Primary key: AdvisorID. AdvisorID is a determinant, and it is a candidate key. This table is now in BCNF.)

This decomposition eliminates the redundancy of Subject being repeated for AdvisorID A01. If Advisor A01's subject changes from Database to Data Warehousing, it's updated in only one place.
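Checking BCNF mechanically comes down to attribute closure: a determinant X is a superkey of a table if everything X determines, directly or transitively, covers all of the table's columns. The sketch below is a simplified illustration with hypothetical helpers (closure, bcnf_violations, fd). It uses the AdvisorID -> Subject and (StudentID, AdvisorID) -> Subject dependencies from the example and only tests dependencies that fit entirely inside each table; a rigorous check of a decomposed table would first project every implied dependency onto it.

```python
from typing import FrozenSet, Iterable, List, Tuple

FD = Tuple[FrozenSet[str], FrozenSet[str]]  # (determinant, dependent attributes)


def closure(attrs: Iterable[str], fds: List[FD]) -> FrozenSet[str]:
    """Attribute closure: every attribute functionally determined by attrs."""
    result = set(attrs)
    changed = True
    while changed:
        changed = False
        for lhs, rhs in fds:
            if lhs <= result and not rhs <= result:
                result |= rhs
                changed = True
    return frozenset(result)


def bcnf_violations(schema: FrozenSet[str], fds: List[FD]) -> List[FD]:
    """Non-trivial FDs inside schema whose determinant is not a superkey."""
    violations = []
    for lhs, rhs in fds:
        if not (lhs | rhs) <= schema or rhs <= lhs:
            continue  # FD does not fit in this table, or is trivial
        if not schema <= closure(lhs, fds):
            violations.append((lhs, rhs))
    return violations


def fd(lhs: str, rhs: str) -> FD:
    """Build an FD from comma-separated column names, e.g. fd("A,B", "C")."""
    return frozenset(lhs.split(",")), frozenset(rhs.split(","))


fds = [fd("StudentID,AdvisorID", "Subject"), fd("AdvisorID", "Subject")]

original = frozenset({"StudentID", "AdvisorID", "Subject"})
student_advisors = frozenset({"StudentID", "AdvisorID"})
advisor_subjects = frozenset({"AdvisorID", "Subject"})

print(bcnf_violations(original, fds))  # [(frozenset({'AdvisorID'}), frozenset({'Subject'}))]
print(bcnf_violations(student_advisors, fds))  # []
print(bcnf_violations(advisor_subjects, fds))  # []
```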

Fourth Normal Form (4NF)

A table is in 4NF if it is in BCNF AND every non-trivial multi-valued dependency A ->-> B in it has a superkey as its determinant A. Informally, the table must not record two or more independent multi-valued facts about the same key.

Explanation of Multi-valued Dependency (MVD):

An MVD A ->-> B exists if each value of A is associated with a set of B values, and that set is independent of the values of the remaining attributes C. This often arises when a table attempts to represent two or more independent one-to-many relationships from the same key.

Why it matters: 4NF addresses scenarios where a table records multiple independent multi-valued facts about an entity. Without 4NF, these independent facts can interact in undesirable ways, leading to redundancy and anomalies, especially during insertions and deletions.

Example: Before 4NF

Consider a Course_Instructor_Textbook table:

CourseID | Instructor | Textbook
--- | --- | ---
CS101 | Smith | Data Structures Book 1
CS101 | Smith | Algorithms Book 1
CS101 | Jones | Data Structures Book 1
CS101 | Jones | Algorithms Book 1

Here, CourseID determines a set of instructors and a set of textbooks. These sets are independent.

- CS101 has instructors {Smith, Jones}

- CS101 has textbooks {Data Structures Book 1, Algorithms Book 1}

This implies two MVDs: CourseID ->-> Instructor and CourseID ->-> Textbook.

The issue is that if CS101 gets a new instructor, say Miller, we would have to add rows for (CS101, Miller, Data Structures Book 1) and (CS101, Miller, Algorithms Book 1). If CS101 gets a new textbook, say Book 3, we add rows for (CS101, Smith, Book 3) and (CS101, Jones, Book 3). This redundancy is due to the independent multi-valued facts.

Example: After 4NF

To achieve 4NF, we decompose the table into two separate tables:

Course_Instructors Table:

CourseID | Instructor
--- | ---
CS101 | Smith
CS101 | Jones

Course_Textbooks Table:

CourseID | Textbook
--- | ---
CS101 | Data Structures Book 1
CS101 | Algorithms Book 1

Each new table now represents a single multi-valued dependency, eliminating the redundancy and insertion/deletion anomalies caused by independent multi-valued facts sharing a single key.
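The decomposition is lossless: because the instructor set and the textbook set are independent, joining the two tables on CourseID regenerates exactly the original rows. A minimal sketch in plain Python, modeling each table as a set of tuples; natural_join is a hypothetical helper, not a library function.

```python
from itertools import product

course_instructors = {("CS101", "Smith"), ("CS101", "Jones")}
course_textbooks = {("CS101", "Data Structures Book 1"), ("CS101", "Algorithms Book 1")}


def natural_join(left, right):
    """Join (course, instructor) and (course, textbook) pairs on the course column."""
    return {
        (course, instructor, textbook)
        for (course, instructor), (other_course, textbook) in product(left, right)
        if course == other_course
    }


original = {
    ("CS101", "Smith", "Data Structures Book 1"),
    ("CS101", "Smith", "Algorithms Book 1"),
    ("CS101", "Jones", "Data Structures Book 1"),
    ("CS101", "Jones", "Algorithms Book 1"),
}
print(natural_join(course_instructors, course_textbooks) == original)  # True

# Adding instructor Miller is now one row, not one row per textbook.
course_instructors.add(("CS101", "Miller"))
print(len(natural_join(course_instructors, course_textbooks)))  # 6
```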

Fifth Normal Form (5NF)

Also known as Project-Join Normal Form (PJNF), 5NF is the highest of the classic normal forms. A table is in 5NF if it is in 4NF AND every non-trivial join dependency in it is implied by its candidate keys. A join dependency says that a table can be decomposed into smaller tables (projections) that, when joined back together, produce exactly the original table without spurious tuples (extra, incorrect rows). Such dependencies typically arise when a single table represents three or more interdependent multi-valued facts.

Why it matters: 5NF eliminates any remaining redundancy that might exist when a table describes relationships between three or more attributes that are not directly represented by 4NF. It ensures that data cannot be reconstructed incorrectly if the table is projected and rejoined in certain ways.

Example:

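5NF is extremely rare in practical applications and hard to illustrate without complex business rules. It deals with "many-to-many-to-many" relationships in which three or more entities participate in a single relationship. A 4NF table typically violates 5NF when it can be rebuilt without loss by joining three (or more) of its projections, but not by joining any two. A common example involves suppliers, parts, and projects. If a business rule states that whenever a supplier supplies a part, that part is used in a project, and the supplier supplies that project, then the supplier supplies that part to that project, the Supplier-Part-Project table can be split into three two-column tables (supplier-part, part-project, supplier-project) and rejoined without spurious rows. Without such a rule, the three-way relationship cannot be captured by pairs of relationships, and the single table is already in 5NF. Most practical designs stop at BCNF or 3NF due to the complexity and diminishing returns.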
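A small sketch makes the join dependency concrete. The supplier (S1, S2), part (P1, P2), and project (J1, J2) codes are hypothetical, and the data is assumed to obey a cyclic business rule: if supplier s supplies part p, part p is used in project j, and s supplies project j, then s supplies p to j. Under that rule, joining two projections (here supplier-part with part-project) produces a spurious row, while filtering by the third projection recovers the table exactly, which is what justifies splitting it into three two-column tables.

```python
# Rows (supplier, part, project) chosen so the cyclic rule holds.
spj = {("S1", "P1", "J2"), ("S1", "P2", "J1"), ("S2", "P1", "J1"), ("S1", "P1", "J1")}

# The three two-column projections.
sp = {(s, p) for s, p, _ in spj}
pj = {(p, j) for _, p, j in spj}
sj = {(s, j) for s, _, j in spj}

# Joining only two projections on the shared part column yields a spurious tuple.
two_way = {(s, p, j) for s, p in sp for p2, j in pj if p == p2}
print(two_way - spj)  # {('S2', 'P1', 'J2')}

# Joining with the third projection (on supplier and project) removes it.
three_way = {(s, p, j) for s, p, j in two_way if (s, j) in sj}
print(three_way == spj)  # True
```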

Denormalization: When to Break the Rules

While normalization is crucial for data integrity and reducing redundancy, it's not always the optimal solution for every database design problem. Denormalization is the intentional introduction of redundancy into a database, often by combining tables or adding duplicate data, in order to improve query performance.

Why Denormalize?

Normalized databases, by their nature, spread data across many tables. Retrieving comprehensive data often requires joining multiple tables. For applications with high read volumes, complex analytical queries (OLAP systems), or where response time is critical, performing numerous joins can be computationally expensive and slow. Denormalization reduces the number of joins required, thereby speeding up data retrieval.

Common Scenarios for Denormalization:

- Reporting and Data Warehousing (OLAP): These systems prioritize fast data retrieval for analytical queries over minimal redundancy. Redundant data (e.g., storing customer names in order tables) can eliminate expensive joins.

- Performance Optimization: When specific queries are bottlenecks, denormalizing small, frequently accessed lookup tables (like Country or ProductCategory) into larger transaction tables can significantly improve performance.

- Aggregated Data: Storing pre-calculated aggregates (e.g., total_sales for a month) directly in a table, rather than calculating them on the fly from detailed transaction records, can dramatically speed up reporting.

- User Interface Needs: Sometimes, a UI requires a combination of data that is naturally spread across multiple normalized tables. Denormalizing for a specific view can simplify the query for that view.

Drawbacks of Denormalization:

The primary trade-off is the reintroduction of redundancy, which brings back the risk of update, insertion, and deletion anomalies. Maintaining data consistency becomes more challenging and requires careful application logic or triggers to ensure that redundant data is kept synchronized. It also increases storage requirements.
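One way to keep such redundant data synchronized is a database trigger. A minimal sketch, assuming SQLite via Python's sqlite3 module; the Sales and MonthlySales tables and the trigger name are hypothetical, and only INSERT is handled, so a real system would also need UPDATE and DELETE triggers or a periodic rebuild.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Sales (
        SaleID   INTEGER PRIMARY KEY,
        SaleDate TEXT    NOT NULL,   -- ISO date, e.g. '2024-03-15'
        Amount   REAL    NOT NULL
    );
    -- Denormalized aggregate: one pre-computed total per month.
    CREATE TABLE MonthlySales (
        Month      TEXT PRIMARY KEY,  -- 'YYYY-MM'
        TotalSales REAL NOT NULL
    );
    -- Keep the redundant total in sync on every insert (inserts only).
    CREATE TRIGGER sales_after_insert AFTER INSERT ON Sales
    BEGIN
        INSERT OR IGNORE INTO MonthlySales (Month, TotalSales)
            VALUES (substr(NEW.SaleDate, 1, 7), 0);
        UPDATE MonthlySales
           SET TotalSales = TotalSales + NEW.Amount
         WHERE Month = substr(NEW.SaleDate, 1, 7);
    END;
    """
)

conn.executemany(
    "INSERT INTO Sales (SaleDate, Amount) VALUES (?, ?)",
    [("2024-03-01", 100.0), ("2024-03-15", 50.0), ("2024-04-02", 25.0)],
)

# Reports read one row per month instead of aggregating every transaction.
print(conn.execute("SELECT * FROM MonthlySales ORDER BY Month").fetchall())
# [('2024-03', 150.0), ('2024-04', 25.0)]
```

Comparing MonthlySales against a live GROUP BY over Sales is a cheap consistency check worth running periodically on any denormalized aggregate.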

Normalization vs. Denormalization: Finding the Balance

The decision to normalize or denormalize is a critical one in database design, requiring a careful balance between data integrity and performance. There's no one-size-fits-all answer; the optimal approach depends heavily on the specific application's requirements, workload characteristics, and future scalability needs.

When to Prioritize Normalization:

- Online Transaction Processing (OLTP) Systems: Systems characterized by frequent insertions, updates, and deletions (e.g., banking systems, e-commerce checkout) benefit immensely from normalization. It minimizes update anomalies, ensures data consistency, and reduces storage space for frequently modified data.

- High Data Integrity Requirements: When accuracy and consistency of data are paramount, normalization is the preferred choice. It reduces the chances of errors caused by redundant data that gets updated inconsistently.

- Evolving Data Models: Normalized schemas are generally more flexible and easier to extend or modify when business requirements change, as changes typically affect fewer tables.

When to Consider Denormalization:

- Read-Heavy Workloads (OLAP/Reporting): For data warehouses, business intelligence dashboards, or any application primarily focused on reading and analyzing large volumes of data, denormalization can provide significant performance gains.

- Complex Queries: If your application frequently executes queries that involve joining many tables, and these queries are impacting performance, selective denormalization might be beneficial.

- Specific Performance Bottlenecks: When profiling reveals that certain queries are unacceptably slow due to excessive joins, denormalize only the tables those queries touch and leave the rest of the schema normalized. Always measure the performance impact.

- Known Fixed Reporting Structures: If reports are well-defined and unlikely to change, denormalizing to match the report structure can optimize retrieval.

The Hybrid Approach:

Many real-world systems adopt a hybrid approach. They typically start with a highly normalized design to ensure data integrity, especially for transactional data. Then, for specific performance-critical areas, reporting modules, or data warehousing purposes, they might introduce controlled denormalization. This could involve:

- Materialized Views: Pre-computed tables that store the result of a complex query. These views are periodically refreshed to reflect changes in the underlying normalized tables.

- Summary Tables: Tables specifically designed to store aggregated data (e.g., daily sales totals) rather than individual transactions.

- Duplicating Lookup Data: Copying static, frequently accessed reference data (like product names or category descriptions) into transaction tables.

The key is to make informed decisions, backed by profiling and testing, rather than blindly applying one principle over the other. Understanding the trade-offs is essential for designing a database that is both robust and performant.

Practical Strategies for Implementing Normalization

Implementing normalization isn't just about knowing the rules; it's about applying them effectively throughout the database lifecycle. Here are practical strategies for applying normalization in your projects.

Start with a Normalized Design:

- Default to Normalization: Begin your database design with at least 3NF or BCNF. This establishes a strong foundation for data integrity. It's generally easier to denormalize later if performance issues arise than to normalize a poorly structured database after the fact.

- Data Modeling Tools: Utilize Entity-Relationship (ER) diagramming tools (e.g., Lucidchart, dbdiagram.io, draw.io) to visually represent your entities, attributes, and relationships. These tools help identify potential violations of normal forms early in the design process.

Identify Functional Dependencies:

- Understand Your Data: Before designing tables, thoroughly understand the data and the business rules governing it. This is the most crucial step for identifying functional dependencies. Ask questions like: "What uniquely identifies a customer?", "Does an order item depend on the whole order or just a product?", "Is any attribute determined by another non-key attribute?"

- Data Dictionary: Create a detailed data dictionary that defines each attribute, its domain, and its dependencies. This documentation is invaluable for both initial design and future maintenance.

Iterative Refinement:

- Start Simple, Refine Gradually: You don't have to jump straight to BCNF. Start by ensuring 1NF, then move to 2NF, and then 3NF. This iterative process helps in understanding the impact of each step.

- Review and Validate: Regularly review your schema with stakeholders and other developers. Peer review can catch normalization violations that you might have missed.

Use Surrogate Keys Judiciously:

- Simplify Primary Keys: For tables whose natural composite primary keys are long or complex, consider introducing a simple, auto-incrementing integer (surrogate key) as the primary key. The surrogate key simplifies foreign key relationships and indexing.

- Maintain Natural Key Uniqueness: Even with a surrogate key, ensure that the original candidate key (natural key) maintains its unique constraint to prevent duplicate logical entities.
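In SQL terms, the two points above amount to a surrogate primary key plus a UNIQUE constraint on the natural key. A minimal sketch assuming SQLite via Python's sqlite3, where an INTEGER PRIMARY KEY column is filled in automatically; the EnrollmentID column is hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Enrollments (
        EnrollmentID INTEGER PRIMARY KEY,   -- surrogate key, assigned automatically
        StudentID    INTEGER NOT NULL,
        CourseName   TEXT    NOT NULL,
        UNIQUE (StudentID, CourseName)      -- natural key stays unique
    );
    """
)

conn.execute("INSERT INTO Enrollments (StudentID, CourseName) VALUES (1, 'Math')")

try:
    # Same logical enrollment again: rejected even though it would get a new EnrollmentID.
    conn.execute("INSERT INTO Enrollments (StudentID, CourseName) VALUES (1, 'Math')")
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True

print(duplicate_rejected)  # True
```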

Documentation is Key:

- Schema Documentation: Document your database schema, including tables, columns, data types, primary keys, foreign keys, indexes, and especially the rationale behind your normalization choices (or denormalization).

- Dependency Mapping: Explicitly document the functional dependencies you identified. This helps future developers understand the data relationships and avoid introducing normalization violations.

Performance Monitoring and Tuning:

- Profile Your Queries: After initial deployment, monitor your database performance. Identify slow queries, especially those involving many joins.

- Consider Denormalization: If specific, high-priority queries are consistently slow due to over-normalization, strategically apply denormalization to those specific areas. This might involve creating materialized views or summary tables. Always measure the performance impact of denormalization changes.

- Indexing: Proper indexing can mitigate some of the performance overhead of normalized databases by speeding up joins and lookups without resorting to denormalization.
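Whether an index actually serves a lookup or join can be verified from the query plan. A minimal sketch, assuming SQLite via Python's sqlite3; the index name idx_enrollments_course is hypothetical, and the exact wording of EXPLAIN QUERY PLAN output differs across SQLite versions, so the code only looks for the index name.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Enrollments (
        StudentID  INTEGER NOT NULL,
        CourseName TEXT    NOT NULL,
        PRIMARY KEY (StudentID, CourseName)
    );
    -- The composite primary key leads with StudentID, so lookups by CourseName
    -- (the typical join direction from Courses) need their own index.
    CREATE INDEX idx_enrollments_course ON Enrollments (CourseName, StudentID);
    """
)

plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT StudentID FROM Enrollments WHERE CourseName = ?",
    ("Math",),
).fetchall()
# Each plan row ends with a human-readable detail string; its exact wording
# varies between SQLite versions, but it should name the index used.
details = " ".join(row[-1] for row in plan)
print(details)
```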

By following these practical strategies, you can build a well-normalized database that is resilient, consistent, and adaptable to changing business needs, while also being mindful of performance considerations.

Common Pitfalls in Database Normalization and How to Avoid Them

While normalization is a powerful tool, misapplication or misunderstanding can lead to its own set of problems. Being aware of common pitfalls is key to effectively implementing database design principles.

Over-Normalization:

- The Pitfall: Striving for 5NF or even 4NF for every table in an OLTP system can lead to an excessive number of tables and joins. This can severely degrade query performance, making simple data retrieval cumbersome and resource-intensive.

- Avoidance: Understand the practical sweet spot. For most transactional systems, 3NF or BCNF is sufficient. Only move to higher normal forms if specific, documented anomalies or data integrity issues necessitate it, particularly for independent multi-valued facts. Always weigh the benefits of higher normalization against the potential performance overhead.

Ignoring Performance Implications:

- The Pitfall: A perfectly normalized database isn't necessarily a performant one. More joins mean more I/O operations and CPU cycles. If a critical business report needs to join 10 tables every time it runs, and it runs hundreds of times an hour, performance will suffer.

- Avoidance: Design for both integrity and performance from the outset. Profile your queries, identify bottlenecks, and be prepared to strategically denormalize when necessary. Use indexing effectively to speed up joins. Consider a separate data warehousing solution (often denormalized) for analytical reporting.

Lack of Understanding of Functional Dependencies:

- The Pitfall: Normalization hinges on correctly identifying functional dependencies. Misidentifying them can lead to a schema that appears normalized but still harbors anomalies, or conversely, creates unnecessary complexity.

- Avoidance: Invest time in thoroughly analyzing your data and business rules. Document all identified functional dependencies. Engage with domain experts to validate your understanding of how data attributes relate to each other.

Premature Optimization (Denormalization):

- The Pitfall: Denormalizing tables "just in case" performance becomes an issue, without concrete evidence from profiling, is a common mistake. This reintroduces redundancy and complicates data maintenance unnecessarily.

- Avoidance: Normalize first. Only denormalize when a performance bottleneck is clearly identified and proven to be caused by normalization-induced joins. Measure before and after to confirm the improvement. Denormalization should be a targeted, evidence-based decision, not a default strategy.

Inadequate Use of Keys and Constraints:

- The Pitfall: A normalized schema relies heavily on primary keys, foreign keys, and unique constraints to enforce relationships and data integrity. Failing to define these properly undermines the benefits of normalization.

- Avoidance: Always define primary keys for every table. Establish foreign key relationships to link related tables and enforce referential integrity. Use unique constraints where appropriate to prevent duplicate entries for candidate keys.
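Declaring a foreign key is not always enough to enforce it. SQLite, for example, parses FOREIGN KEY clauses but, in its default configuration, only enforces them when the foreign_keys pragma is enabled on the connection. A minimal sketch using Python's sqlite3 module with simplified, hypothetical Courses and Enrollments tables:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Must be set outside a transaction; enforcement is per connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript(
    """
    CREATE TABLE Courses (
        CourseName TEXT PRIMARY KEY
    );
    CREATE TABLE Enrollments (
        StudentID  INTEGER NOT NULL,
        CourseName TEXT    NOT NULL REFERENCES Courses (CourseName),
        PRIMARY KEY (StudentID, CourseName)
    );
    INSERT INTO Courses VALUES ('Math');
    """
)

conn.execute("INSERT INTO Enrollments VALUES (1, 'Math')")  # valid reference

try:
    conn.execute("INSERT INTO Enrollments VALUES (1, 'Astrology')")  # no such course
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

print(orphan_rejected)  # True
```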

By being mindful of these pitfalls, database designers can craft robust, efficient, and maintainable systems that strike the right balance between theoretical purity and practical performance.

The Impact of Normalization on Database Performance and Scalability

Database normalization fundamentally influences how a system performs and scales. While often seen as a best practice for data integrity, its effects on operational aspects are multi-faceted.

Benefits for Performance and Scalability:

Reduced Data Redundancy: This is the hallmark of normalization. Less redundant data means:

- Smaller Database Size: Fewer disk reads and writes, potentially faster backups and restores.

- Improved Write Performance: Updates, insertions, and deletions are generally faster because changes need to be applied in fewer places. This is crucial for OLTP systems.

- Reduced Storage Costs: Particularly relevant in cloud environments where storage is billed.

Enhanced Data Integrity:

- Fewer Anomalies: Update, insertion, and deletion anomalies are minimized, leading to more reliable and consistent data. This is not directly a performance benefit but prevents costly data corruption that can severely impact system functionality and trust.

- Easier Maintenance: With data stored logically and without redundancy, the database is simpler to maintain and less prone to errors when schema changes or data migrations occur.

Increased Concurrency:

- Because each fact lives in one row of a smaller, focused table, a change touches fewer rows and therefore takes fewer locks. For example, updating a department head locks one row in Departments rather than every matching row in a wide Courses table. This can reduce lock contention and improve overall throughput when many users modify data at the same time.

Better Data Management and Query Optimization:

- A well-normalized schema provides a clearer, more logical structure, which can help the query optimizer find more efficient execution plans. The absence of repeating groups and transitive dependencies makes the data model more predictable.

Potential Drawbacks for Performance and Scalability:

- Increased Read Performance Overhead (Joins): The primary drawback is that retrieving comprehensive information often requires joining multiple tables. Each join operation adds computational overhead, especially for complex queries that involve many tables or large datasets. For read-heavy applications, this can lead to slower query response times.

- More Complex Queries: Writing queries for a highly normalized database can be more complex, requiring more joins and potentially intricate subqueries. This can increase development time and make queries harder to debug and optimize.

- Increased Indexing Needs: While normalization reduces redundancy, the increased number of tables often necessitates a well-planned indexing strategy for foreign keys and frequently queried columns to mitigate the performance impact of joins. Without proper indexing, joins can become exceedingly slow.

- Denormalization for Analytics: For analytical workloads (OLAP, data warehousing), the overhead of joining highly normalized tables frequently for aggregations and complex reporting often makes denormalization a necessary step to achieve acceptable performance. This implies a separate, often denormalized, data model for analytics.

In conclusion, a correctly normalized database provides a strong foundation for data integrity and efficient write operations, which are critical for transactional systems. However, designers must be acutely aware of the potential for read performance degradation due to extensive joins. The key to successful database design lies in understanding these trade-offs and applying normalization judiciously, often combining it with strategic denormalization or performance tuning techniques like indexing and materialized views to achieve optimal performance for specific workloads and ensure long-term scalability.

Frequently Asked Questions

Q: Why is database normalization important?

A: Normalization is crucial for reducing data redundancy and improving data integrity. It helps prevent anomalies during data updates, insertions, and deletions, ensuring consistency and accuracy across the database.

Q: What is the main difference between normalization and denormalization?

A: Normalization aims to eliminate redundancy and improve data integrity, typically leading to more tables and joins. Denormalization intentionally adds redundancy to improve query performance, often by reducing the number of joins needed for read-heavy operations.

Q: Which normal form is usually sufficient for practical database design?

A: For most transactional (OLTP) systems, Third Normal Form (3NF) or Boyce-Codd Normal Form (BCNF) are considered sufficient. Higher normal forms are rarely implemented due to increasing complexity and diminishing practical benefits.