Docker Compose: Orchestrating Multi-Container Apps for Devs

Modern applications rarely exist as monolithic, self-contained units. Instead, they are often a set of interconnected services: a database, a backend API, a frontend client, a caching layer, and perhaps a message queue. Managing these components, especially during local development or in a testing environment, quickly becomes tedious. This is where Docker Compose steps in, offering a declarative way to define and run multi-service Docker applications. For developers building such systems, knowing how to orchestrate multi-container apps matters for efficiency and consistency across the stages of the development lifecycle. Compose lets you define the entire application stack in a single, version-controlled file, so that "it works on my machine" comes much closer to "it works everywhere."

- Docker Compose: Orchestrating Multi-Container Apps - Simplification Explained

- The docker-compose.yml File: Your Application Blueprint

- How Docker Compose Works: Under the Hood

- Key Features and Commands of Docker Compose

- Real-World Applications and Use Cases

- Advanced Docker Compose Concepts

- Benefits and Potential Drawbacks of Docker Compose

- Future Outlook and Alternatives

- Conclusion

- Frequently Asked Questions

- Further Reading & Resources

Docker Compose: Orchestrating Multi-Container Apps - Simplification Explained

Before delving into the intricacies of Docker Compose, it's essential to grasp the fundamental problem it solves. Docker revolutionized how we package and run applications by introducing containers—lightweight, isolated environments that encapsulate an application and its dependencies. While a single Docker container is excellent for isolating one service, real-world applications often comprise several services that need to interact. Consider a typical web application: it might need a web server (e.g., Nginx), an application server (e.g., Node.js, Python Flask), and a database (e.g., PostgreSQL, MongoDB). Each of these components ideally runs in its own container for isolation and scalability.

The Challenge of Multi-Container Setups

Manually managing multiple Docker containers can quickly become cumbersome. Starting each container individually, linking them, configuring their networks, and ensuring they have access to necessary volumes involves a sequence of commands that is error-prone and difficult to replicate consistently. For instance, you might need to run docker run -p 80:80 -d --name web nginx, then docker run -d --name app --link web app-image (where --link is itself a legacy feature), and then docker run -d --name db db-image. Every additional service, environment variable, and network setting adds more flags to remember. Developers spend valuable time on setup rather than coding, and inconsistencies arise when different team members or environments configure things slightly differently.

Docker Compose to the Rescue

Docker Compose addresses this challenge head-on by providing a tool for defining and running multi-container Docker applications. Instead of a series of imperative docker commands, Compose uses a declarative YAML file, typically named docker-compose.yml, to describe the entire application stack. This file specifies all the services, networks, and volumes required for the application. With a single command, docker-compose up (docker compose up in Docker Compose v2, which ships as a Docker CLI plugin), Compose reads this file, builds or pulls the necessary images, creates the networks and volumes, and starts all specified containers with their interconnections configured. It transforms a complex manual process into a simple, repeatable, and version-controlled operation. This simplifies the development workflow, eases collaboration, and keeps environments consistent.

The docker-compose.yml File: Your Application Blueprint

The heart of Docker Compose is the docker-compose.yml file. This YAML configuration file acts as the blueprint for your multi-container application, meticulously defining every service, network, and volume needed. It's designed to be human-readable and version-controlled, allowing your entire application stack to be managed as code. Understanding its structure and key elements is fundamental to mastering Docker Compose.

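The file traditionally starts with a version key, indicating the Compose file format version, which determined the available features and syntax in the legacy docker-compose tool; version: '3.8' is a common choice. Docker Compose v2 follows the Compose Specification, treats this key as obsolete, and ignores it with a warning, so it can be omitted in new files.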

```yaml
version: '3.8'

services:
  web:
    build: .
    ports:
      - "80:80"
    volumes:
      - .:/code
    depends_on:
      - backend

  backend:
    image: my-backend-app:latest
    environment:
      DATABASE_URL: postgres://user:password@db:5432/mydatabase
    depends_on:
      - db

  db:
    image: postgres:13
    environment:
      POSTGRES_DB: mydatabase
      POSTGRES_USER: user
      POSTGRES_PASSWORD: password

volumes:
  db_data:

networks:
  app_net:
    driver: bridge
```

Services

The services section is the most crucial part of the docker-compose.yml file. Each key under services represents a containerized service within your application. For each service, you define how Docker should build or pull its image, how it should run, and how it interacts with other services.

Key Service Directives:

- image: Specifies the Docker image to use (e.g., nginx:latest, postgres:13). If an image is not found locally, Docker Compose will attempt to pull it from a registry like Docker Hub.
- build: Instead of pulling an image, this directive tells Compose to build an image from a Dockerfile. You can provide the path to the directory containing the Dockerfile (.) or a relative path (./my-app).
  - context: Specifies the path to the directory containing the Dockerfile.
  - dockerfile: Specifies the name of the Dockerfile (default is Dockerfile).
  - args: Build arguments passed to the Dockerfile.
- ports: Maps ports between the host machine and the container. Format: "HOST_PORT:CONTAINER_PORT". For example, "80:80" maps host port 80 to container port 80.
- volumes: Mounts host paths or named volumes into the container. This is crucial for data persistence and code synchronization. Format: "HOST_PATH:CONTAINER_PATH" or "VOLUME_NAME:CONTAINER_PATH".
- environment: Sets environment variables inside the container. This is commonly used for database credentials, API keys, or application-specific configurations.
- networks: Connects a service to specific networks defined in the networks section. If not specified, services are connected to a default network.
- depends_on: Declares dependencies between services. While this doesn't guarantee a service is fully "ready," it ensures that dependent services are started in the correct order. For instance, a backend service should start after its database.
- restart: Defines the restart policy for the container (e.g., always, on-failure, no).
- command: Overrides the default command specified in the Docker image.
- entrypoint: Overrides the default entrypoint specified in the Docker image.
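As a rough illustration of how several of these directives combine, the sketch below uses the long form of build together with command and restart. The service name api, the file name Dockerfile.dev, and the APP_ENV build argument are assumptions made up for this example, not part of any real project.

```yaml
services:
  api:                              # hypothetical service name
    build:
      context: ./my-app             # directory sent to the builder
      dockerfile: Dockerfile.dev    # hypothetical file name; the default is "Dockerfile"
      args:
        APP_ENV: development        # hypothetical; needs a matching ARG line in the Dockerfile
    command: ["python", "app.py"]   # replaces the image's default command
    restart: on-failure             # restart only when the process exits with an error
    ports:
      - "5000:5000"                 # HOST_PORT:CONTAINER_PORT
```

The short form build: ./my-app is equivalent to setting only context, so the long form is only needed when the Dockerfile name or build arguments differ from the defaults.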

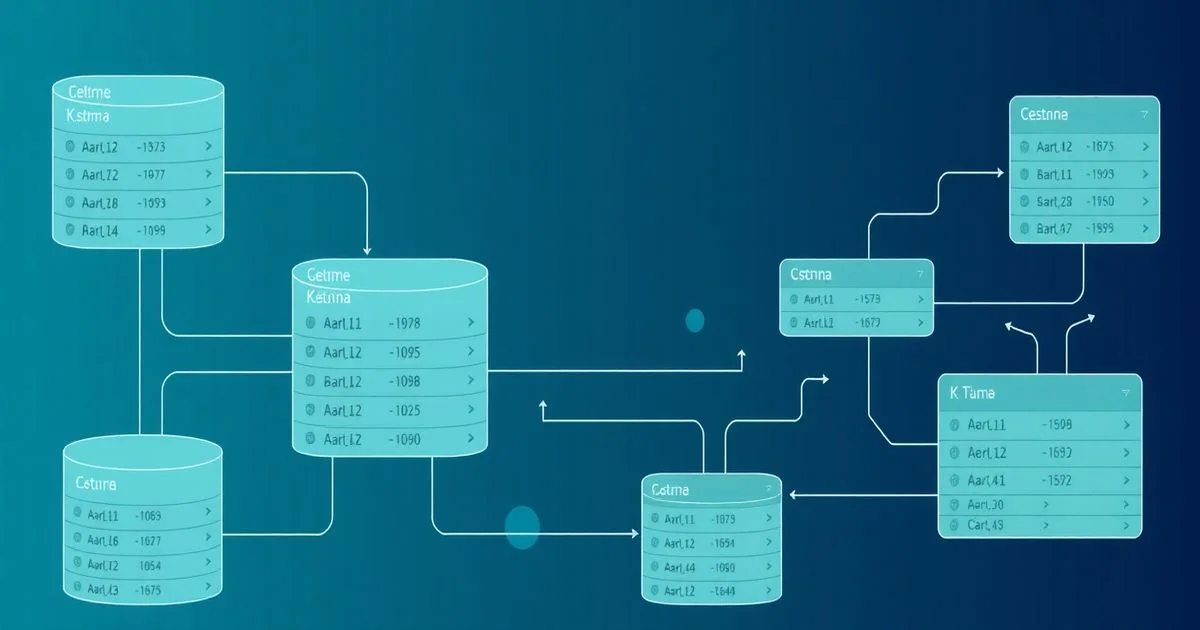

Networks

Networks in Docker Compose allow containers to communicate with each other in an isolated environment. By default, Docker Compose creates a single bridge network for your application, and all services connect to it. Services on the same network can communicate using their service names as hostnames.

You can define custom networks using the networks top-level key. This offers greater control over network topology, allowing you to isolate services or create different network segments for specific communication patterns. For example, a "frontend" network and a "backend" network, with a reverse proxy bridging them.

```yaml
networks:
  app_frontend:
    driver: bridge
  app_backend:
    driver: bridge
```

You would then assign services to these networks under their respective networks keys:

```yaml
services:
  nginx:
    networks:
      - app_frontend
      - app_backend  # Nginx needs to talk to both
  backend:
    networks:
      - app_backend
```

Volumes

Volumes are essential for persistent data storage and for sharing code between your host machine and containers. Without volumes, any data written inside a container is lost when the container is removed. Docker Compose supports two main types of volumes:

- Named Volumes: Defined under the top-level volumes key, these are managed by Docker and stored in a specific location on the host machine. They are ideal for database data or application logs that need to persist beyond the lifecycle of individual containers.

  ```yaml
  volumes:
    db_data:

  services:
    db:
      volumes:
        - db_data:/var/lib/postgresql/data
  ```

- Bind Mounts: These mount a file or directory from the host machine directly into a container. They are commonly used during development to sync code changes instantly without rebuilding images.

  ```yaml
  services:
    web:
      volumes:
        - .:/app  # Mounts the current directory into /app inside the container
  ```

Environment Variables & Secrets

Environment variables provide a flexible way to configure services without modifying their images. They can be set directly in the docker-compose.yml file using the environment directive or loaded from an external .env file (which is excellent for managing sensitive data or environment-specific configurations).

```yaml
services:
  backend:
    environment:
      FLASK_ENV: development
      DEBUG: 'True'
      DATABASE_URL: ${DB_URL}  # Variable loaded from .env file
```
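A slightly more defensive variant is sketched below. It assumes a hypothetical backend.env file and a hypothetical API_KEY variable, and relies on the default-value and required-value forms of Compose variable interpolation.

```yaml
services:
  backend:
    env_file:
      - ./backend.env   # hypothetical file of KEY=value lines passed into the container
    environment:
      # Falls back to a local database URL when DB_URL is unset or empty
      DATABASE_URL: ${DB_URL:-postgres://user:password@db:5432/mydatabase}
      # Compose stops with this message when API_KEY is unset or empty
      API_KEY: ${API_KEY:?API_KEY must be set}
```

Note the difference between the two files: the project-level .env file feeds ${...} substitution in the Compose file itself, while env_file injects variables into the container's environment.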

For more sensitive information like API keys or database passwords, Docker also offers a secrets mechanism, which is more robust for production environments and can be integrated with Compose, although it's more commonly used with Docker Swarm or Kubernetes.
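As a minimal sketch of file-based secrets in Compose, the fragment below mounts a password file into the database container. The path ./db_password.txt is an assumption for illustration; the example also relies on the official postgres image reading its password from the file named in POSTGRES_PASSWORD_FILE.

```yaml
services:
  db:
    image: postgres:13
    environment:
      # The official postgres image reads the password from this file
      POSTGRES_PASSWORD_FILE: /run/secrets/db_password
    secrets:
      - db_password               # mounted at /run/secrets/db_password

secrets:
  db_password:
    file: ./db_password.txt       # hypothetical path; keep it out of version control
```

Compared with an environment variable, the value does not appear in the Compose file or in the container's environment listing, only as a file inside the container.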

Dependencies and Startup Order

The depends_on directive, while useful for indicating service startup order, has a critical nuance: it only ensures that the dependency container has started, not that the application inside the container is ready to accept connections. For robust applications, especially in CI/CD or production, you might need more sophisticated health checks or wait-for-it scripts to ensure services are fully operational before dependents attempt to connect. However, for local development, depends_on is often sufficient for basic ordering.

For example, ensuring a database container is launched before the application container that tries to connect to it:

```yaml
services:
  backend:
    depends_on:
      - db
  db:
    # ...
```
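When the container merely having started is not enough, the long form of depends_on can wait for a health check instead. The sketch below assumes a Compose implementation that follows the Compose Specification (such as Docker Compose v2); older docker-compose releases using 3.x file formats may reject or ignore the condition key.

```yaml
services:
  backend:
    image: my-backend-app:latest
    depends_on:
      db:
        condition: service_healthy   # wait until the db health check passes
  db:
    image: postgres:13
    environment:
      POSTGRES_PASSWORD: password
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 5s
      timeout: 5s
      retries: 5
```

This keeps the readiness logic in the Compose file rather than in a wait-for-it style wrapper script, at the cost of requiring a meaningful health check for each dependency.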

By carefully defining these elements in your docker-compose.yml, you create a powerful, self-documenting, and reproducible environment for your multi-container applications.

How Docker Compose Works: Under the Hood

Understanding the docker-compose.yml file is crucial, but equally important is knowing what happens when you execute docker-compose up. Docker Compose orchestrates a series of steps to bring your application stack to life, involving image management, network configuration, and volume provisioning.

The Build Process

When you run docker-compose up, Compose first inspects each service definition. If a service specifies a build instruction, Compose navigates to the specified context directory and executes docker build using the Dockerfile found there. This process constructs a Docker image for that service. If an image directive is used, Compose checks if the specified image exists locally. If not, it pulls the image from a configured Docker registry (e.g., Docker Hub). This ensures that all necessary component images are available before container instantiation.

Network Management

Docker Compose automatically creates a default network for your project (named after the directory where your docker-compose.yml file resides, e.g., myproject_default). All services defined in your docker-compose.yml are connected to this network unless explicitly configured otherwise. This network is a bridge network, allowing containers to communicate with each other using their service names as DNS hostnames. For example, if you have a service named db, your backend service can connect to the database simply by using db as the hostname.

If you define custom networks in the networks top-level section, Compose creates those specific networks and connects services to them as specified. This granular control over networking is vital for isolating different parts of your application or for more complex network topologies.

Volume Management

Compose manages two types of volumes: bind mounts and named volumes.

- Bind Mounts: When a bind mount is specified (e.g., ./code:/app), Docker Compose instructs the Docker daemon to mount the host directory directly into the container. This is a direct reference and allows for real-time file synchronization, making it ideal for development where code changes on the host need to be immediately reflected in the container.
- Named Volumes: For named volumes (e.g., db_data:/var/lib/postgresql/data), Compose creates and manages these volumes. If a named volume doesn't exist, Docker creates it on the host machine in a Docker-managed location. This provides a more robust and portable way to persist data, as the data volume's lifecycle can be independent of individual containers.

Orchestration Lifecycle

Once images are built or pulled, networks are set up, and volumes are prepared, Compose begins launching containers in the specified order (if depends_on is used).

- Container Creation: For each service, Compose creates a new container instance.
- Resource Allocation: It allocates resources, applies port mappings, sets environment variables, and mounts volumes as defined in the docker-compose.yml.
- Command Execution: The container's entrypoint and command are executed, starting the application process inside the container.
- Health Checks (Optional): If health checks are configured, Docker monitors the health of the application within the container.
- Logging: All container logs are aggregated and can be viewed via docker-compose logs.

When you run docker-compose down, Compose reverses this process: it stops and removes all containers, networks, and (optionally) volumes associated with the project, effectively cleaning up the entire application stack. This complete and isolated lifecycle management is one of Compose's most powerful features for consistent development and testing environments.

Key Features and Commands of Docker Compose

Docker Compose provides a rich set of commands and features designed to manage your multi-container applications efficiently. Beyond simply bringing up your stack, these tools enable you to inspect, scale, and clean up your environments.

docker-compose up

This is the most fundamental command. It builds (if necessary), creates, starts, and attaches to containers for all services defined in your docker-compose.yml file.

- docker-compose up: Starts all services in the foreground, attaching your terminal to the combined log output of all containers. Stopping the command (for example with Ctrl+C) stops the containers.
- docker-compose up -d: Starts services in detached mode, meaning the containers run in the background, and your terminal is freed.
- docker-compose up --build: Builds images for services that have a build instruction before starting the containers, rather than reusing previously built images. Useful for ensuring the latest code changes are incorporated into your images.

docker-compose down

This command gracefully stops and removes containers, networks, and optionally volumes created by docker-compose up.

- docker-compose down: Stops and removes containers and default networks.
- docker-compose down --volumes or docker-compose down -v: Also removes named volumes associated with the project, which is crucial for a clean slate, especially during development. Be cautious with production data.
- docker-compose down --rmi all: Removes all images used by any service, not just the ones built by your project.

docker-compose build

Explicitly builds or rebuilds images for services that have a build instruction. This is useful for pre-building images before deployment or for debugging build issues.

- docker-compose build: Builds all services defined in the docker-compose.yml that have a build instruction.
- docker-compose build <service_name>: Builds only the image for a specific service.

docker-compose ps

Lists the containers that belong to the project, showing each one's current state, exposed ports, and command. It provides a quick overview of what is running. In Docker Compose v2, stopped containers are shown only when you add the --all flag.

```
$ docker-compose ps
       Name                   Command            State          Ports
----------------------------------------------------------------------------------
myproject_backend_1   python app.py              Up      5000/tcp
myproject_db_1        docker-entrypoint.sh ...   Up      5432/tcp
myproject_web_1       nginx -g 'daemon off;'     Up      0.0.0.0:80->80/tcp
```

docker-compose logs

Displays log output from services. This is invaluable for debugging and monitoring.

- docker-compose logs: Shows logs from all services.
- docker-compose logs -f: Follows log output in real-time.
- docker-compose logs <service_name>: Shows logs for a specific service.
- docker-compose logs --tail 100: Shows the last 100 lines of logs.

Scalability with scale

Compose allows you to scale up services by running multiple instances of a container. While not a full-fledged orchestrator like Kubernetes, it's useful for simple load distribution in development or testing.

- docker-compose up --scale web=3 --scale backend=2: Starts three instances of the web service and two instances of the backend service. The --scale flag is repeated once per service.
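One practical catch when scaling: a service that publishes a fixed host port (such as "80:80") cannot run more than one replica on the same host, because only one container can bind that port. A possible workaround, sketched here under the assumption that clients do not need a fixed port, is to publish only the container port and let Docker choose a free host port per replica.

```yaml
services:
  web:
    build: .
    ports:
      - "80"   # container port only; each replica gets its own free host port
```

The assigned host ports appear in the Ports column of docker-compose ps. In practice a reverse proxy service in front of the replicas is a common alternative, so that only the proxy publishes a fixed port.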

Profiles for Different Environments

Introduced in Docker Compose 1.28 and now part of the Compose Specification, profiles allow you to define services that are only started under specific conditions. This is powerful for managing different development, testing, or production configurations within a single docker-compose.yml file. Services without a profiles attribute are always started.

```yaml
version: '3.8'

services:
  web:
    build: .
    ports:
      - "80:80"
    profiles: ["frontend"]  # This service belongs to the 'frontend' profile

  backend:
    image: my-backend-app:latest
    profiles: ["backend", "dev"]

  db:
    image: postgres:13
    profiles: ["backend", "test"]  # DB for backend and also for testing purposes
```

To use a profile: docker-compose --profile frontend up -d. You can activate multiple profiles: docker-compose --profile backend --profile dev up -d. This provides immense flexibility for dynamic environment setup.

These commands form the core toolkit for anyone working with Docker Compose, enabling efficient management and interaction with multi-container applications throughout their lifecycle.

Real-World Applications and Use Cases

Docker Compose isn't just a theoretical tool; it's a workhorse in various development and operational scenarios. Its simplicity and power make it incredibly versatile for tasks ranging from local development to CI/CD pipelines.

Local Development Environments

One of the most common and impactful use cases for Docker Compose is creating reproducible local development environments. Developers often struggle with dependency management, conflicting library versions, and the dreaded "it works on my machine" syndrome. Compose eliminates these issues by encapsulating the entire application stack—databases, caches, message queues, and application services—within isolated containers.

Imagine a full-stack developer working on an application that uses Node.js for the frontend, Python/Django for the backend, PostgreSQL as the database, and Redis for caching. Without Docker Compose, they would need to install Node.js, Python, PostgreSQL, and Redis locally, manage their versions, and configure them to work together. With Compose, all these components are defined in a docker-compose.yml file. A simple docker-compose up command brings the entire environment to life, ensuring every team member works with identical configurations. This consistency significantly reduces setup time and environment-related bugs.

Testing and CI/CD Pipelines

Docker Compose shines in automated testing and Continuous Integration/Continuous Deployment (CI/CD) pipelines. In a CI environment, you need a clean, isolated, and consistent environment to run tests for every code commit. Manually provisioning databases or spinning up dependent services for each test run is inefficient and slow.

With Docker Compose, your CI server can use the same docker-compose.yml file to quickly spin up the entire application stack (or a subset relevant for testing) for integration and end-to-end tests. After tests complete, docker-compose down tears down the environment, leaving no artifacts behind. This ensures that tests are run in a pristine state every time, preventing contamination from previous runs. Tools like GitLab CI, Jenkins, and GitHub Actions readily integrate with Docker Compose for this purpose, enabling robust and reliable automated testing.
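As one possible shape for such a pipeline, here is a minimal GitHub Actions workflow sketch. The workflow file name, the backend service name, and the pytest command are assumptions for illustration; it also uses the docker compose (v2) spelling of the commands.

```yaml
# .github/workflows/integration.yml (hypothetical file name)
name: integration-tests
on: [push]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Start the stack
        run: docker compose up -d --build
      - name: Run tests
        # Hypothetical test command; -T disables TTY allocation for non-interactive CI
        run: docker compose exec -T backend pytest
      - name: Tear down
        if: always()   # clean up even when the tests fail
        run: docker compose down -v
```

The if: always() condition on the teardown step is what preserves the clean-slate property: containers and volumes are removed regardless of the test outcome.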

Microservices Architectures

While full-scale microservices deployments often leverage orchestrators like Kubernetes, Docker Compose is an excellent tool for developing and testing individual microservices or small groups of interconnected services locally. In a microservices architecture, you might have dozens of small, independent services. Building and testing a single microservice that depends on a database, a message broker, and perhaps another service can be cumbersome. For complex microservice interactions, exploring patterns like the Circuit Breaker Pattern can also be beneficial for resilience.

Docker Compose allows developers to define a docker-compose.yml file for each microservice (or a logical grouping), encompassing the microservice itself and all its immediate dependencies. This enables developers to focus on developing and testing one microservice at a time, without needing to run the entire sprawling microservices ecosystem. It promotes a modular development approach and simplifies the debugging process for specific service interactions.

Proof-of-Concept Deployments

For quickly demonstrating an idea or building a proof-of-concept (PoC), Docker Compose is invaluable. When you need to showcase an application that requires multiple services but doesn't yet warrant a full-fledged Kubernetes deployment, Compose offers the perfect lightweight solution.

A startup might use Compose to bundle their entire application (frontend, backend, database) into a single, easily deployable unit for investor demonstrations or early user testing. Universities and researchers often use Compose to package complex scientific applications with their dependencies, making them easy to share and reproduce across different machines without worrying about environmental setup. This rapid deployment capability drastically speeds up the initial phases of project development and validation.

Data Science and Machine Learning Workflows

Data scientists and ML engineers often work with environments that require specific versions of libraries (e.g., TensorFlow, PyTorch, Pandas, Scikit-learn), specific Python versions, and sometimes specialized hardware drivers. These environments can be notoriously difficult to set up consistently across different machines.

Docker Compose can define a data science workflow where a Jupyter Notebook server runs in one container, a database (e.g., PostgreSQL or MongoDB for data storage) runs in another, and perhaps a specialized ML model serving container runs in a third. This allows data scientists to ensure their experiments are reproducible and portable. They can share their docker-compose.yml and Dockerfiles, guaranteeing that anyone can spin up the exact same computational environment to replicate results. This capability is critical for collaboration and for moving models from research to deployment.
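A minimal sketch of such a setup might look like the following. The jupyter/scipy-notebook image, the ./notebooks directory, and the experiment_data volume name are assumptions chosen for illustration; the model-serving container is left out to keep the example short.

```yaml
services:
  notebook:
    image: jupyter/scipy-notebook       # assumed image; pin a specific tag for reproducibility
    ports:
      - "8888:8888"                     # Jupyter's default port
    volumes:
      - ./notebooks:/home/jovyan/work   # hypothetical host directory with the notebooks
    depends_on:
      - db

  db:
    image: postgres:13
    environment:
      POSTGRES_PASSWORD: password
    volumes:
      - experiment_data:/var/lib/postgresql/data

volumes:
  experiment_data:
```

Inside the notebook container, the database is reachable under the hostname db, just as for any other pair of services on the default Compose network.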

In summary, Docker Compose bridges the gap between individual containerization and full-blown orchestration, providing an indispensable tool for simplifying multi-container application management across various stages of the software development lifecycle.

Advanced Docker Compose Concepts

Beyond the basic setup, Docker Compose offers several advanced features that enhance flexibility, robustness, and environment management for complex applications. Leveraging these can significantly streamline your development and deployment workflows.

Extending Services with docker-compose.override.yml

A powerful feature of Docker Compose is the ability to extend and override configurations. You can have a base docker-compose.yml file that defines your common services and then create environment-specific override files, such as docker-compose.override.yml or docker-compose.prod.yml, to modify or add configurations.

When you run docker-compose up, Compose automatically looks for docker-compose.yml and then docker-compose.override.yml (if it exists) in the same directory, merging their configurations. Services defined in the override file will extend or replace properties of services with the same name in the base file.

Example:

docker-compose.yml (Base configuration)

```yaml
version: '3.8'

services:
  backend:
    build: .
    ports:
      - "5000:5000"
    environment:
      FLASK_ENV: production
      DEBUG: 'False'

  db:
    image: postgres:13
```

docker-compose.override.yml (Development-specific overrides)

```yaml
version: '3.8'

services:
  backend:
    ports:
      - "5001:5000"  # Extra host port for dev (merged with 5000:5000 from the base file)
    volumes:
      - .:/app  # Bind mount for live code changes
    environment:
      FLASK_ENV: development
      DEBUG: 'True'  # Enable debug mode in dev

  db:
    ports:
      - "5433:5432"  # Publish the dev DB on host port 5433
```

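When you run docker-compose up, the backend service will enable debug mode and bind mount the local directory, while the db service will be accessible on host port 5433. Single-value settings such as the FLASK_ENV and DEBUG environment entries are replaced by the override, but Compose concatenates multi-value options such as ports, so the backend ends up published on both host ports 5000 and 5001. If you want only 5001 in development, leave the port mapping out of the base file and declare it in each override. This pattern is excellent for maintaining a clean separation between development, testing, and production settings. You can also specify multiple Compose files explicitly: docker-compose -f docker-compose.yml -f docker-compose.prod.yml up.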
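For the explicit -f form, a production override might look like the sketch below. The file contents are hypothetical; the document only names docker-compose.prod.yml without showing it.

```yaml
# docker-compose.prod.yml (hypothetical contents)
version: '3.8'

services:
  backend:
    restart: always          # keep the service running after crashes or host reboots
    environment:
      FLASK_ENV: production
      DEBUG: 'False'

  db:
    restart: always
```

When files are listed explicitly with -f, Compose does not load docker-compose.override.yml automatically, so development-only settings such as the bind mount stay out of the production run.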

Health Checks for Robustness

While depends_on helps with startup order, it doesn't guarantee that a service inside a container is fully operational. A database container might be "up," but the database server within it might still be initializing. Health checks provide a way for Docker to determine if a container's services are actually ready and responsive.

You can define a healthcheck directive for each service:

```yaml
services:
  db:
    image: postgres:13
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 5s
      timeout: 5s
      retries: 5
```

Here, Docker will run pg_isready inside the db container every 5 seconds. After 5 consecutive failures, the container is marked as "unhealthy." The short list form of depends_on does not wait for health checks to pass, but the long form accepts a condition such as service_healthy, which Compose implementations following the Compose Specification (including Docker Compose v2) honor before starting the dependent service. With older tooling you can fall back on external wait scripts. For local development, the health status also provides a clear indicator of a service's readiness.

Resource Constraints and Limits

For development and testing environments, you might want to limit the CPU and memory resources available to your containers to simulate production constraints or prevent a misbehaving service from consuming all host resources.

```yaml
services:
  backend:
    image: my-backend-app:latest
    deploy:
      resources:
        limits:
          cpus: '0.5'    # Limit to 50% of one CPU core
          memory: 512M   # Limit to 512MB RAM
        reservations:
          cpus: '0.25'   # Reserve 25% of one CPU core
          memory: 128M   # Reserve 128MB RAM
```

The deploy key and its sub-keys were originally intended for Docker Swarm mode (docker stack deploy). The legacy docker-compose v1 tool ignores them for local runs unless started with the --compatibility flag, while Docker Compose v2 applies the deploy.resources limits to local containers. Outside Swarm, service-level keys such as cpus, cpu_shares, cpu_quota, and mem_limit can also be set directly under a service definition.
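For comparison, a sketch of the service-level form is shown below. It assumes a Compose implementation that accepts these keys (the Compose Specification does; the legacy 3.x file formats did not), and the values simply mirror the limits used in the deploy example.

```yaml
services:
  backend:
    image: my-backend-app:latest
    cpus: 0.5               # at most half of one CPU core
    mem_limit: 512m         # hard memory limit
    mem_reservation: 128m   # soft memory reservation
```

Which form to prefer is largely a matter of where the file will run: the deploy block carries over to Swarm stacks, while the service-level keys are shorter for purely local setups.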

Using with Docker Swarm

While Docker Compose is primarily a local development tool, its YAML file format is largely compatible with Docker Swarm. Docker Swarm is Docker's native orchestration solution for managing a cluster of Docker nodes. You can deploy a Compose file to a Swarm cluster using the docker stack deploy command:

docker stack deploy -c docker-compose.yml myappstack

This command treats your docker-compose.yml file as a "stack" definition, deploying services, networks, and volumes across the Swarm cluster. This allows you to scale services, manage rolling updates, and achieve higher availability. However, for advanced production orchestration with features like auto-scaling, self-healing, and declarative updates, Kubernetes remains the industry standard, and Compose files would typically be translated into Kubernetes manifests. Despite this, Compose's integration with Swarm offers a smooth transition path for those who wish to leverage Docker's native clustering capabilities without the complexity of Kubernetes.
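To give a feel for what a stack definition adds on top of a local Compose file, the sketch below sets a replica count and a rolling-update policy under deploy. The specific numbers are arbitrary illustrations, not recommendations.

```yaml
services:
  backend:
    image: my-backend-app:latest   # stacks need a prebuilt image; build is ignored by docker stack deploy
    deploy:
      replicas: 3                  # run three tasks across the Swarm nodes
      update_config:
        parallelism: 1             # update one task at a time
        delay: 10s                 # wait between updating each task
      restart_policy:
        condition: on-failure
```

Because docker stack deploy does not build images, services that use build: in development need their images pushed to a registry that the Swarm nodes can reach before the stack is deployed.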

By mastering these advanced concepts, developers can unlock the full potential of Docker Compose, creating highly flexible, resilient, and efficiently managed multi-container application environments.

Benefits and Potential Drawbacks of Docker Compose

Like any powerful tool, Docker Compose offers significant advantages but also comes with certain limitations that dictate its optimal use cases. Understanding these aspects is key to making informed architectural decisions.

Advantages: Simplicity, Portability, Consistency

1. Simplified Development Workflow:

Docker Compose dramatically simplifies the setup and teardown of complex development environments. Instead of managing multiple docker run commands, network configurations, and volume mounts manually, developers can spin up an entire application stack with a single docker-compose up command. This reduces onboarding time for new team members and minimizes "it works on my machine, but not yours" scenarios. A study by IBM found that containerization, often enabled by tools like Compose, can reduce application deployment time by up to 50% in development environments.

2. Portability Across Environments:

The docker-compose.yml file serves as a single source of truth for your application's architecture. This YAML file can be committed to version control and shared across different development machines, testing environments, and even some production setups (especially for smaller applications or staging). This ensures that the application behaves consistently, regardless of the underlying host operating system or specific configurations. This level of portability is invaluable for distributed teams and consistent CI/CD pipelines.

3. Consistency and Reproducibility:

By defining all services, networks, and volumes declaratively in a YAML file, Docker Compose guarantees a high degree of consistency and reproducibility. Every time docker-compose up is run, it attempts to bring up the environment exactly as described in the file. This eliminates configuration drift and ensures that tests are run against the same environment every time, and developers encounter fewer environment-specific bugs.

4. Isolation of Services:

Each service runs in its own container, providing excellent isolation. This means that dependencies for one service (e.g., a specific Python version) won't conflict with dependencies for another service (e.g., a different Node.js version). This modularity enhances stability and simplifies troubleshooting.

5. Cost-Effectiveness for Small Deployments:

For small to medium-sized applications, proof-of-concepts, or local development, Docker Compose is extremely cost-effective. It requires minimal overhead to set up and manage, avoiding the complexity and resource demands of larger orchestration platforms like Kubernetes.

Limitations: Not for Large-Scale Production Orchestration

1. Lack of Built-in High Availability and Self-Healing:

Docker Compose itself does not provide features for high availability or self-healing. If a container fails, Compose won't automatically restart it on a different host, nor will it scale out services dynamically based on load. While you can configure restart policies, these only apply to the single host where Compose is running. For true fault tolerance and automated recovery, you need a full-fledged orchestrator.

2. Limited Scalability Features:

While docker-compose up --scale allows running multiple instances of a service on a single host, it doesn't distribute these instances across a cluster of machines. For horizontally scaling applications across multiple servers, Docker Compose is inadequate. Modern applications often require the ability to dynamically scale services up or down based on traffic, which is beyond Compose's scope.
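A minimal sketch of a service that can be scaled on one host is shown below. The service name and image are assumptions; the important details are the things left out, since a fixed container_name or a fixed host port would collide as soon as a second replica starts.

```yaml
# Illustrative sketch: a service that tolerates multiple replicas on one host
services:
  worker:
    image: nginx:1.27-alpine
    # No container_name: Compose generates a unique name for each replica.
    ports:
      - "80"   # container port only; Docker picks a free host port per replica
```

Running docker-compose up -d --scale worker=3 against this file would start three containers, all on the same machine.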

3. Single-Host Scope:

Docker Compose is fundamentally designed for managing applications on a single Docker host. A Compose file can be deployed to a Docker Swarm cluster with docker stack deploy, but Compose itself doesn't offer the advanced cluster management, scheduling, and resource allocation capabilities found in more mature orchestration platforms like Kubernetes.

4. Complex Production-Grade Features Missing:

Features critical for production, such as advanced load balancing, service discovery (beyond simple DNS names within its network), secret management (more robust than .env files), automated rolling updates with rollbacks, and advanced monitoring, are either absent or very basic in Docker Compose. Implementing these robustly would require significant manual scripting or external tools.
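To show the "very basic" end of that spectrum, here is a sketch of Compose's file-based secrets. The secret name and the ./secrets/db_password.txt path are hypothetical; the POSTGRES_PASSWORD_FILE variable is a convention of the official postgres image.

```yaml
# Illustrative sketch: a file-based secret mounted into one service
services:
  db:
    image: postgres:16
    environment:
      POSTGRES_PASSWORD_FILE: /run/secrets/db_password   # read the password from the mounted file
    secrets:
      - db_password
secrets:
  db_password:
    file: ./secrets/db_password.txt   # hypothetical path; keep this file out of version control
```

This keeps the password out of the YAML and the environment listing, but the secret is still a plain file on the host, with no encryption at rest, rotation, or access auditing.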

5. Not a Replacement for Kubernetes:

It's crucial to understand that Docker Compose is not a competitor or replacement for Kubernetes. Instead, they serve different purposes. Compose is a fantastic tool for local development and single-host environments, simplifying the definition of multi-container applications. Kubernetes is a robust platform for deploying, scaling, and managing containerized applications across a cluster of nodes in production. Often, developers use Compose locally and then translate their application definitions to Kubernetes manifests for production deployment.

In summary, Docker Compose excels at simplifying multi-container application management in development and testing contexts. It offers unparalleled ease of use and consistency. However, for applications requiring high availability, extensive scalability, and sophisticated production-grade features across a cluster of machines, a dedicated orchestrator like Kubernetes is the appropriate choice.

Future Outlook and Alternatives

The landscape of container orchestration is constantly evolving, driven by the increasing complexity of modern applications and the need for greater automation and resilience. Docker Compose, while firmly established in its niche, exists within this broader ecosystem.

Evolution of Container Orchestration

When Docker first emerged, managing multiple containers was a significant challenge. Docker Compose filled a crucial gap, simplifying multi-container application definitions for single-host environments. Over time, the demand for distributing these applications across multiple hosts, ensuring high availability, and automating operational tasks led to the rise of dedicated orchestrators.

Docker itself attempted to address this with Docker Swarm, a native orchestration solution integrated directly into the Docker engine. For simple clustering needs, Swarm provides a more straightforward setup than Kubernetes. However, the ecosystem has largely converged on Kubernetes for large-scale, enterprise-grade orchestration.

Beyond Compose: Kubernetes and Nomad

Kubernetes: Kubernetes has emerged as the dominant force in container orchestration. Originally developed by Google and now an open-source project hosted by the Cloud Native Computing Foundation (CNCF), it provides a powerful, extensible platform for automating the deployment, scaling, and management of containerized applications. It offers features such as self-healing, horizontal auto-scaling, intelligent scheduling, declarative configuration, secret management, and robust networking. It has a steeper learning curve than Docker Compose, but it covers the needs of production-grade, distributed systems that Compose does not. Many companies adopt a strategy where developers use Docker Compose for local development and then convert their docker-compose.yml definitions into Kubernetes manifests for deployment to production clusters. Tools like Kompose can assist in this translation, although manual refinement is often necessary.

Nomad: HashiCorp Nomad is another strong contender in the orchestration space, often chosen because it is simpler to operate than Kubernetes. It is a lightweight workload orchestrator that can manage containers, virtual machines, and non-containerized applications. It integrates well with other HashiCorp tools like Consul (for service discovery) and Vault (for secret management). For teams looking for a less complex alternative to Kubernetes that still offers multi-host scheduling and high availability, Nomad presents a compelling option, particularly for mixed workload environments.

Cloud-Native Services: Beyond self-managed orchestrators, major cloud providers offer their own managed container services. AWS provides Elastic Kubernetes Service (EKS) and Elastic Container Service (ECS), Google Cloud offers Google Kubernetes Engine (GKE), and Microsoft Azure has Azure Kubernetes Service (AKS). These services abstract away much of the operational overhead of running Kubernetes clusters, making it easier for organizations to adopt container orchestration without deep expertise in managing the underlying infrastructure. They often integrate seamlessly with other cloud services, providing a comprehensive platform for modern application deployment.

Docker Compose will likely continue to thrive as the go-to tool for local development, rapid prototyping, and managing smaller, single-host multi-container applications. Its ease of use and simplicity ensure its place in the developer's toolkit. However, as applications scale and demand higher levels of resilience and automation in production, developers will increasingly look to Kubernetes, Nomad, or cloud-native managed services to meet those advanced orchestration needs. The key is to understand the strengths of each tool and choose the right one for the specific stage and scale of your application.

Conclusion

In the fast-paced world of software development, managing complex application architectures is a persistent challenge. Docker Compose has become a widely used tool for this job, simplifying the definition and management of multi-service applications. By encapsulating an entire application stack within a single, version-controlled docker-compose.yml file, it addresses critical issues of environmental consistency, reproducibility, and developer efficiency.

From streamlining local development workflows and enabling robust CI/CD pipelines to facilitating microservices development and rapid proof-of-concept deployments, Docker Compose offers a pragmatic and powerful solution. While it excels in these areas, its single-host scope and lack of advanced orchestration features mean that for large-scale, production-grade deployments requiring high availability and dynamic scaling, solutions like Kubernetes or HashiCorp Nomad are more suitable. Nevertheless, Docker Compose remains a foundational component in the containerization ecosystem, serving as the essential first step for developers embarking on their journey with multi-container applications. Its continued evolution, often in conjunction with these larger orchestrators, underscores its enduring value in modern software engineering.

Frequently Asked Questions

Q: What is the primary purpose of Docker Compose?

A: Docker Compose simplifies the process of defining and running multi-container Docker applications. It allows developers to configure an entire application stack, including services, networks, and volumes, within a single YAML file, enabling consistent and reproducible environments.

Q: How does docker-compose.yml differ from a Dockerfile?

A: A Dockerfile defines how to build a single Docker image for one service, specifying its dependencies and setup. In contrast, docker-compose.yml defines how multiple services (each potentially based on its own Dockerfile or a pre-built image) interact to form a complete application, including networking and volume configurations.
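The difference can be sketched in a single Compose file. In this illustrative fragment, the ./api directory (assumed to contain a Dockerfile), the port numbers, and the example password are all hypothetical.

```yaml
# Illustrative sketch: one service built from a Dockerfile, one from a pre-built image
services:
  api:
    build: ./api                   # Compose builds the image from ./api/Dockerfile
    ports:
      - "8000:8000"
    depends_on:
      - db                         # start the database container before the API
  db:
    image: postgres:16             # pre-built image, no Dockerfile needed
    environment:
      POSTGRES_PASSWORD: example   # placeholder value for local use only
    volumes:
      - db_data:/var/lib/postgresql/data
volumes:
  db_data:                         # named volume so data survives container recreation
```

The Dockerfile answers "what goes into the api image", while the Compose file answers "which containers run together, how they reach each other, and where their data lives".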

Q: Is Docker Compose suitable for production deployments?

A: Docker Compose is excellent for local development, testing, and smaller-scale single-host deployments. However, for large-scale production environments requiring high availability, dynamic scaling, and advanced operational features across multiple machines, a dedicated orchestrator like Kubernetes or Docker Swarm is generally recommended.